Gradle User Manual: Version 8.7

- OVERVIEW

- RELEASES

- RUNNING GRADLE BUILDS

- CORE CONCEPTS

- OTHER TOPICS

- AUTHORING GRADLE BUILDS

- THE BASICS

- STRUCTURING BUILDS

- DEVELOPING TASKS

- Configuring Tasks Lazily

- Understanding Lazy properties

- Creating a Property or Provider instance

- Connecting properties together

- Working with files

- Working with task inputs and outputs

- Working with collections

- Working with maps

- Applying a convention to a property

- Making a property unmodifiable

- Using the Provider API

- DEVELOPING PLUGINS

- BEST PRACTICES

- OTHER TOPICS

- AUTHORING JVM BUILDS

- JAVA TOOLCHAINS

- JVM PLUGINS

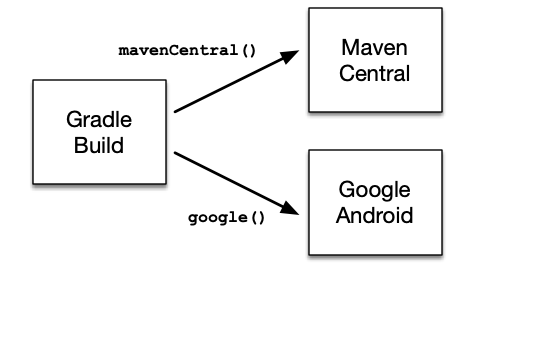

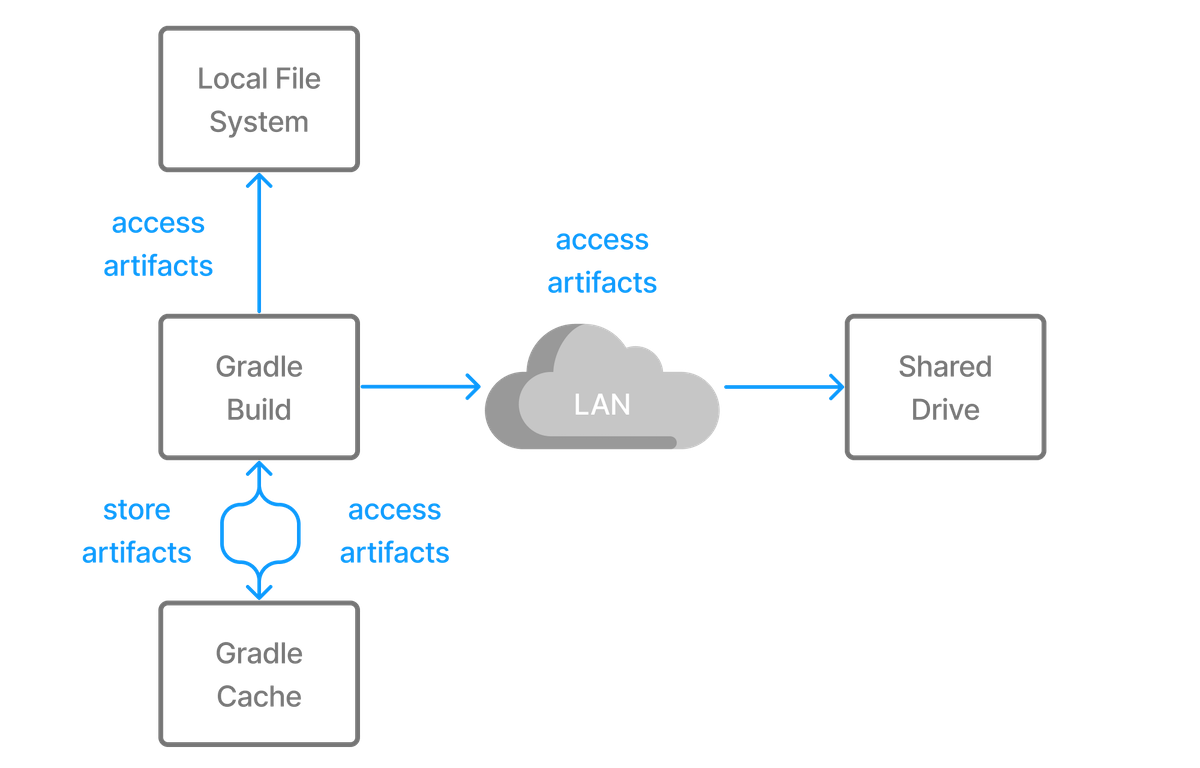

- WORKING WITH DEPENDENCIES

- THE BASICS

- DECLARING VERSIONS

- CONTROLLING TRANSITIVES

- Upgrading versions of transitive dependencies

- Downgrading versions and excluding dependencies

- Sharing dependency versions between projects

- Aligning dependency versions

- Handling mutually exclusive dependencies

- Fixing metadata with component metadata rules

- Customizing resolution of a dependency directly

- Preventing accidental dependency upgrades

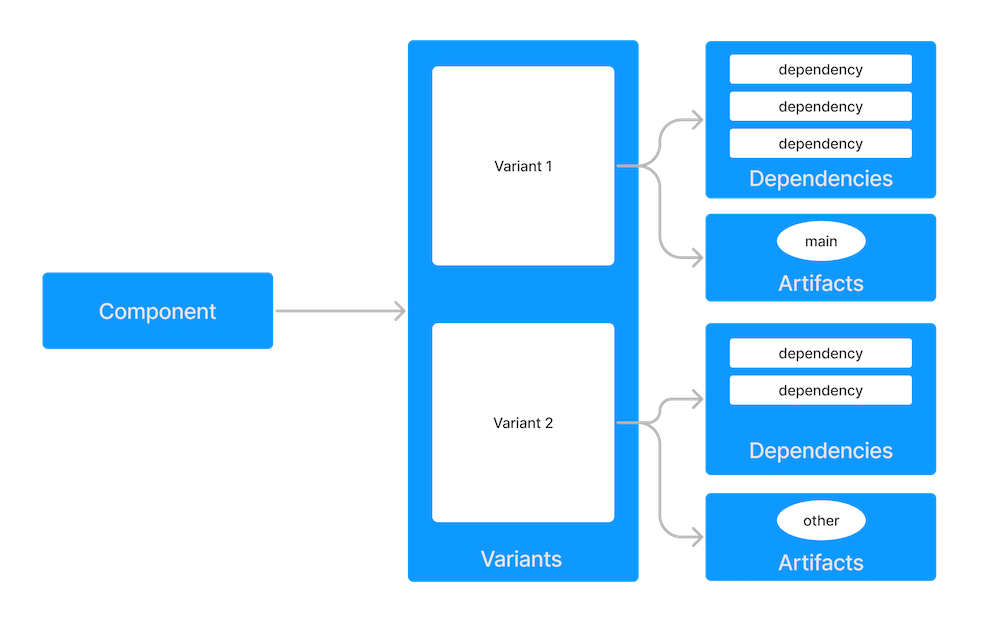

- PRODUCING AND CONSUMING VARIANTS OF LIBRARIES

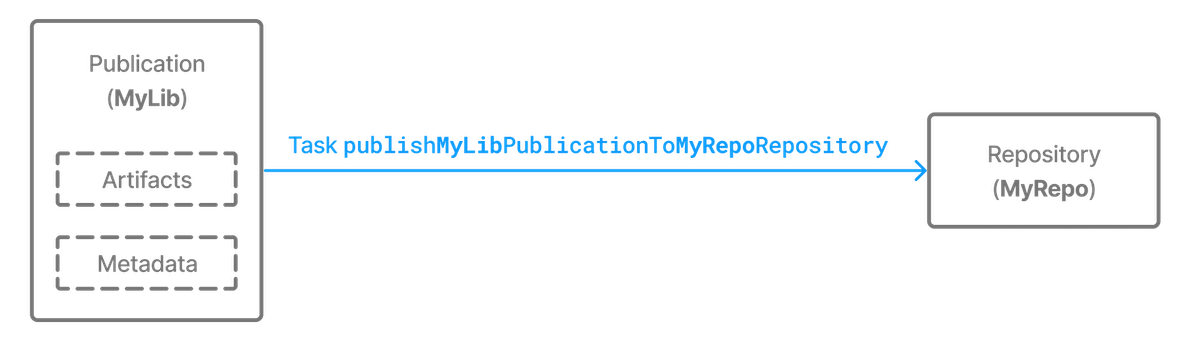

- PUBLISHING LIBRARIES

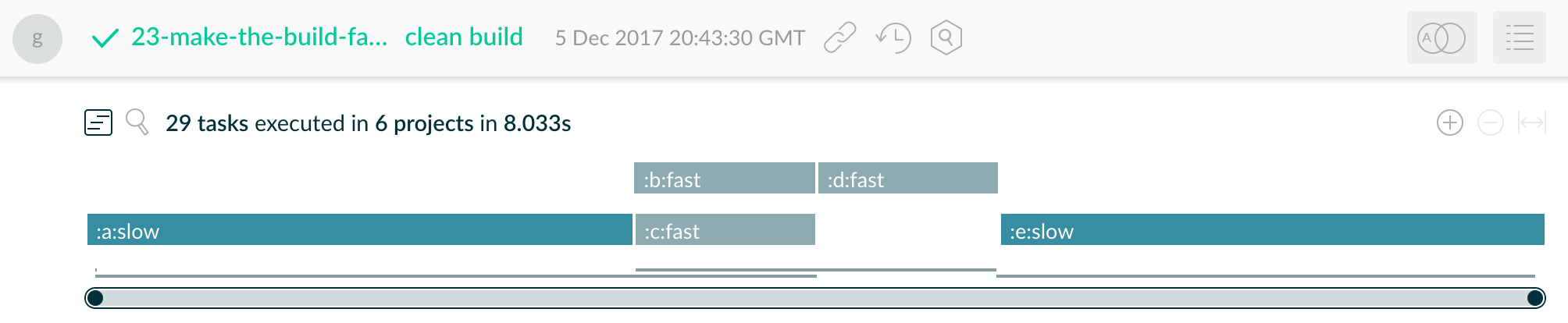

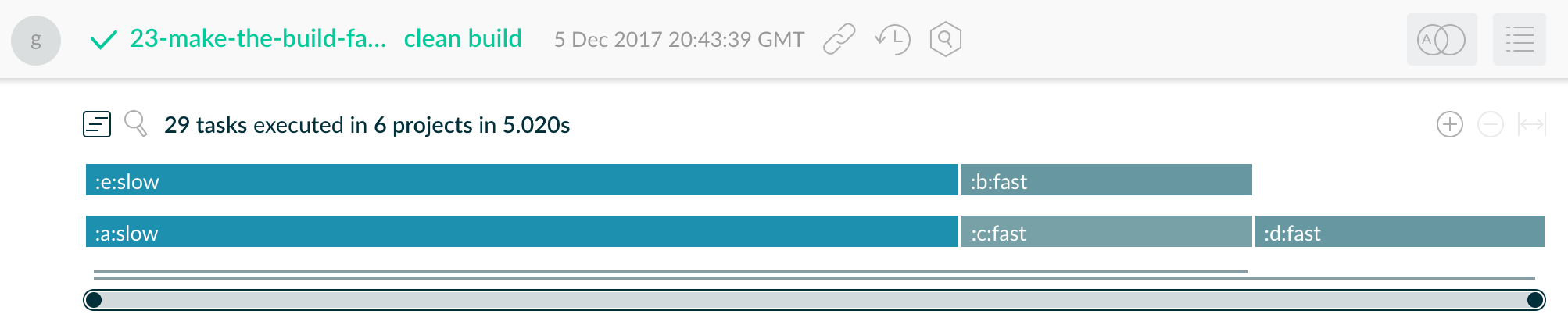

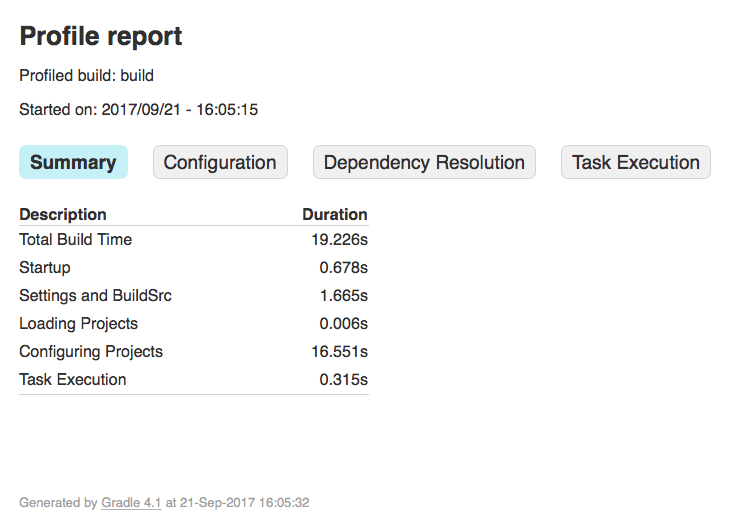

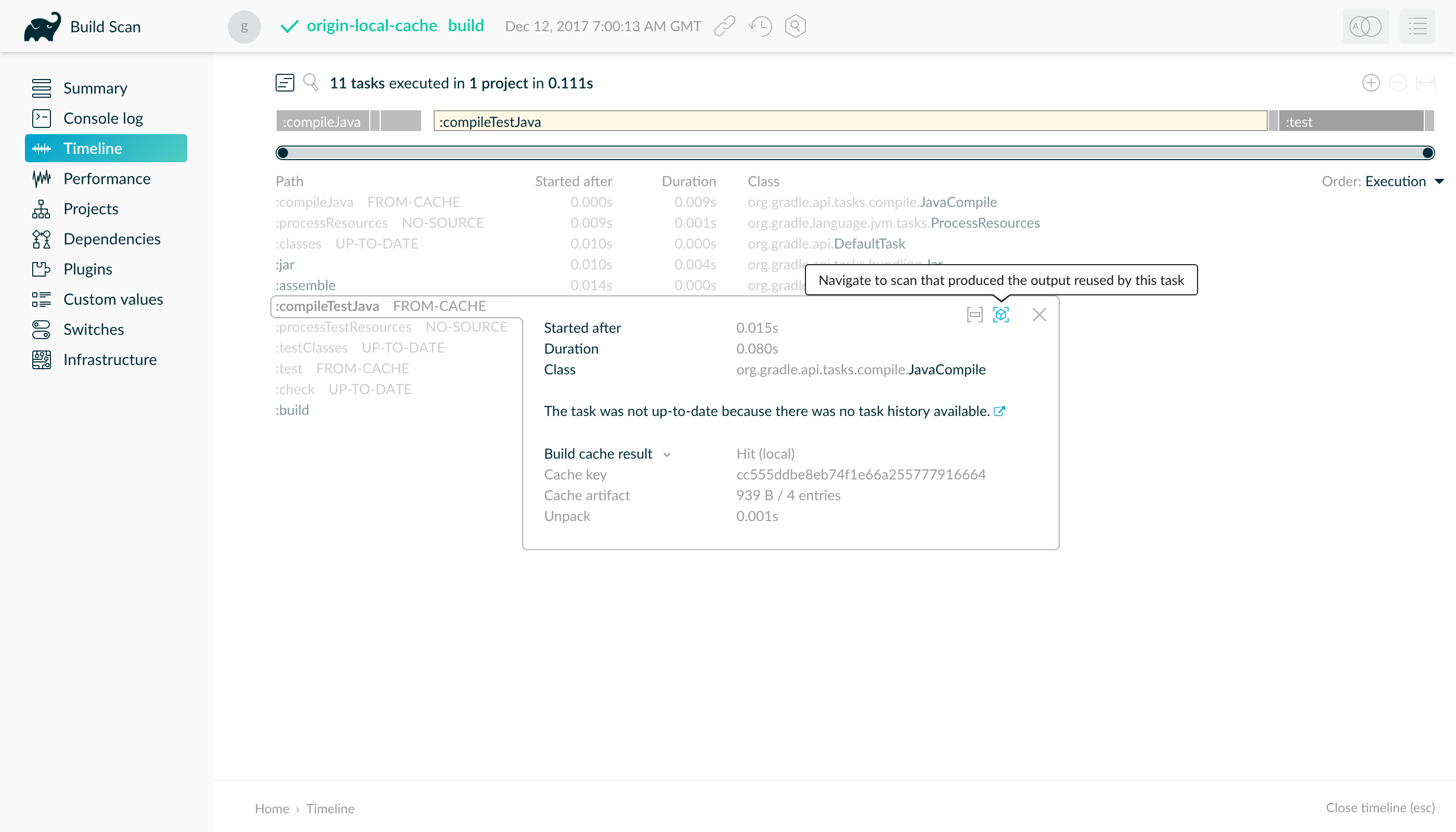

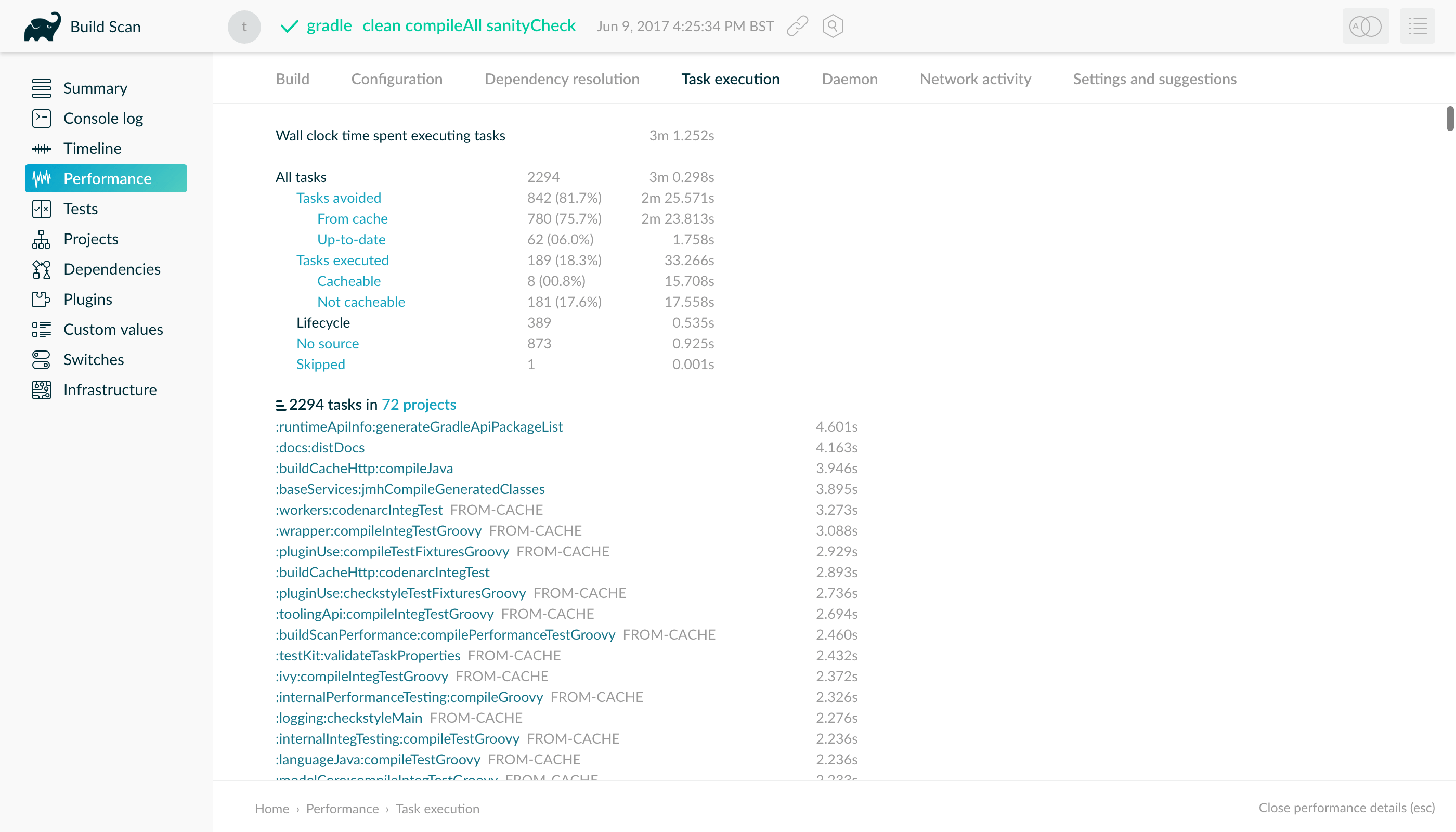

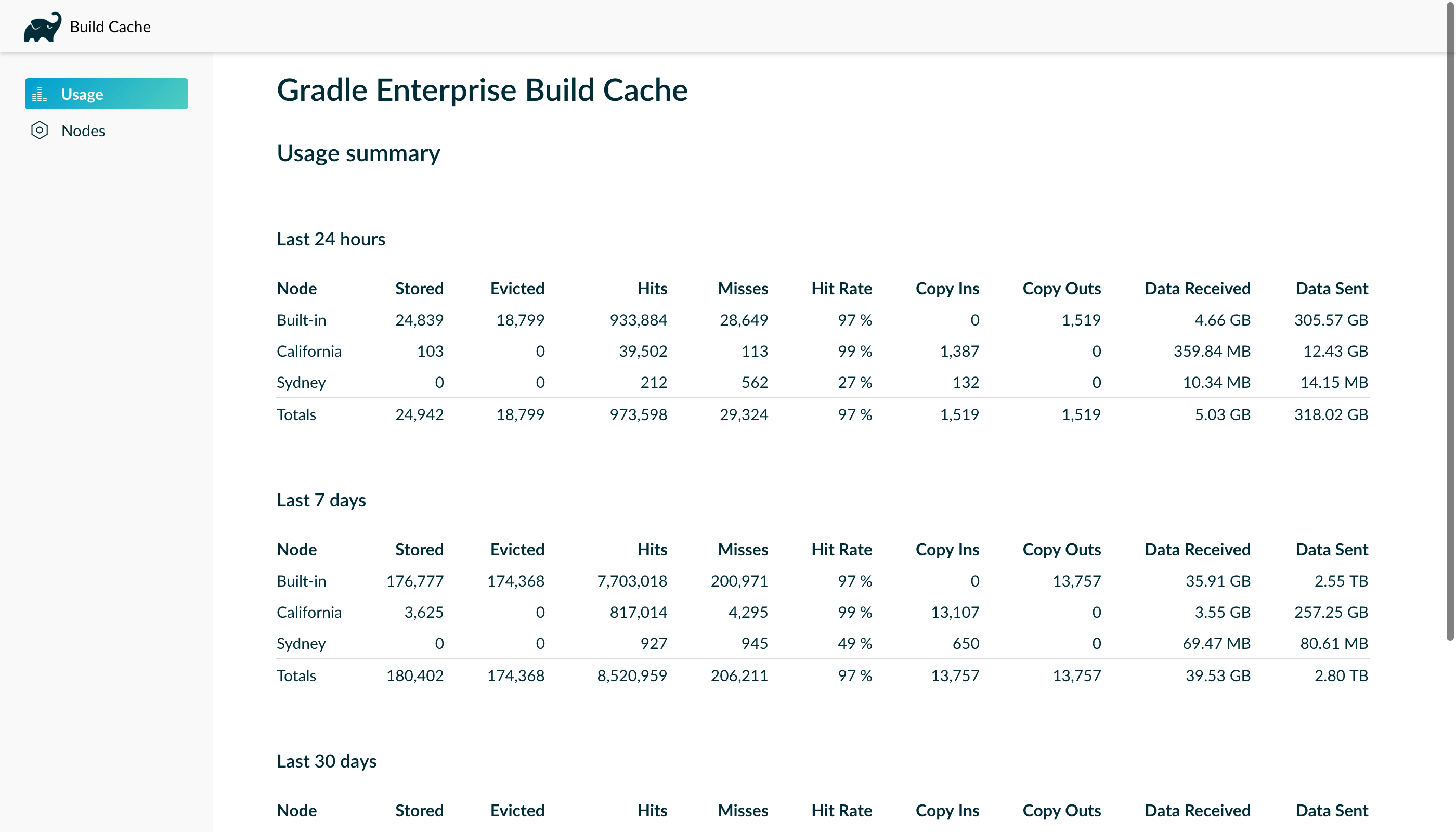

- OPTIMIZING BUILD PERFORMANCE

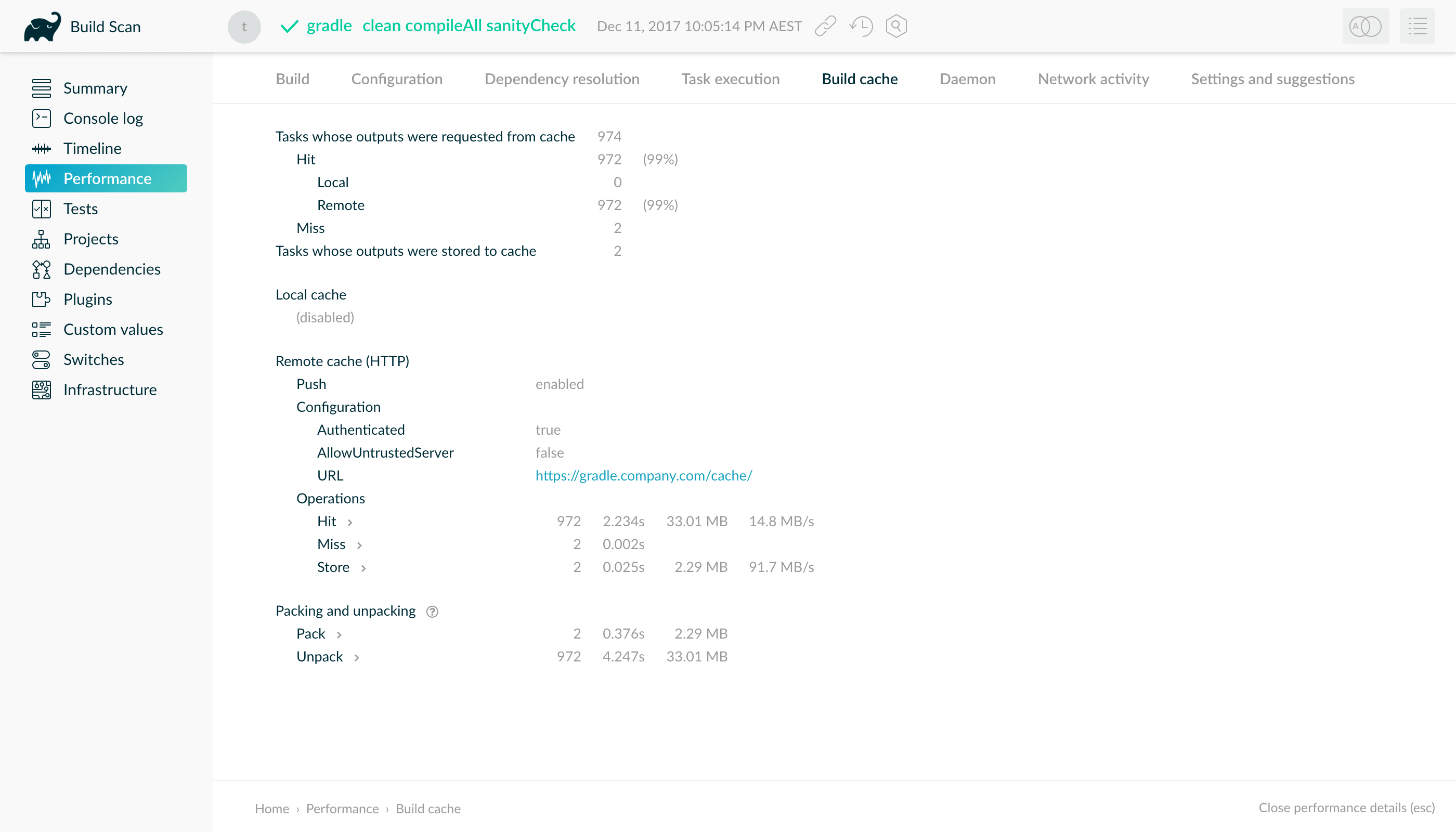

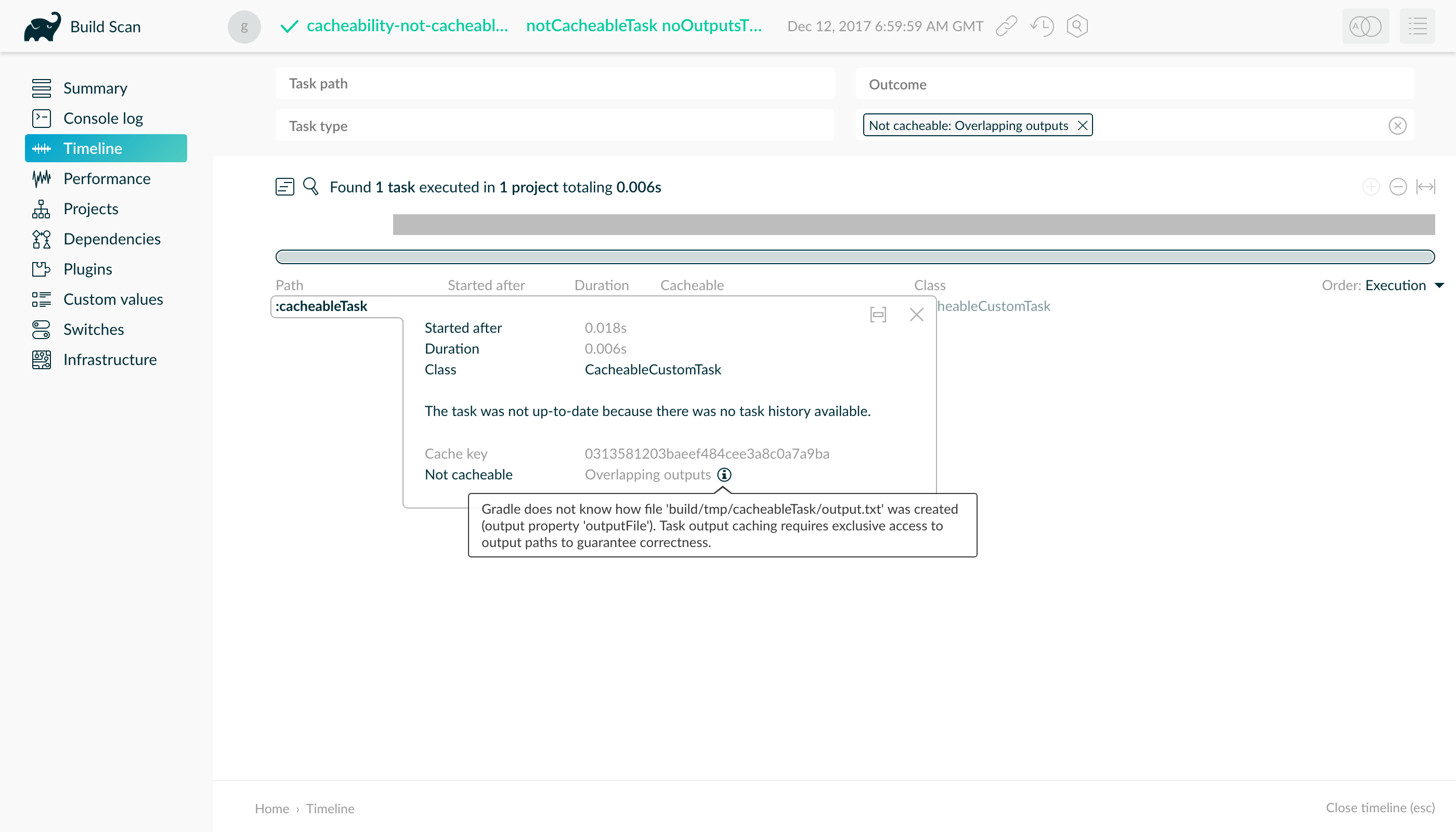

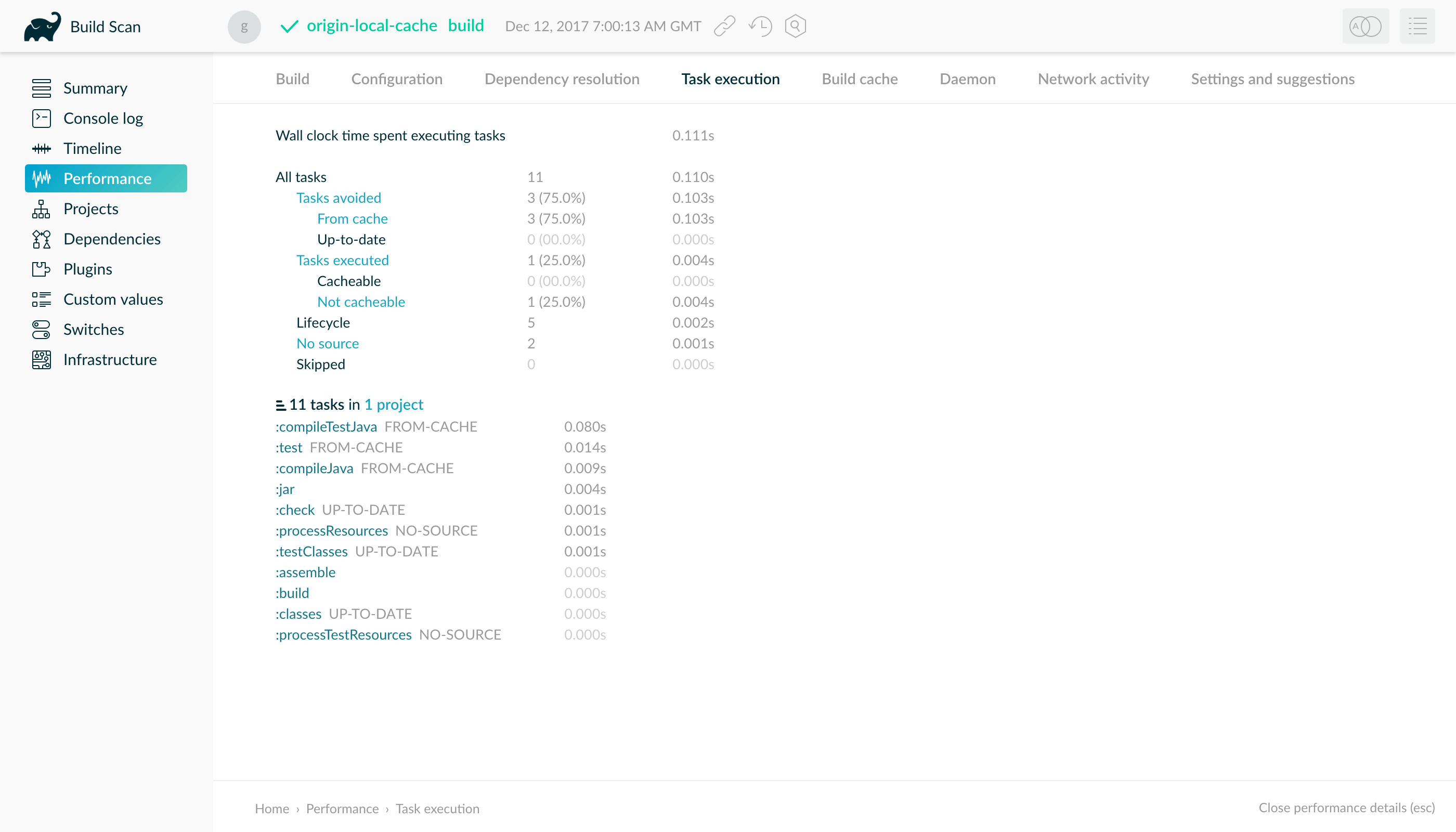

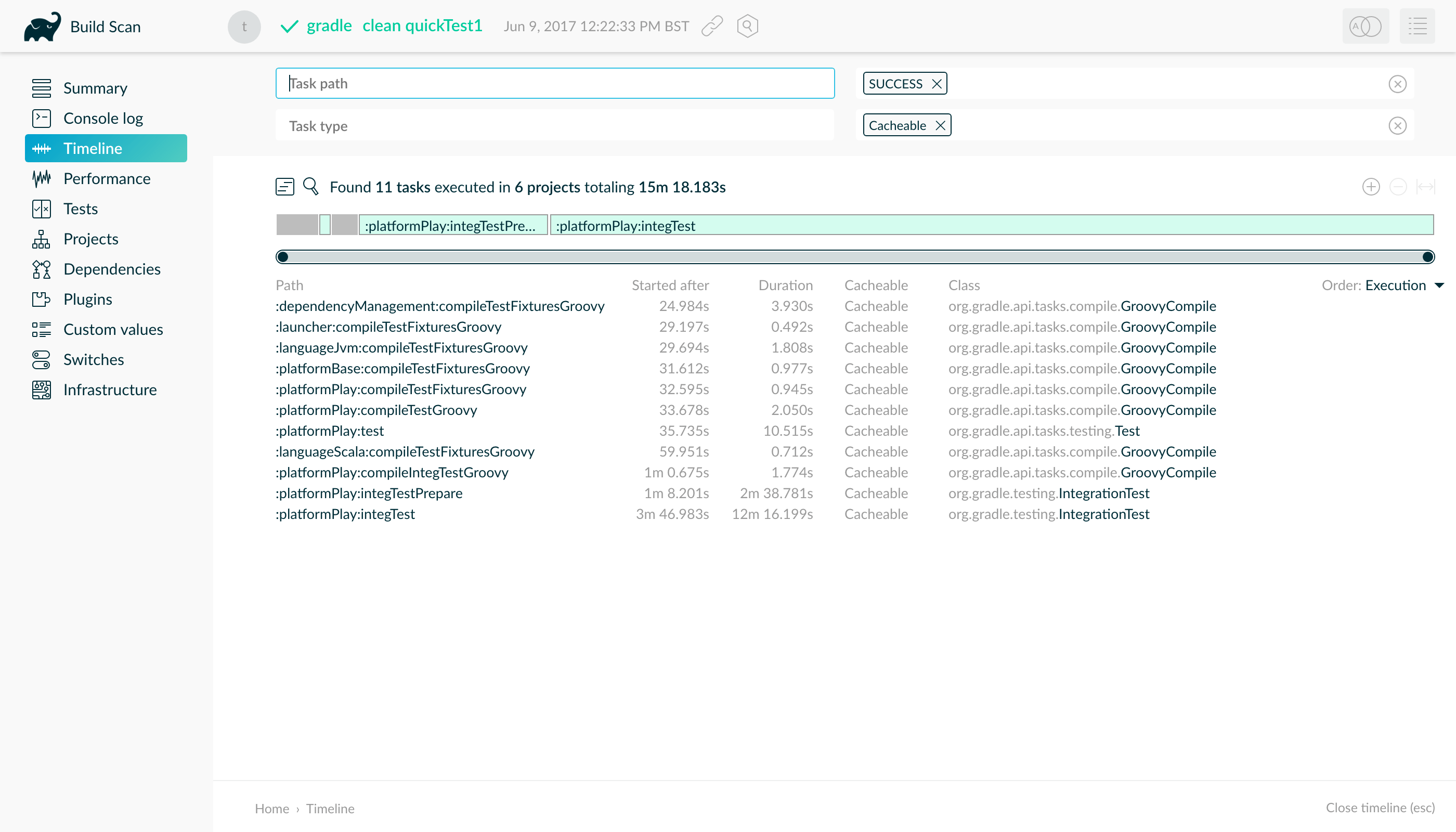

- USING THE BUILD CACHE

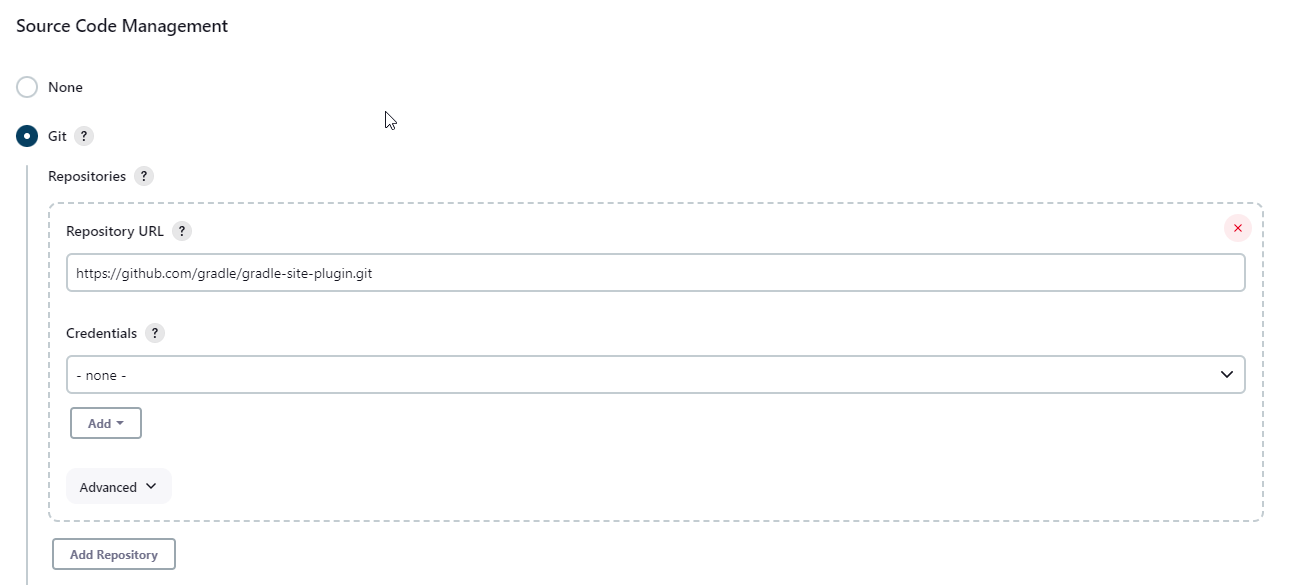

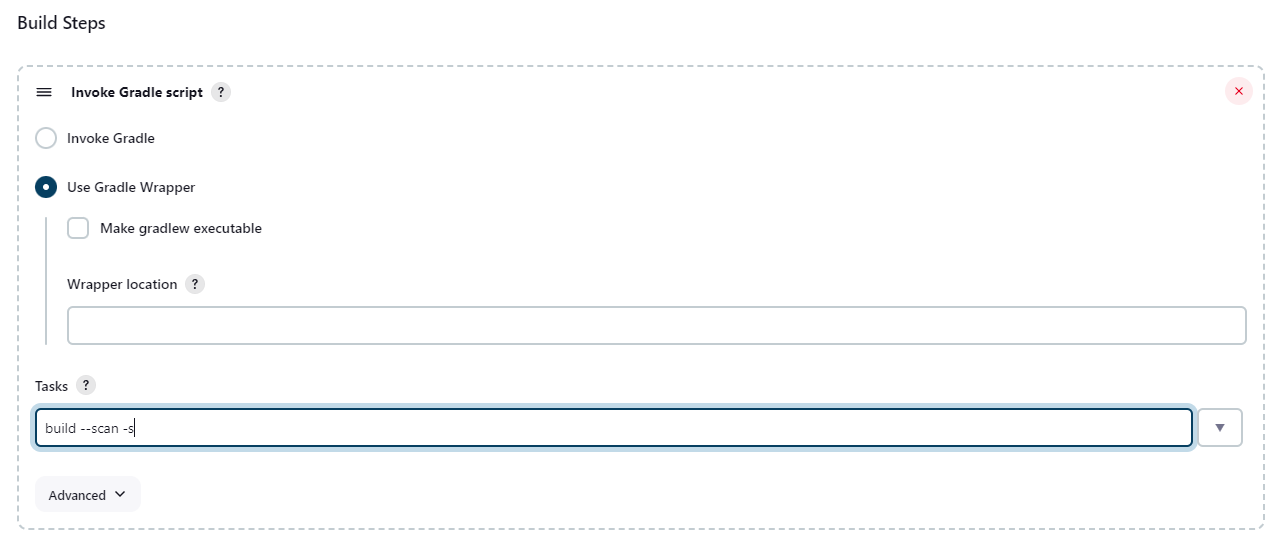

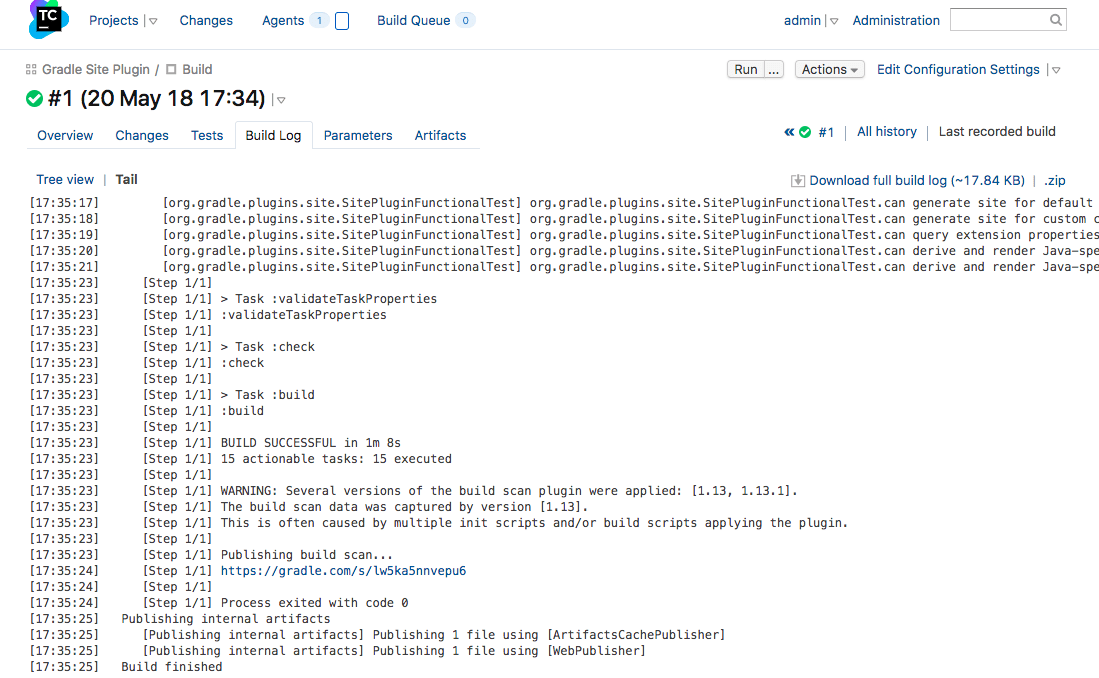

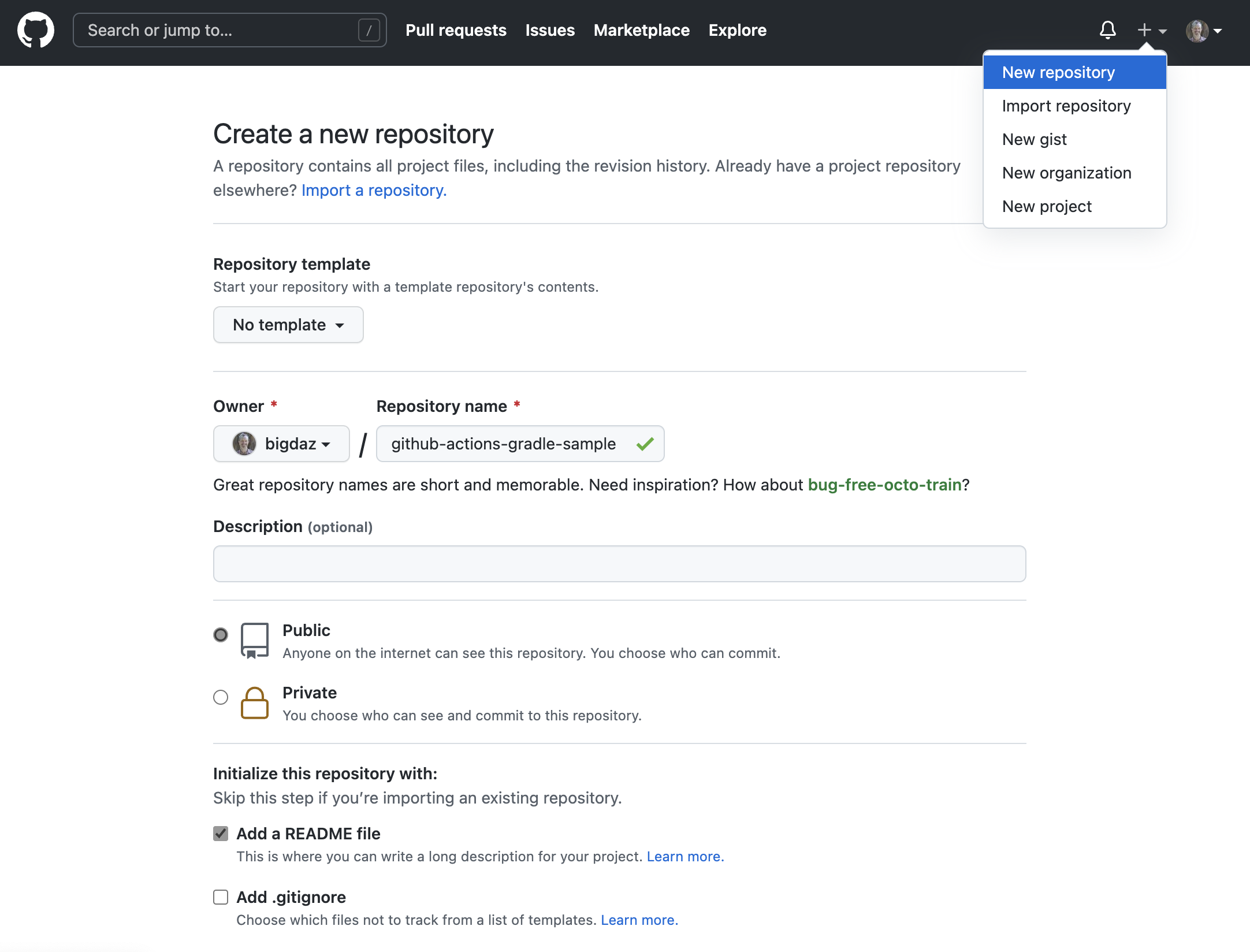

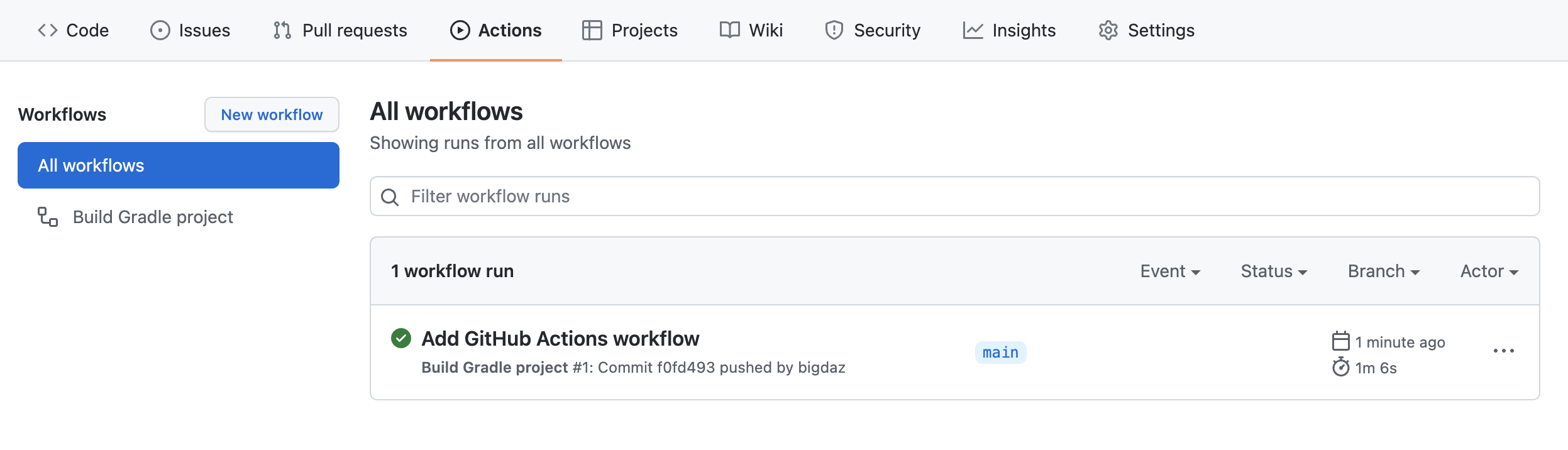

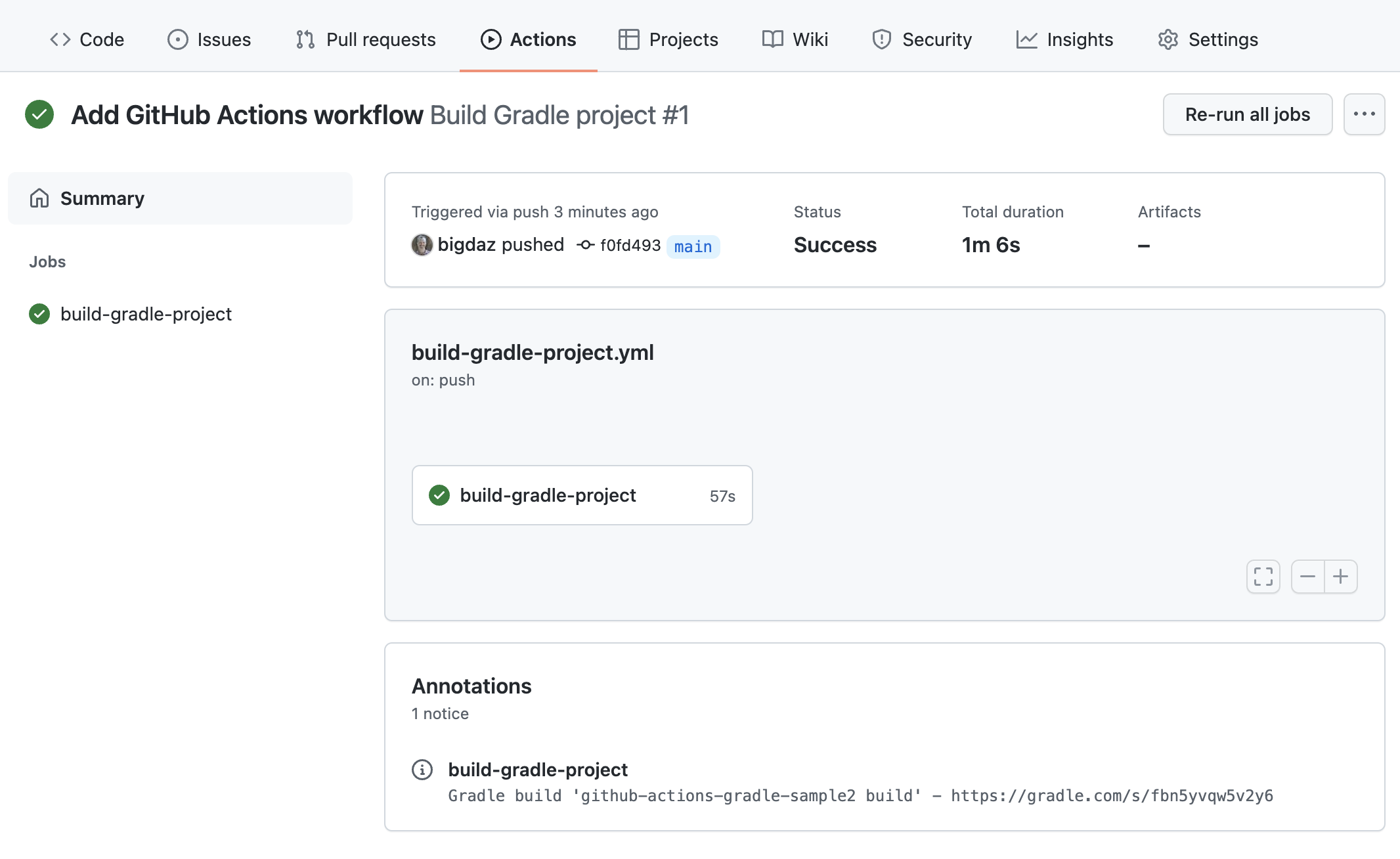

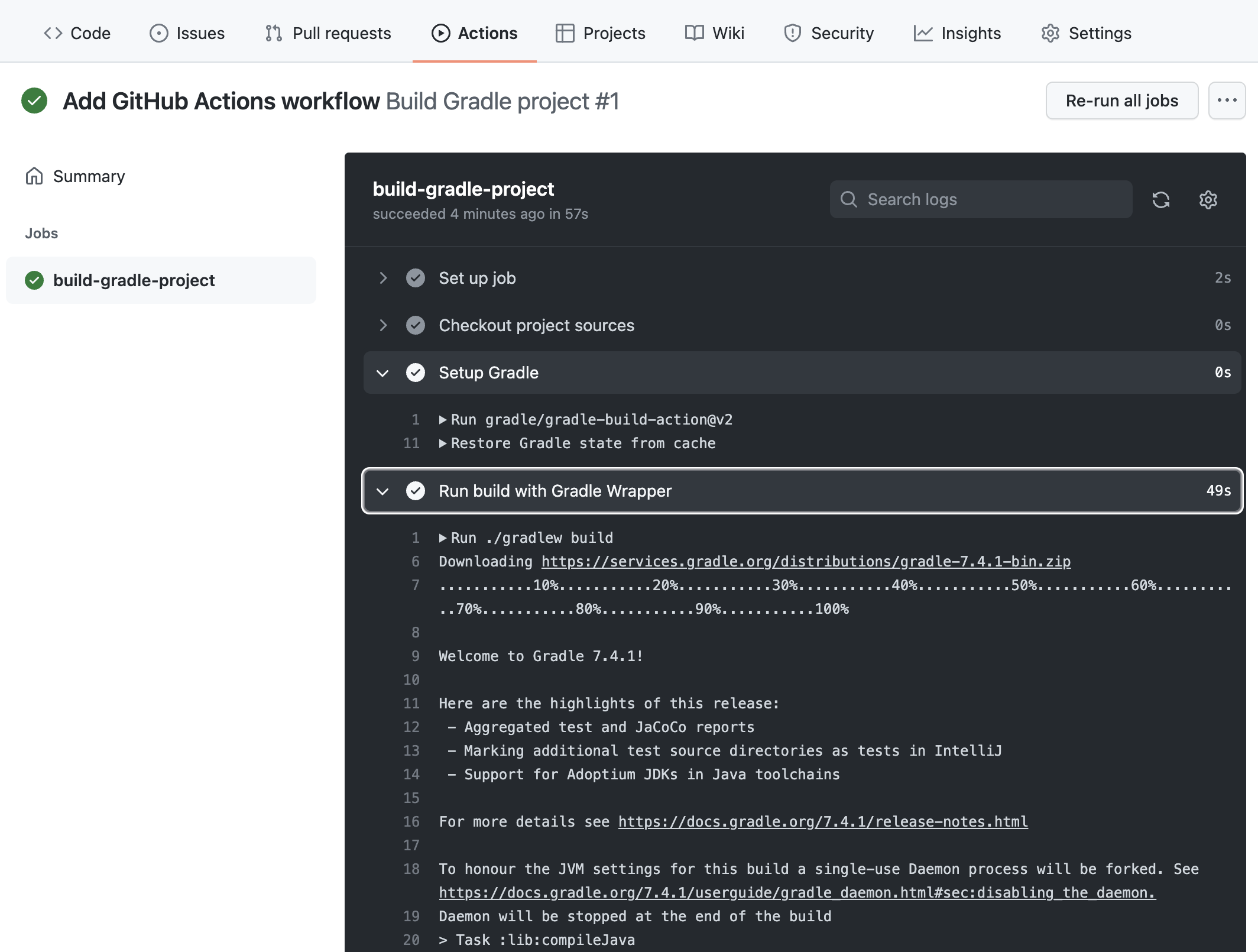

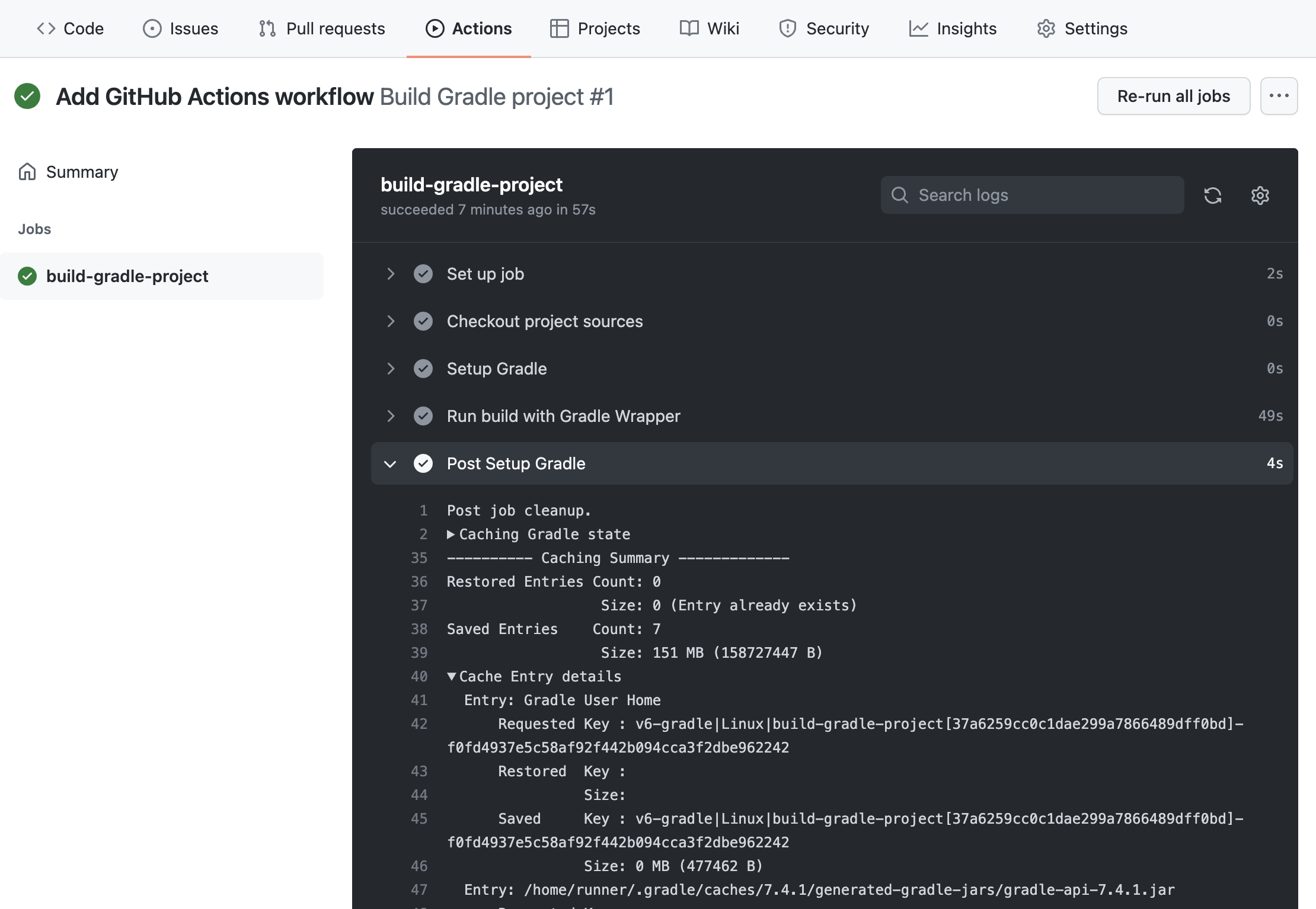

- GRADLE ON CI

- REFERENCE

- GRADLE DSLs and API

- LICENSE INFORMATION

OVERVIEW

Gradle User Manual

Gradle Build Tool

Gradle Build Tool is a fast, dependable, and adaptable open-source build automation tool with an elegant and extensible declarative build language.

In this User Manual, Gradle Build Tool is abbreviated Gradle.

Why Gradle?

Gradle is a widely used and mature tool with an active community and a strong developer ecosystem.

- Gradle is the most popular build system for the JVM and is the default system for Android and Kotlin Multi-Platform projects. It has a rich community plugin ecosystem.

- Gradle can automate a wide range of software build scenarios using either its built-in functionality, third-party plugins, or custom build logic.

- Gradle provides a high-level, declarative, and expressive build language that makes it easy to read and write build logic.

- Gradle is fast, scalable, and can build projects of any size and complexity.

- Gradle produces dependable results while benefiting from optimizations such as incremental builds, build caching, and parallel execution.

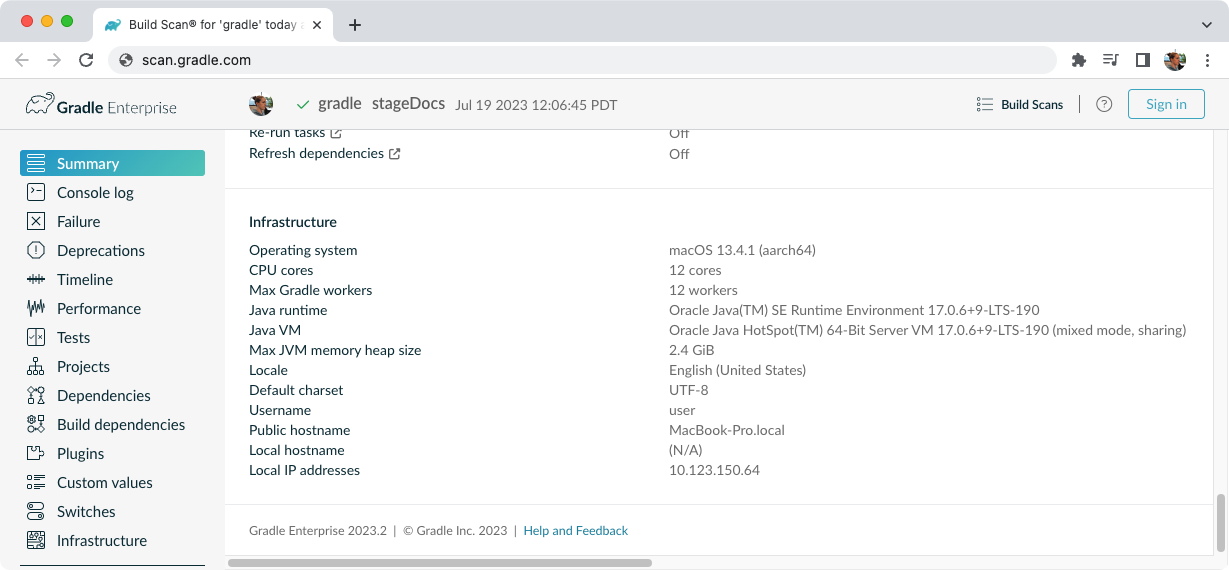

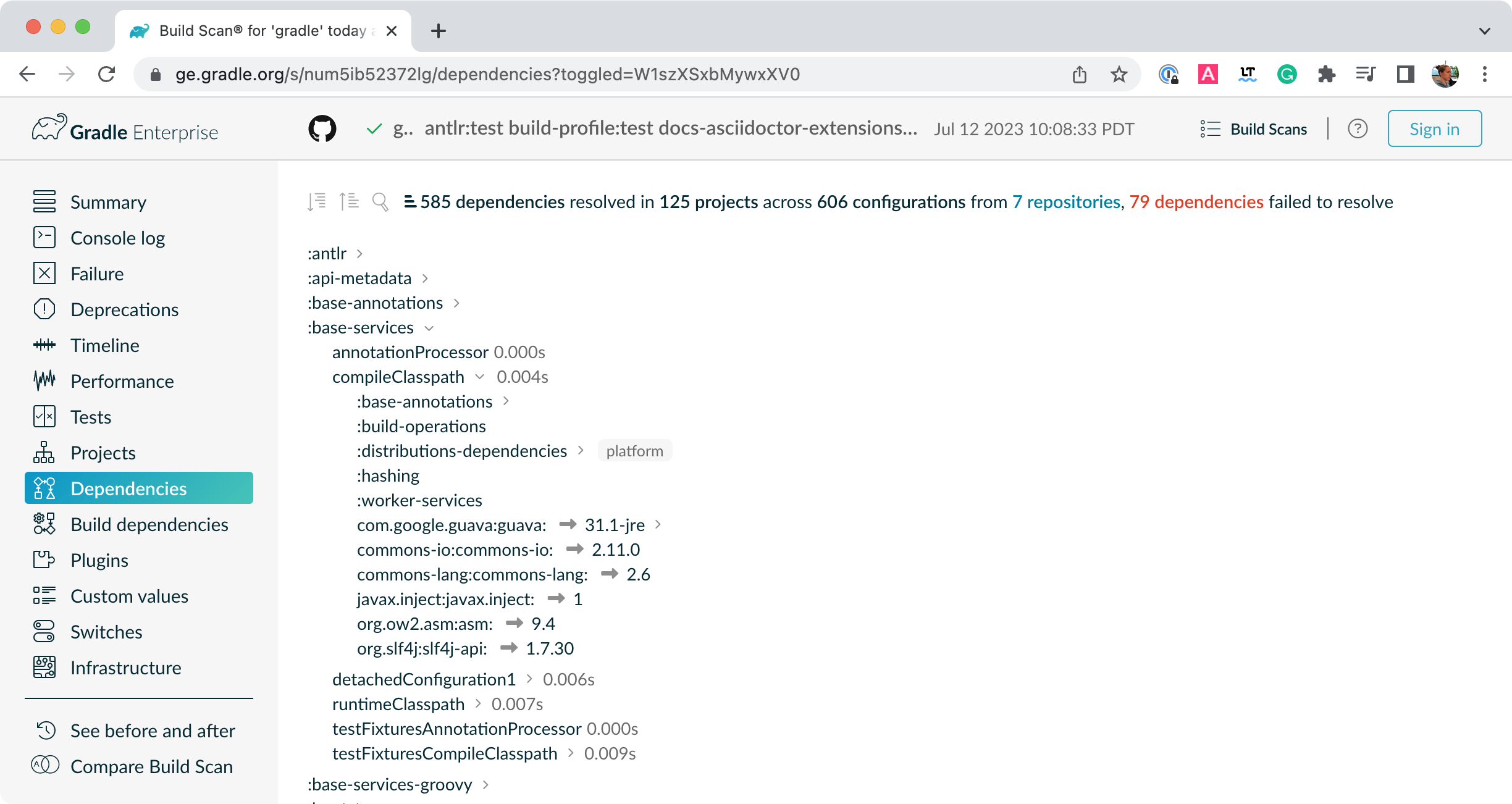

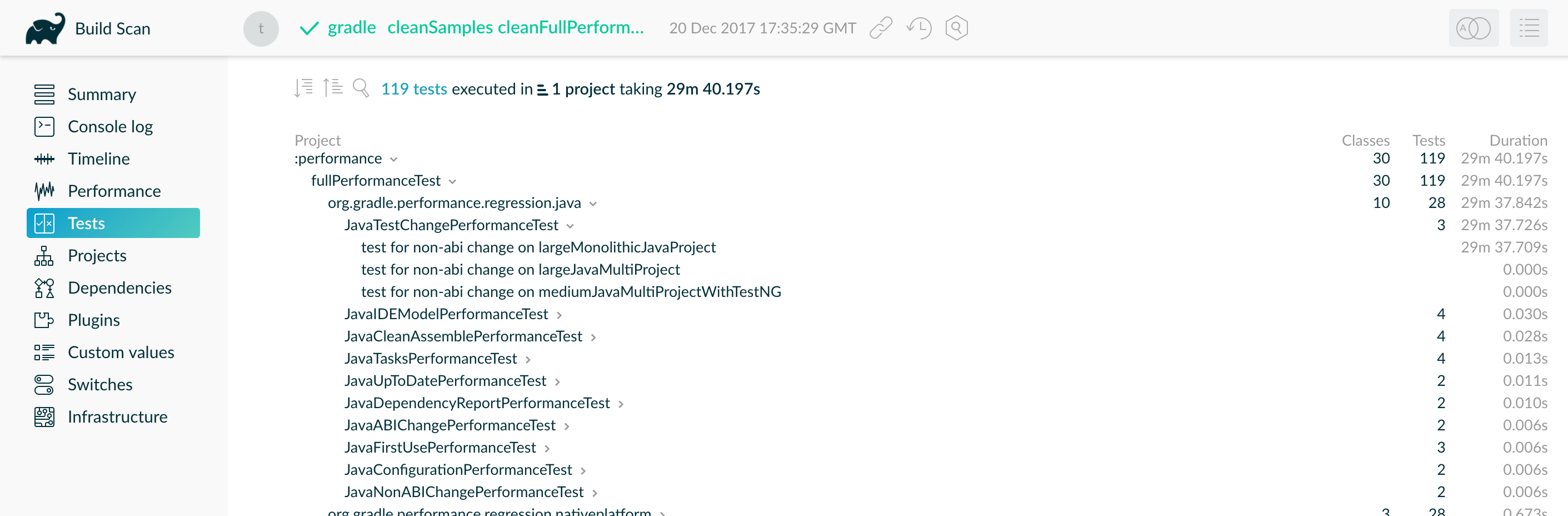

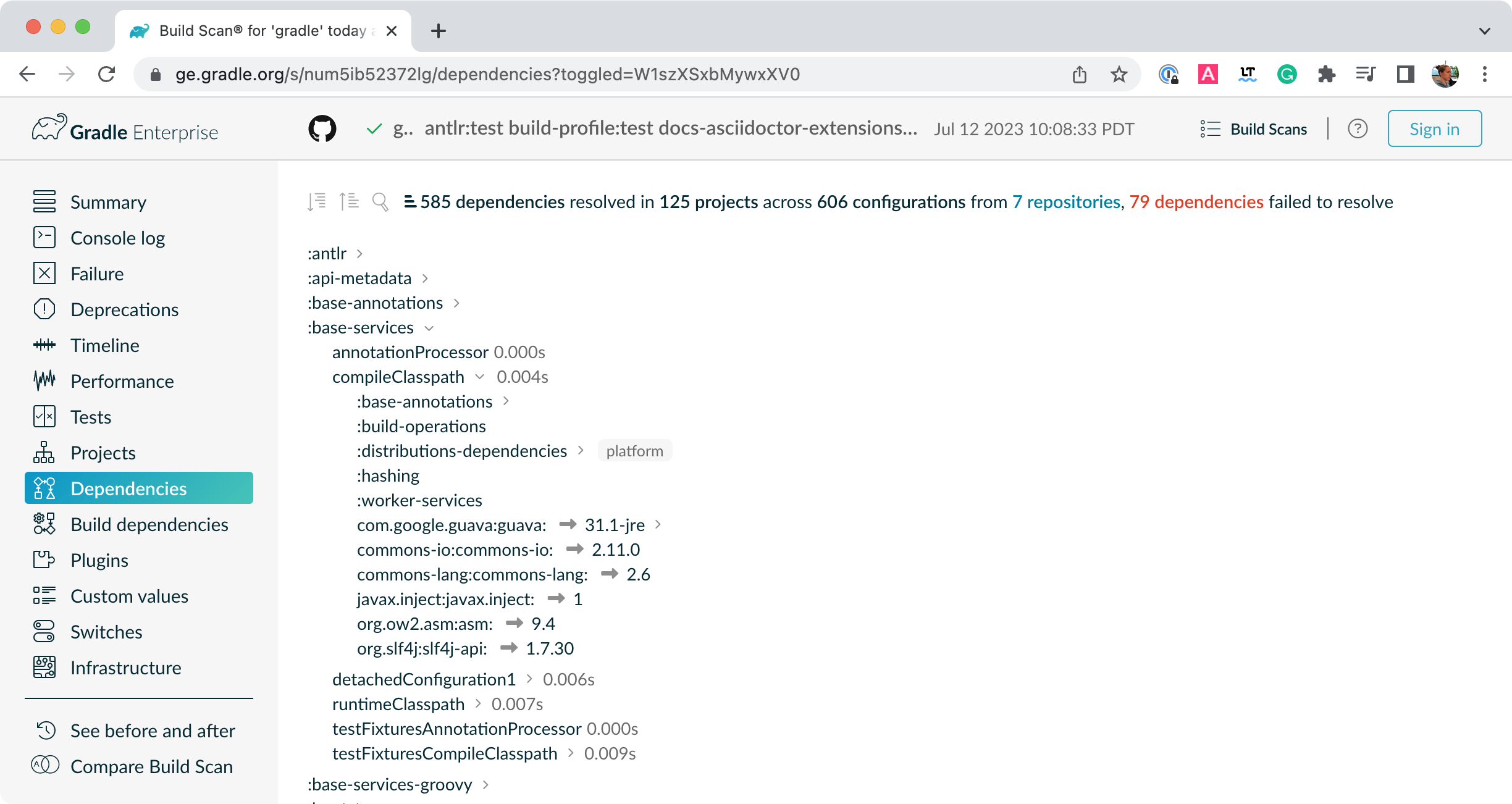

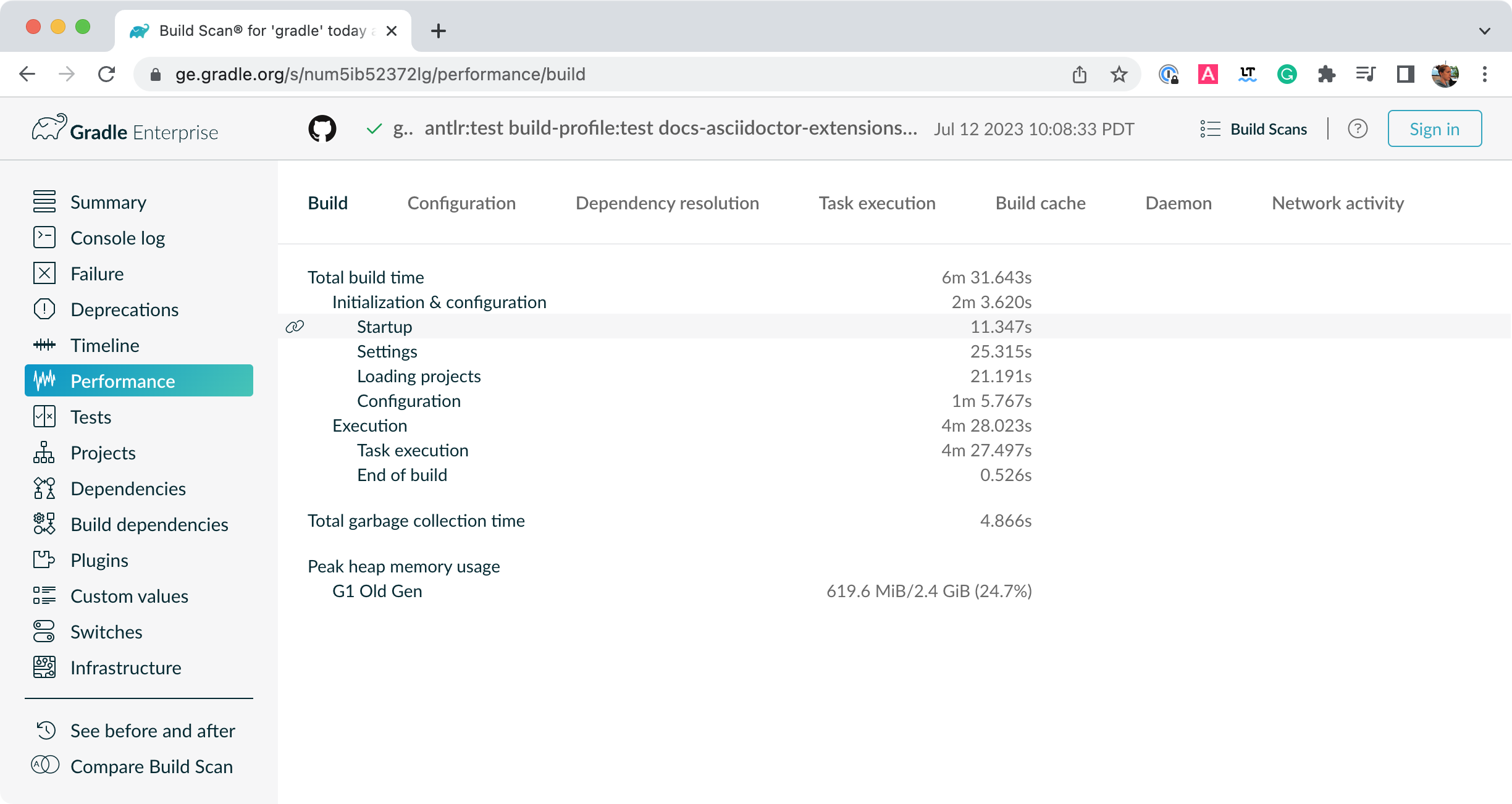

Gradle, Inc. provides a free service called Build Scan® that provides extensive information and insights about your builds. You can view scans to identify problems or share them for debugging help.

Supported Languages and Frameworks

Gradle supports Android, Java, Kotlin Multiplatform, Groovy, Scala, JavaScript, and C/C++.

Compatible IDEs

All major IDEs support Gradle, including Android Studio, IntelliJ IDEA, Visual Studio Code, Eclipse, and NetBeans.

You can also invoke Gradle via its command-line interface (CLI) in your terminal or through your continuous integration (CI) server.

Education

The Gradle User Manual is the official documentation for the Gradle Build Tool.

- Getting Started Tutorial: Learn Gradle basics and the benefits of building your app with Gradle.

- Training Courses: Head over to the courses page to sign up for free Gradle training.

Support

- Forum: The fastest way to get help is through the Gradle Forum.

- Slack: Community members and core contributors answer questions directly on our Slack channel.

Licenses

Gradle Build Tool source code is open and licensed under the Apache License 2.0. The Gradle User Manual and DSL Reference are licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

The User Manual

Explore our guides and examples to use Gradle.

Releases

Information on Gradle releases and how to install Gradle is found on the Installation page.

Content

The Gradle User Manual is broken down into the following sections:

- Running Gradle Builds: Learn Gradle basics and how to use Gradle to build your project.

- Authoring Gradle Builds: Develop tasks and plugins to customize your build.

- Authoring JVM Builds: Use Gradle with your Java project.

- Working with Dependencies: Add dependencies to your build.

- Optimizing Builds: Use caches to optimize your build and understand the Gradle daemon, incremental builds and file system watching.

- Gradle on CI: Gradle integration with popular continuous integration (CI) servers.

RELEASES

Installing Gradle

Gradle Installation

If all you want to do is run an existing Gradle project, then you don’t need to install Gradle if the build uses the Gradle Wrapper.

This is identifiable by the presence of the gradlew or gradlew.bat files in the root of the project:

    .                   // (1)
    ├── gradle
    │   └── wrapper     // (2)
    ├── gradlew         // (3)
    ├── gradlew.bat     // (3)
    └── ⋮

(1) Project root directory.

(2) Gradle Wrapper directory.

(3) Scripts for executing Gradle builds.

If the gradlew or gradlew.bat files are already present in your project, you do not need to install Gradle, but you must still make sure your system satisfies Gradle’s prerequisites.
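The Wrapper check described above can be scripted. The following is a minimal sketch for a POSIX shell; the function name `has_gradle_wrapper` is hypothetical and not part of Gradle.

```shell
# Hypothetical helper (not part of Gradle): succeeds when the given
# directory contains a Gradle Wrapper script.
has_gradle_wrapper() {
  project_dir=${1:-.}
  [ -f "$project_dir/gradlew" ] || [ -f "$project_dir/gradlew.bat" ]
}

# Example: report whether the current directory can be built without
# a separate Gradle installation.
if has_gradle_wrapper .; then
  echo "Wrapper found: no separate Gradle installation needed."
else
  echo "No Wrapper found: a local Gradle installation is required."
fi
```

The check only looks for the two script files; it does not validate the contents of the gradle/wrapper directory.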

You can follow the steps in the Upgrading Gradle section if you want to update the Gradle version for your project. Please use the Gradle Wrapper to upgrade Gradle.

Android Studio comes with a working installation of Gradle, so you don’t need to install Gradle separately when only working within that IDE.

If you do not meet the criteria above and decide to install Gradle on your machine, first check if Gradle is already installed by running gradle -v in your terminal.

If the command is not found or prints nothing, then Gradle is not installed, and you can follow the instructions below.

You can install Gradle Build Tool on Linux, macOS, or Windows. The installation can be done manually or using a package manager like SDKMAN! or Homebrew.

You can find all Gradle releases and their checksums on the releases page.

Prerequisites

Gradle runs on all major operating systems. It requires Java Development Kit (JDK) version 8 or higher to run. You can check the compatibility matrix for more information.

To check, run java -version:

    ❯ java -version
    openjdk version "11.0.18" 2023-01-17
    OpenJDK Runtime Environment Homebrew (build 11.0.18+0)
    OpenJDK 64-Bit Server VM Homebrew (build 11.0.18+0, mixed mode)

    ❯ java -version
    java version "1.8.0_151"
    Java(TM) SE Runtime Environment (build 1.8.0_151-b12)
    Java HotSpot(TM) 64-Bit Server VM (build 25.151-b12, mixed mode)
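The two banners above use different version schemes ("11.0.18" and the legacy "1.8.0_151"). As a rough sketch, the major version can be extracted and compared against the range this manual's compatibility matrix gives for running Gradle 8.7 (Java 8 through 21). The function names are hypothetical, and the parsing assumes the banner format shown above.

```shell
# Hypothetical helpers (not part of Gradle or the JDK).

# Print the major Java version found in a `java -version` banner line.
# Handles both the legacy "1.8.0_151" scheme and the modern "11.0.18" scheme.
java_major_version() {
  jv_raw=$(printf '%s\n' "$1" | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)
  case "$jv_raw" in
    1.*) jv_raw=${jv_raw#1.} ;;  # 1.8.0_151 -> 8.0_151
  esac
  # Keep everything before the first '.', '_' or '-'.
  printf '%s\n' "${jv_raw%%[._-]*}"
}

# Succeed when the given major version can run Gradle 8.7 (Java 8 through 21).
can_run_gradle_8_7() {
  [ "$1" -ge 8 ] && [ "$1" -le 21 ]
}

# Example: inspect the JDK on the PATH, if any. `java -version` writes to stderr.
if command -v java >/dev/null 2>&1; then
  banner=$(java -version 2>&1 | head -n 1)
  major=$(java_major_version "$banner")
  echo "Detected Java major version: ${major:-unknown}"
fi
```

Vendors may format the banner differently, so treat the parsed value as a hint rather than a guarantee.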

Gradle uses the JDK it finds in your path, the JDK used by your IDE, or the JDK specified by your project. In this example, the $PATH points to JDK17:

    ❯ echo $PATH
    /opt/homebrew/opt/openjdk@17/bin

You can also set the JAVA_HOME environment variable to point to a specific JDK installation directory.

This is especially useful when multiple JDKs are installed:

    ❯ echo %JAVA_HOME%
    C:\Program Files\Java\jdk1.7.0_80

    ❯ echo $JAVA_HOME
    /Library/Java/JavaVirtualMachines/jdk-16.jdk/Contents/Home

Linux installation

Installing with a package manager

SDKMAN! is a tool for managing parallel versions of multiple Software Development Kits on most Unix-like systems (macOS, Linux, Cygwin, Solaris and FreeBSD). Gradle is deployed and maintained by SDKMAN!:

❯ sdk install gradle

Other package managers are available, but the version of Gradle distributed by them is not controlled by Gradle, Inc. Linux package managers may distribute a modified version of Gradle that is incompatible or incomplete when compared to the official version.

Installing manually

Step 1 - Download the latest Gradle distribution

The distribution ZIP file comes in two flavors:

- Binary-only (bin)

- Complete (all) with docs and sources

We recommend downloading the bin file; it is a smaller file that is quick to download (and the latest documentation is available online).

Step 2 - Unpack the distribution

Unzip the distribution zip file in the directory of your choosing, e.g.:

    ❯ mkdir /opt/gradle
    ❯ unzip -d /opt/gradle gradle-8.7-bin.zip
    ❯ ls /opt/gradle/gradle-8.7
    LICENSE  NOTICE  bin  README  init.d  lib  media

Step 3 - Configure your system environment

To install Gradle, the path to the unpacked files needs to be in your PATH.

Configure your PATH environment variable to include the bin directory of the unzipped distribution, e.g.:

❯ export PATH=$PATH:/opt/gradle/gradle-8.7/bin

Alternatively, you could also add the environment variable GRADLE_HOME and point this to the unzipped distribution.

Instead of adding a specific version of Gradle to your PATH, you can add $GRADLE_HOME/bin to your PATH.

When upgrading to a different version of Gradle, simply change the GRADLE_HOME environment variable.

export GRADLE_HOME=/opt/gradle/gradle-8.7

export PATH=${GRADLE_HOME}/bin:${PATH}
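If these exports live in a shell startup file that may be sourced more than once, the same entry can pile up in PATH. A minimal sketch that avoids this is shown below; `prepend_to_path` is a hypothetical helper, and the install location simply reuses the example path above.

```shell
# Hypothetical helper: prepend a directory to PATH only if it is not already listed.
prepend_to_path() {
  case ":$PATH:" in
    *":$1:"*) ;;               # already present, leave PATH unchanged
    *) PATH="$1:$PATH" ;;
  esac
  export PATH
}

# Assumed install location, matching the example above.
export GRADLE_HOME=/opt/gradle/gradle-8.7
prepend_to_path "$GRADLE_HOME/bin"
```

When upgrading, only the GRADLE_HOME line needs to change.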

macOS installation

Installing with a package manager

SDKMAN! is a tool for managing parallel versions of multiple Software Development Kits on most Unix-like systems (macOS, Linux, Cygwin, Solaris and FreeBSD). Gradle is deployed and maintained by SDKMAN!:

❯ sdk install gradle

Using Homebrew:

❯ brew install gradle

Using MacPorts:

❯ sudo port install gradle

Other package managers are available, but the version of Gradle distributed by them is not controlled by Gradle, Inc.

Installing manually

Step 1 - Download the latest Gradle distribution

The distribution ZIP file comes in two flavors:

- Binary-only (bin)

- Complete (all) with docs and sources

We recommend downloading the bin file; it is a smaller file that is quick to download (and the latest documentation is available online).

Step 2 - Unpack the distribution

Unzip the distribution zip file in the directory of your choosing, e.g.:

    ❯ mkdir /usr/local/gradle
    ❯ unzip gradle-8.7-bin.zip -d /usr/local/gradle
    ❯ ls /usr/local/gradle/gradle-8.7
    LICENSE  NOTICE  README  bin  init.d  lib

Step 3 - Configure your system environment

To install Gradle, the path to the unpacked files needs to be in your PATH.

Configure your PATH environment variable to include the bin directory of the unzipped distribution, e.g.:

❯ export PATH=$PATH:/usr/local/gradle/gradle-8.7/bin

Alternatively, you could also add the environment variable GRADLE_HOME and point this to the unzipped distribution.

Instead of adding a specific version of Gradle to your PATH, you can add $GRADLE_HOME/bin to your PATH.

When upgrading to a different version of Gradle, simply change the GRADLE_HOME environment variable.

It’s a good idea to edit .bash_profile in your home directory to add the GRADLE_HOME variable:

    export GRADLE_HOME=/usr/local/gradle/gradle-8.7
    export PATH=$GRADLE_HOME/bin:$PATH

Windows installation

Installing manually

Step 1 - Download the latest Gradle distribution

The distribution ZIP file comes in two flavors:

- Binary-only (bin)

- Complete (all) with docs and sources

We recommend downloading the bin file.

Step 2 - Unpack the distribution

Create a new directory C:\Gradle with File Explorer.

Open a second File Explorer window and go to the directory where the Gradle distribution was downloaded. Double-click the ZIP archive to expose the content.

Drag the content folder gradle-8.7 to your newly created C:\Gradle folder.

Alternatively, you can unpack the Gradle distribution ZIP into C:\Gradle using the archiver tool of your choice.

Step 3 - Configure your system environment

To install Gradle, the path to the unpacked files needs to be in your Path.

In File Explorer right-click on the This PC (or Computer) icon, then click Properties → Advanced System Settings → Environment Variables.

Under System Variables select Path, then click Edit.

Add an entry for C:\Gradle\gradle-8.7\bin.

Click OK to save.

Alternatively, you can add the environment variable GRADLE_HOME and point this to the unzipped distribution.

Instead of adding a specific version of Gradle to your Path, you can add %GRADLE_HOME%\bin to your Path.

When upgrading to a different version of Gradle, just change the GRADLE_HOME environment variable.

Verify the installation

Open a console (or a Windows command prompt) and run gradle -v to display the version, e.g.:

    ❯ gradle -v

    ------------------------------------------------------------
    Gradle 8.7
    ------------------------------------------------------------

    Build time:   2023-03-03 16:41:37 UTC
    Revision:     7d6581558e226a580d91d399f7dfb9e3095c2b1d

    Kotlin:       1.8.10
    Groovy:       3.0.13
    Ant:          Apache Ant(TM) version 1.10.11 compiled on July 10 2021
    JVM:          17.0.6 (Homebrew 17.0.6+0)
    OS:           Mac OS X 13.2.1 aarch64

You can verify the integrity of the Gradle distribution by downloading the SHA-256 file (available from the releases page) and following these verification instructions.
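As an illustration of the integrity check, the sketch below compares a file's SHA-256 digest with an expected value. The helper name `verify_sha256` is hypothetical, and the script assumes that either `sha256sum` (common on Linux) or `shasum` (shipped with macOS) is available.

```shell
# Hypothetical helper: compare a file's SHA-256 digest with an expected value.
# Uses sha256sum where available and falls back to shasum -a 256.
verify_sha256() {
  if command -v sha256sum >/dev/null 2>&1; then
    actual=$(sha256sum "$1" | awk '{print $1}')
  else
    actual=$(shasum -a 256 "$1" | awk '{print $1}')
  fi
  [ "$actual" = "$2" ]
}

# Usage sketch; the .sha256 file name is an assumption about what you downloaded:
#   verify_sha256 gradle-8.7-bin.zip "$(cat gradle-8.7-bin.zip.sha256)" && echo OK
```

The comparison is case-sensitive, so the expected digest should be in lowercase hexadecimal, which is what both tools print.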

Compatibility Matrix

The sections below describe Gradle’s compatibility with several integrations. Versions not listed here may or may not work.

Java

A Java version between 8 and 21 is required to execute Gradle. Java 22 and later versions are not yet supported.

Java 6 and 7 can be used for compilation but are deprecated for use with testing. Testing with Java 6 and 7 will not be supported in Gradle 9.0.

Any fully supported version of Java can be used for compilation or testing. However, the latest Java version may only be supported for compilation or testing, not for running Gradle. Support is achieved using toolchains and applies to all tasks supporting toolchains.

See the table below for the Java version supported by a specific Gradle release:

| Java version | Support for toolchains | Support for running Gradle |
|---|---|---|
| 8 | N/A | 2.0 |
| 9 | N/A | 4.3 |
| 10 | N/A | 4.7 |
| 11 | N/A | 5.0 |
| 12 | N/A | 5.4 |
| 13 | N/A | 6.0 |
| 14 | N/A | 6.3 |
| 15 | 6.7 | 6.7 |
| 16 | 7.0 | 7.0 |
| 17 | 7.3 | 7.3 |
| 18 | 7.5 | 7.5 |
| 19 | 7.6 | 7.6 |
| 20 | 8.1 | 8.3 |
| 21 | 8.4 | 8.5 |
| 22 | 8.7 | N/A |
| 23 | N/A | N/A |
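For scripting, the "Support for running Gradle" column of the Java table above can be transcribed into a small lookup. This is only a sketch: the function name is hypothetical and the values are copied from the table, so it needs updating whenever the table changes.

```shell
# Hypothetical lookup, transcribed from the Java compatibility table:
# prints the first Gradle version that can run on the given Java major version.
min_gradle_to_run_on_java() {
  case "$1" in
    8)  echo 2.0 ;;
    9)  echo 4.3 ;;
    10) echo 4.7 ;;
    11) echo 5.0 ;;
    12) echo 5.4 ;;
    13) echo 6.0 ;;
    14) echo 6.3 ;;
    15) echo 6.7 ;;
    16) echo 7.0 ;;
    17) echo 7.3 ;;
    18) echo 7.5 ;;
    19) echo 7.6 ;;
    20) echo 8.3 ;;
    21) echo 8.5 ;;
    *)  echo "N/A"; return 1 ;;   # not listed as supported for running Gradle
  esac
}
```

For example, `min_gradle_to_run_on_java 17` prints 7.3, while Java 22 yields N/A and a non-zero exit status.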

Kotlin

Gradle is tested with Kotlin 1.6.10 through 2.0.0-Beta3. Beta and RC versions may or may not work.

| Minimum Gradle version | Embedded Kotlin version | Kotlin Language version |
|---|---|---|
| 5.0 | 1.3.10 | 1.3 |
| 5.1 | 1.3.11 | 1.3 |
| 5.2 | 1.3.20 | 1.3 |
| 5.3 | 1.3.21 | 1.3 |
| 5.5 | 1.3.31 | 1.3 |
| 5.6 | 1.3.41 | 1.3 |
| 6.0 | 1.3.50 | 1.3 |
| 6.1 | 1.3.61 | 1.3 |
| 6.3 | 1.3.70 | 1.3 |
| 6.4 | 1.3.71 | 1.3 |
| 6.5 | 1.3.72 | 1.3 |
| 6.8 | 1.4.20 | 1.3 |
| 7.0 | 1.4.31 | 1.4 |
| 7.2 | 1.5.21 | 1.4 |
| 7.3 | 1.5.31 | 1.4 |
| 7.5 | 1.6.21 | 1.4 |
| 7.6 | 1.7.10 | 1.4 |
| 8.0 | 1.8.10 | 1.8 |
| 8.2 | 1.8.20 | 1.8 |
| 8.3 | 1.9.0 | 1.8 |
| 8.4 | 1.9.10 | 1.8 |
| 8.5 | 1.9.20 | 1.8 |
| 8.7 | 1.9.22 | 1.8 |

Groovy

Gradle is tested with Groovy 1.5.8 through 4.0.0.

Gradle plugins written in Groovy must use Groovy 3.x for compatibility with Gradle and Groovy DSL build scripts.

Android

Gradle is tested with Android Gradle Plugin 7.3 through 8.2. Alpha and beta versions may or may not work.

The Feature Lifecycle

Gradle is under constant development. New versions are delivered on a regular and frequent basis (approximately every six weeks) as described in the section on end-of-life support.

Continuous improvement combined with frequent delivery allows new features to be available to users early. Early users provide invaluable feedback, which is incorporated into the development process.

Getting new functionality into the hands of users regularly is a core value of the Gradle platform.

At the same time, API and feature stability are taken very seriously and considered a core value of the Gradle platform. Design choices and automated testing are engineered into the development process and formalized by the section on backward compatibility.

The Gradle feature lifecycle has been designed to meet these goals. It also communicates to users of Gradle what the state of a feature is. The term feature typically means an API or DSL method or property in this context, but it is not restricted to this definition. Command line arguments and modes of execution (e.g. the Build Daemon) are two examples of other features.

1. Internal

Internal features are not designed for public use and are only intended to be used by Gradle itself. They can change in any way at any point in time without any notice. Therefore, we recommend avoiding the use of such features. Internal features are not documented. If it appears in this User Manual, the DSL Reference, or the API Reference, then the feature is not internal.

Internal features may evolve into public features.

2. Incubating

Features are introduced in the incubating state to allow real-world feedback to be incorporated into the feature before making it public. It also gives users willing to test potential future changes early access.

A feature in an incubating state may change in future Gradle versions until it is no longer incubating. Changes to incubating features for a Gradle release will be highlighted in the release notes for that release. The incubation period for new features varies depending on the feature’s scope, complexity, and nature.

Incubating features are marked as such. In the source code, all methods/properties/classes that are incubating are annotated with @Incubating. This results in a special mark for them in the DSL and API references.

If an incubating feature is discussed in this User Manual, it will be explicitly said to be in the incubating state.

Feature Preview API

The feature preview API allows certain incubating features to be activated by adding enableFeaturePreview('FEATURE') in your settings file.

Individual preview features will be announced in release notes.

When incubating features are either promoted to public or removed, the feature preview flags for them become obsolete, have no effect, and should be removed from the settings file.

3. Public

The default state for a non-internal feature is public. Anything documented in the User Manual, DSL Reference, or API reference that is not explicitly said to be incubating or deprecated is considered public. Features are said to be promoted from an incubating state to public. The release notes for each release indicate which previously incubating features are being promoted by the release.

A public feature will never be removed or intentionally changed without undergoing deprecation. All public features are subject to the backward compatibility policy.

4. Deprecated

Some features may be replaced or become irrelevant due to the natural evolution of Gradle. Such features will eventually be removed from Gradle after being deprecated. A deprecated feature may become stale until it is finally removed according to the backward compatibility policy.

Deprecated features are marked as such. In the source code, all methods/properties/classes that are deprecated are annotated with @java.lang.Deprecated, which is reflected in the DSL and API References. In most cases, there is a replacement for the deprecated element, which will be described in the documentation. Using a deprecated feature will result in a runtime warning in Gradle’s output.

The use of deprecated features should be avoided. The release notes for each release indicate any features being deprecated by the release.

Backward compatibility policy

Gradle provides backward compatibility across major versions (e.g., 1.x, 2.x, etc.).

Once a public feature is introduced in a Gradle release, it will remain indefinitely unless deprecated.

Once deprecated, it may be removed in the next major release.

Deprecated features may be supported across major releases, but this is not guaranteed.

Release end-of-life Policy

Every day, a new nightly build of Gradle is created.

It contains all of the changes that made it through Gradle’s extensive continuous integration tests during that day. Nightly builds may contain new changes that may or may not be stable.

The Gradle team creates a pre-release distribution called a release candidate (RC) for each minor or major release. When no problems are found after a short time (usually a week), the release candidate is promoted to a general availability (GA) release. If a regression is found in the release candidate, a new RC distribution is created, and the process repeats. Release candidates are supported for as long as the release window is open, but they are not intended to be used for production. Bug reports are greatly appreciated during the RC phase.

The Gradle team may create additional patch releases to replace the final release due to critical bug fixes or regressions. For instance, Gradle 5.2.1 replaces the Gradle 5.2 release.

Once a release candidate has been made, all feature development moves on to the next release for the latest major version. As such, each minor Gradle release causes the previous minor releases in the same major version to become end-of-life (EOL). EOL releases do not receive bug fixes or feature backports.

For major versions, Gradle will backport critical fixes and security fixes to the last minor in the previous major version. For example, when Gradle 7 was the latest major version, several releases were made in the 6.x line, including Gradle 6.9 (and subsequent releases).

As such, each major Gradle release causes:

- The previous major version to become maintenance-only. It will only receive critical bug fixes and security fixes.

- The major version before the previous one to become end-of-life (EOL). That release line will not receive any new fixes.
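The major-version rules above can be summarized in a few lines of shell. This is an illustrative sketch only: `major_version_status` is a hypothetical name, and it classifies whole major lines. Within the current major line, only the latest minor release is not EOL, which this helper does not model.

```shell
# Hypothetical helper: classify a major version relative to the latest major.
# $1: latest major version, $2: major version to classify.
# Prints "current", "maintenance", or "end-of-life".
major_version_status() {
  latest=$1
  queried=$2
  if [ "$queried" -ge "$latest" ]; then
    echo current
  elif [ "$queried" -eq $((latest - 1)) ]; then
    echo maintenance      # critical bug fixes and security fixes only
  else
    echo end-of-life      # no new fixes
  fi
}
```

With Gradle 8 as the latest major version, `major_version_status 8 7` prints maintenance and `major_version_status 8 6` prints end-of-life.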

CORE CONCEPTS

Gradle Basics

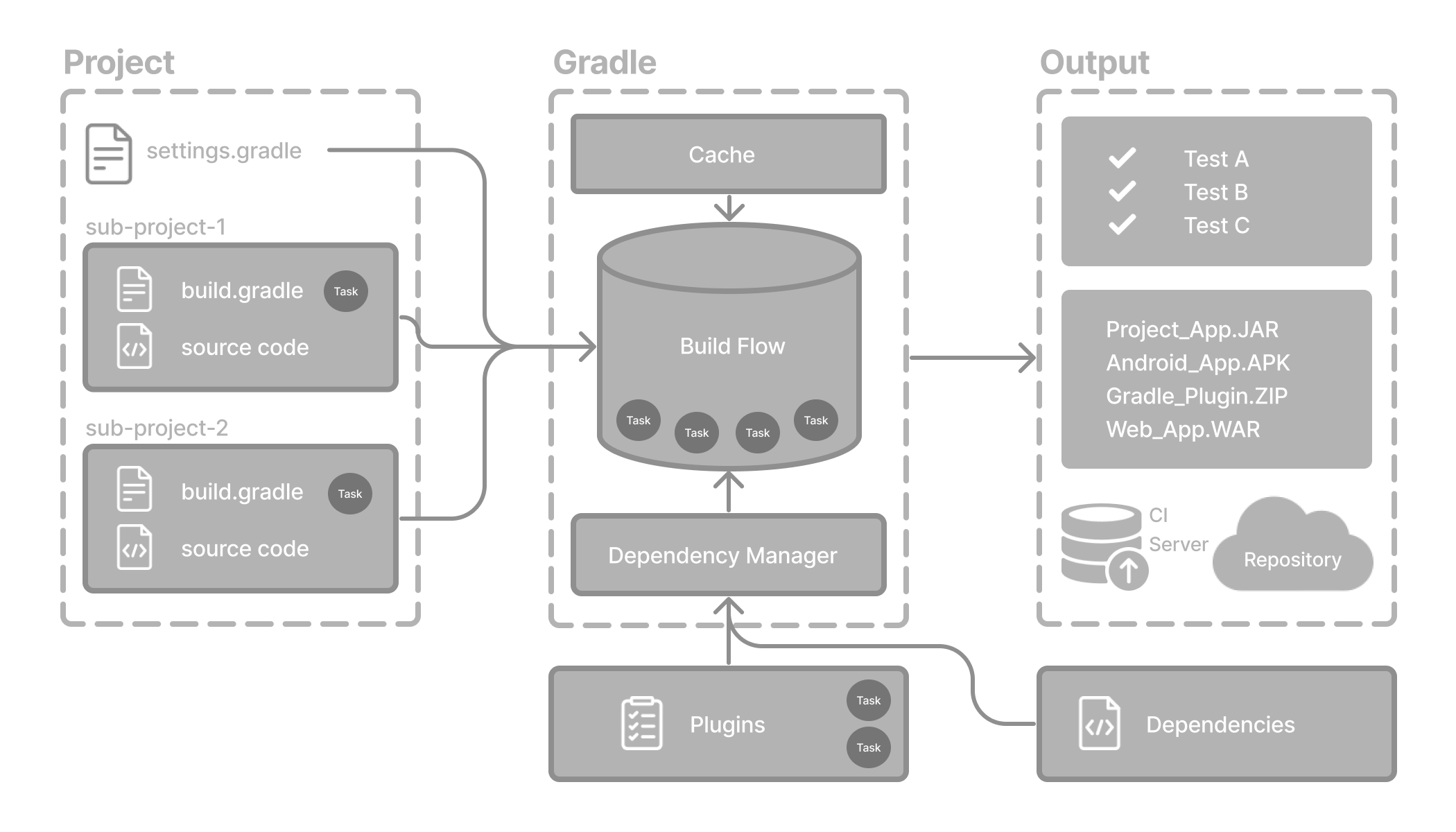

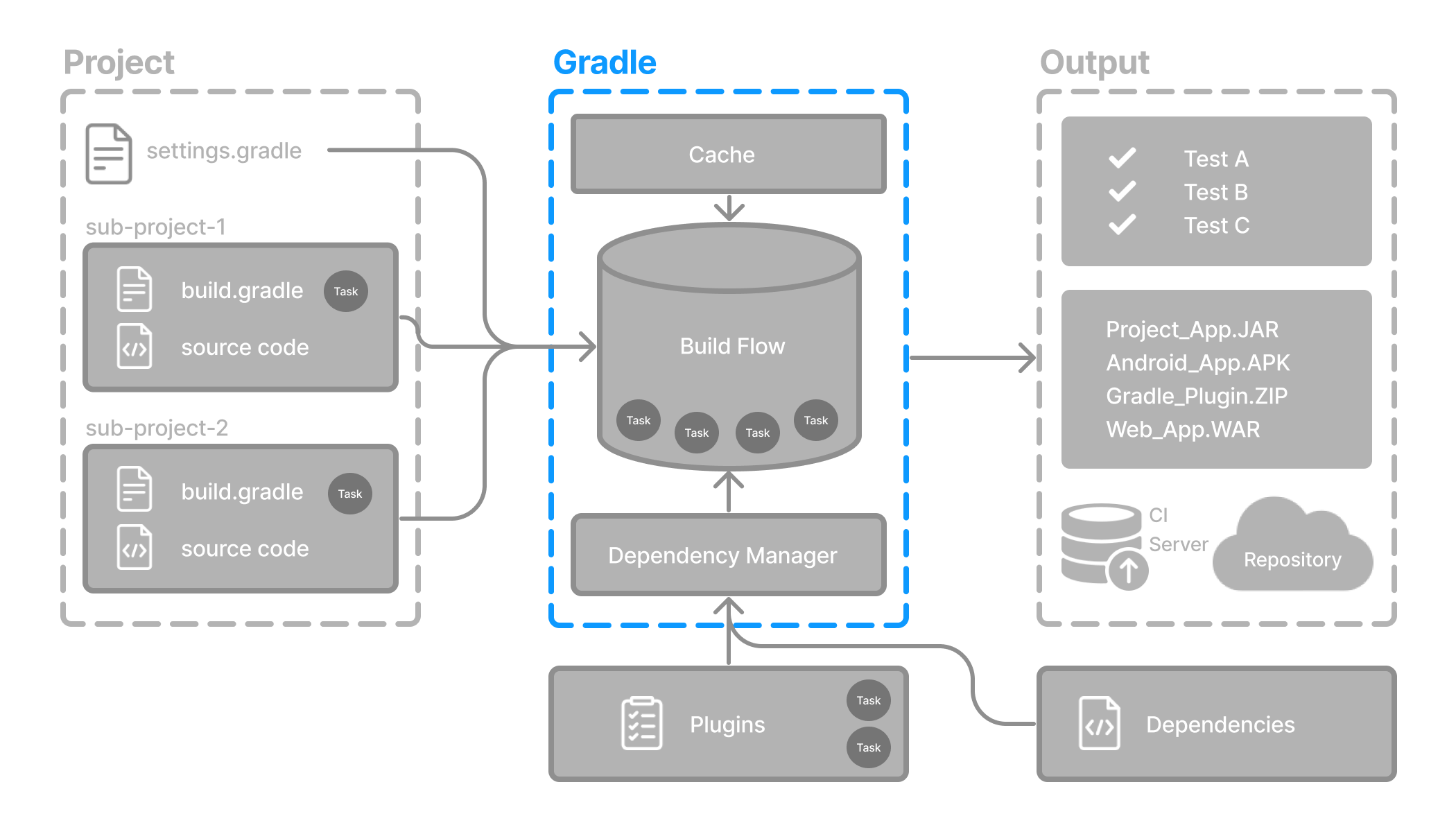

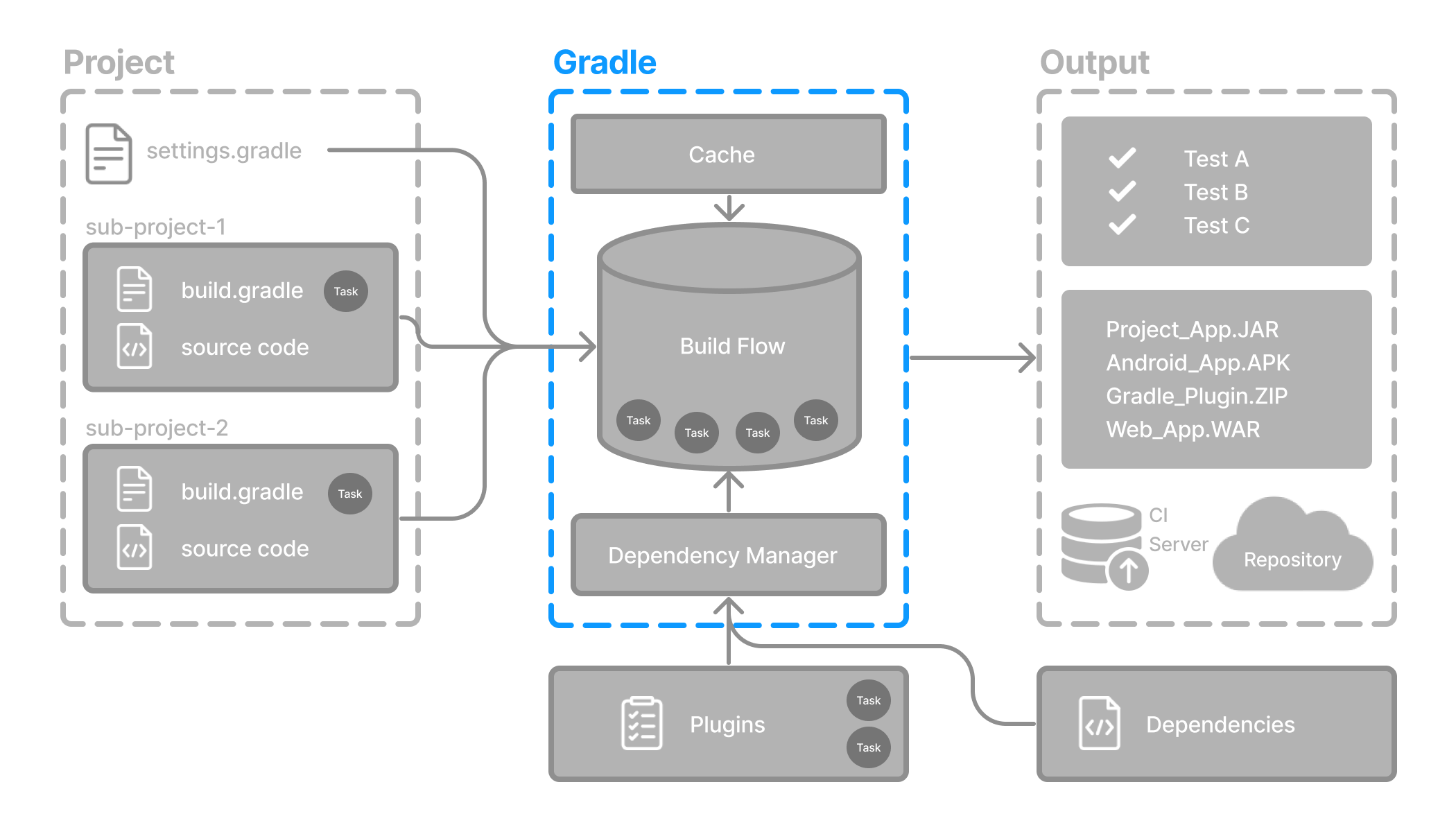

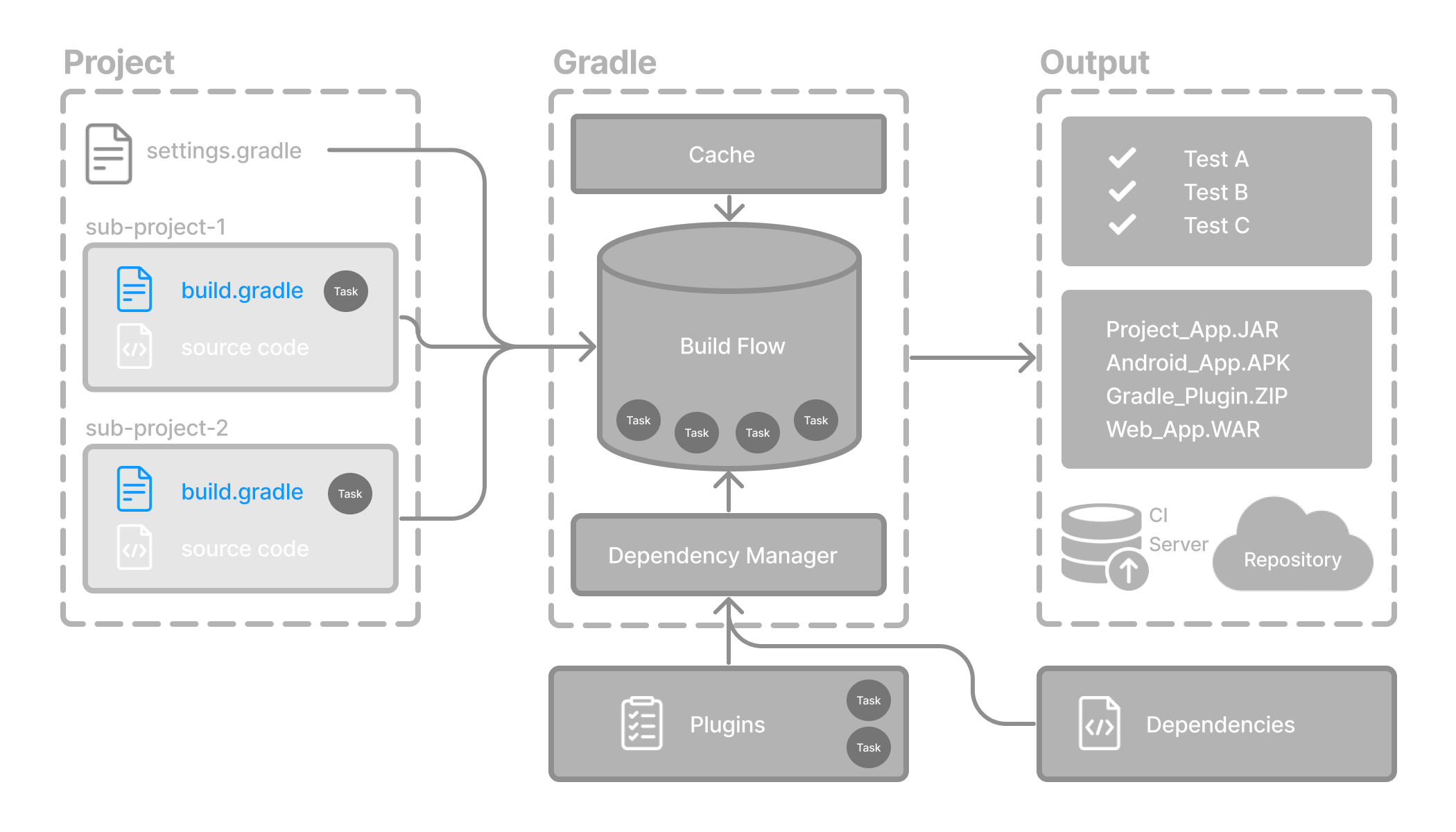

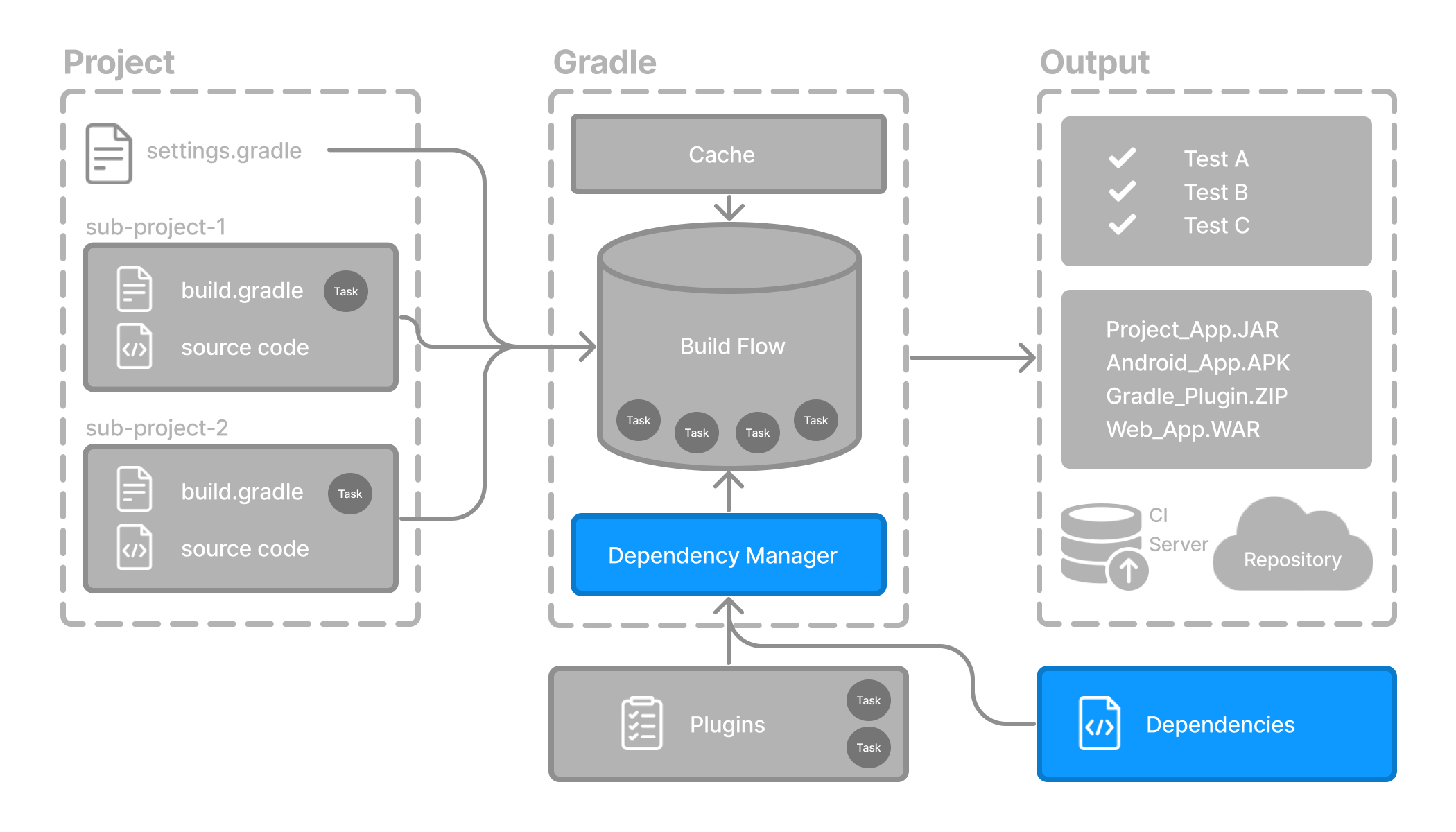

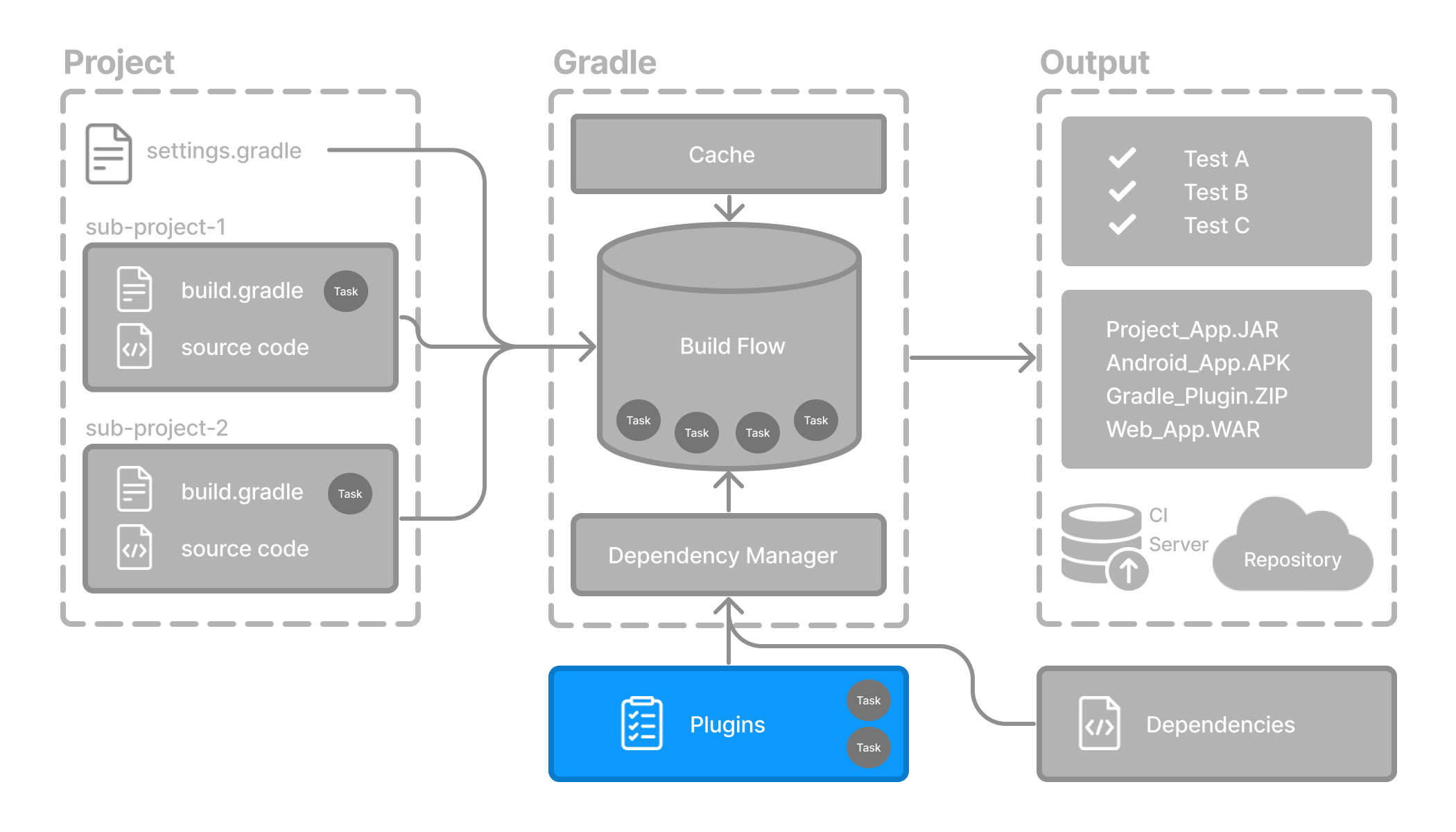

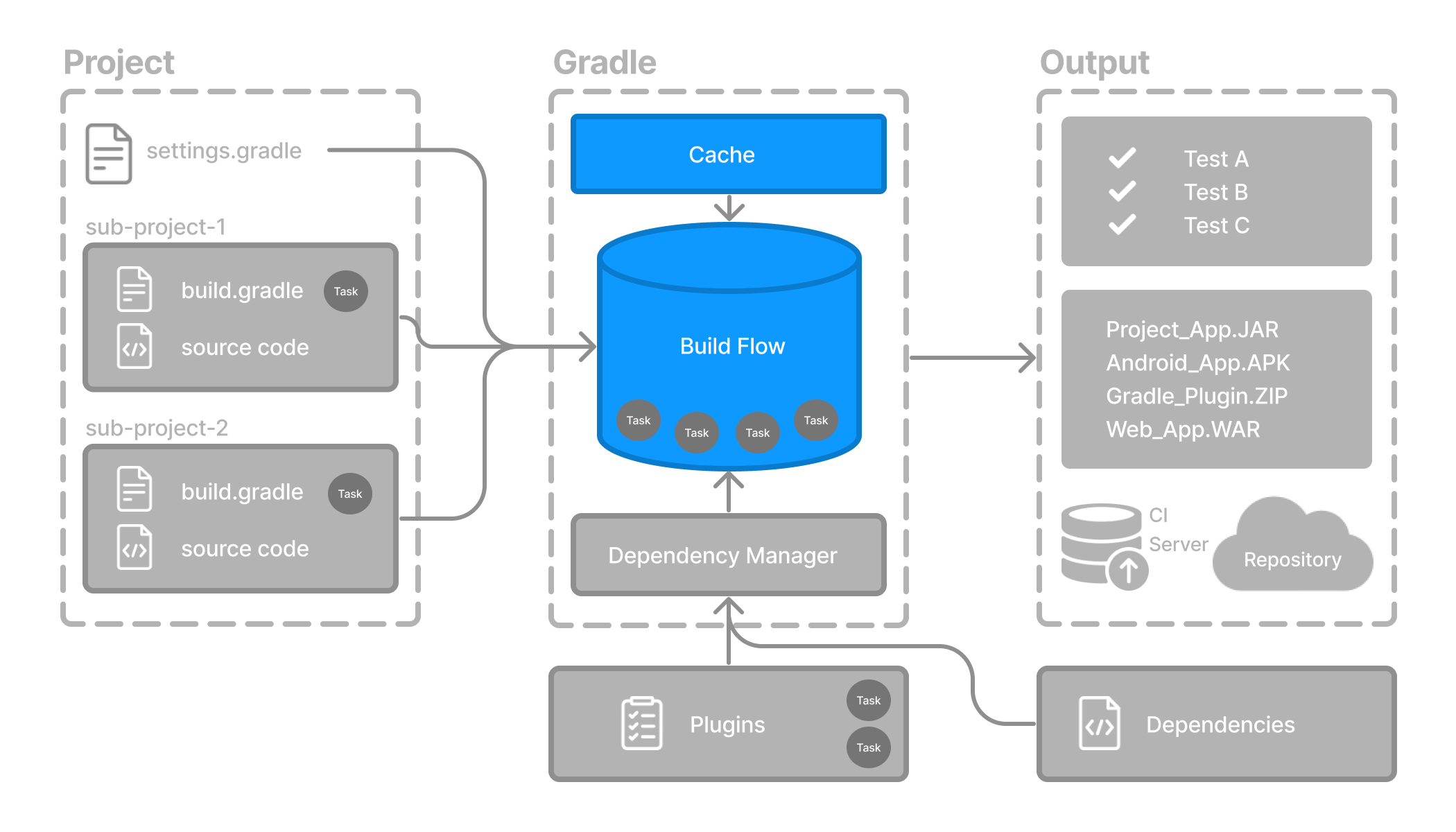

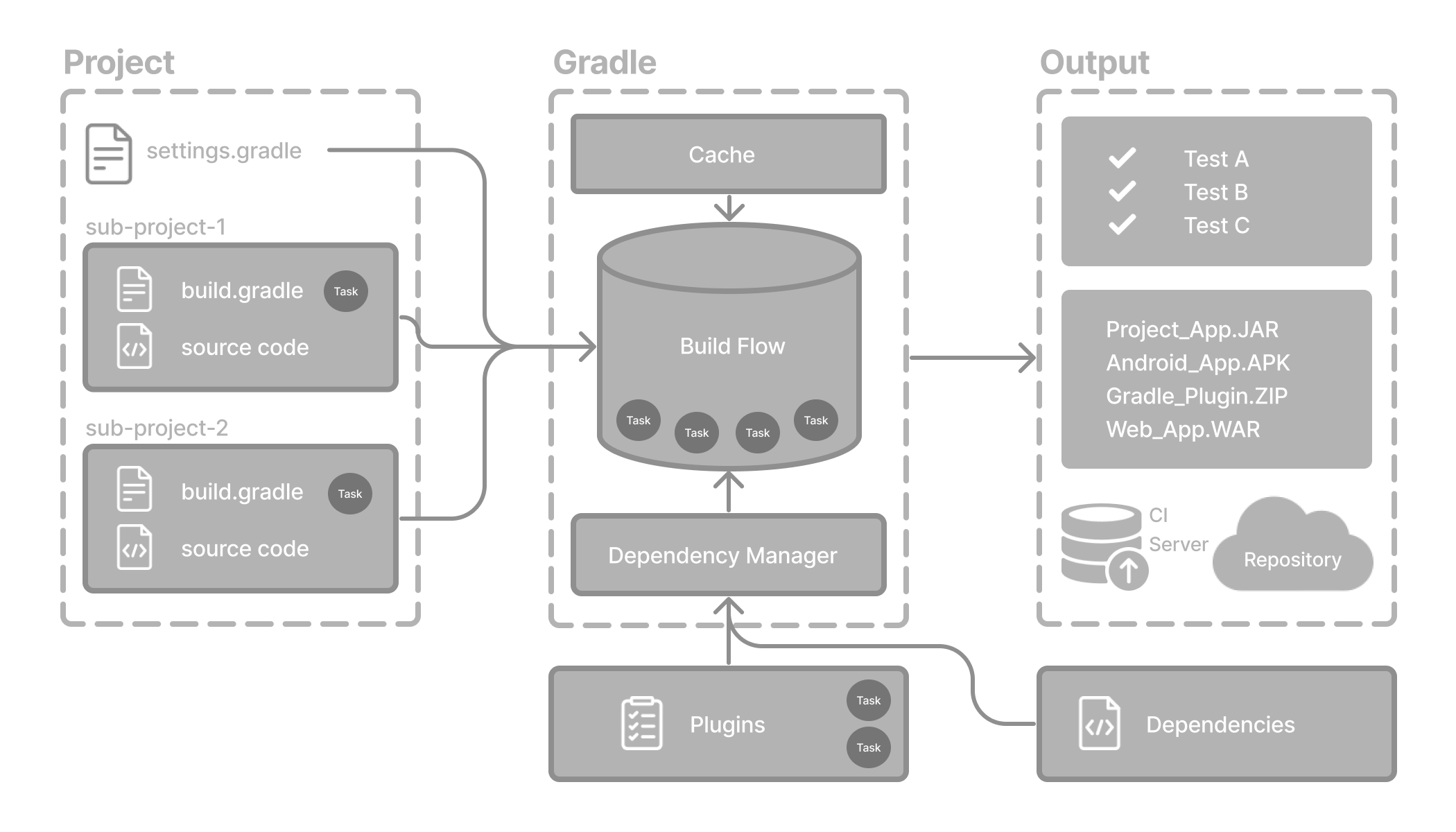

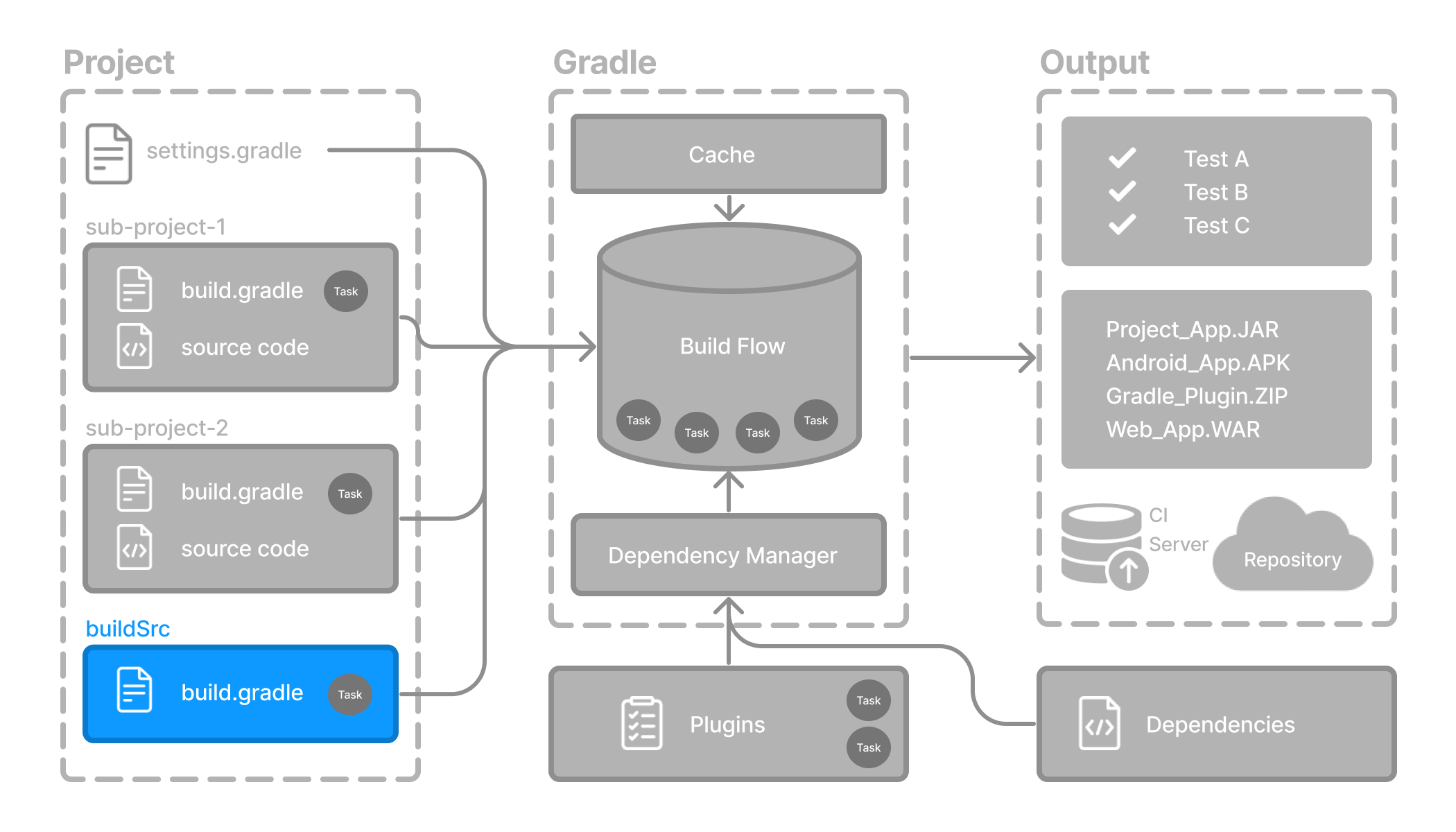

Gradle automates building, testing, and deployment of software from information in build scripts.

Gradle core concepts

Projects

A Gradle project is a piece of software that can be built, such as an application or a library.

Single project builds include a single project called the root project.

Multi-project builds include one root project and any number of subprojects.

Build Scripts

Build scripts tell Gradle what steps to take to build the project.

Each project can include one or more build scripts.

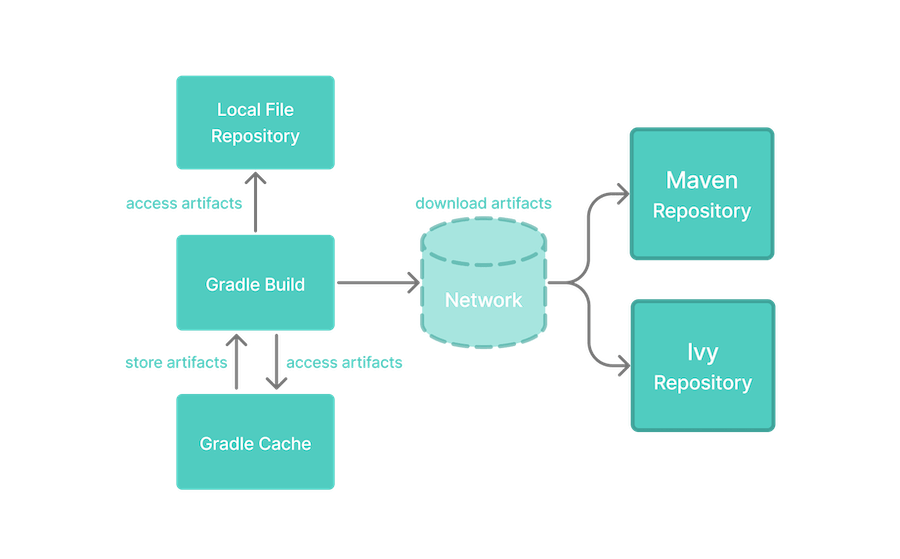

Dependency Management

Dependency management is an automated technique for declaring and resolving external resources required by a project.

Each project typically includes a number of external dependencies that Gradle will resolve during the build.

Tasks

A task is a basic unit of work, such as compiling code or running tests.

Each project contains one or more tasks defined inside a build script or a plugin.

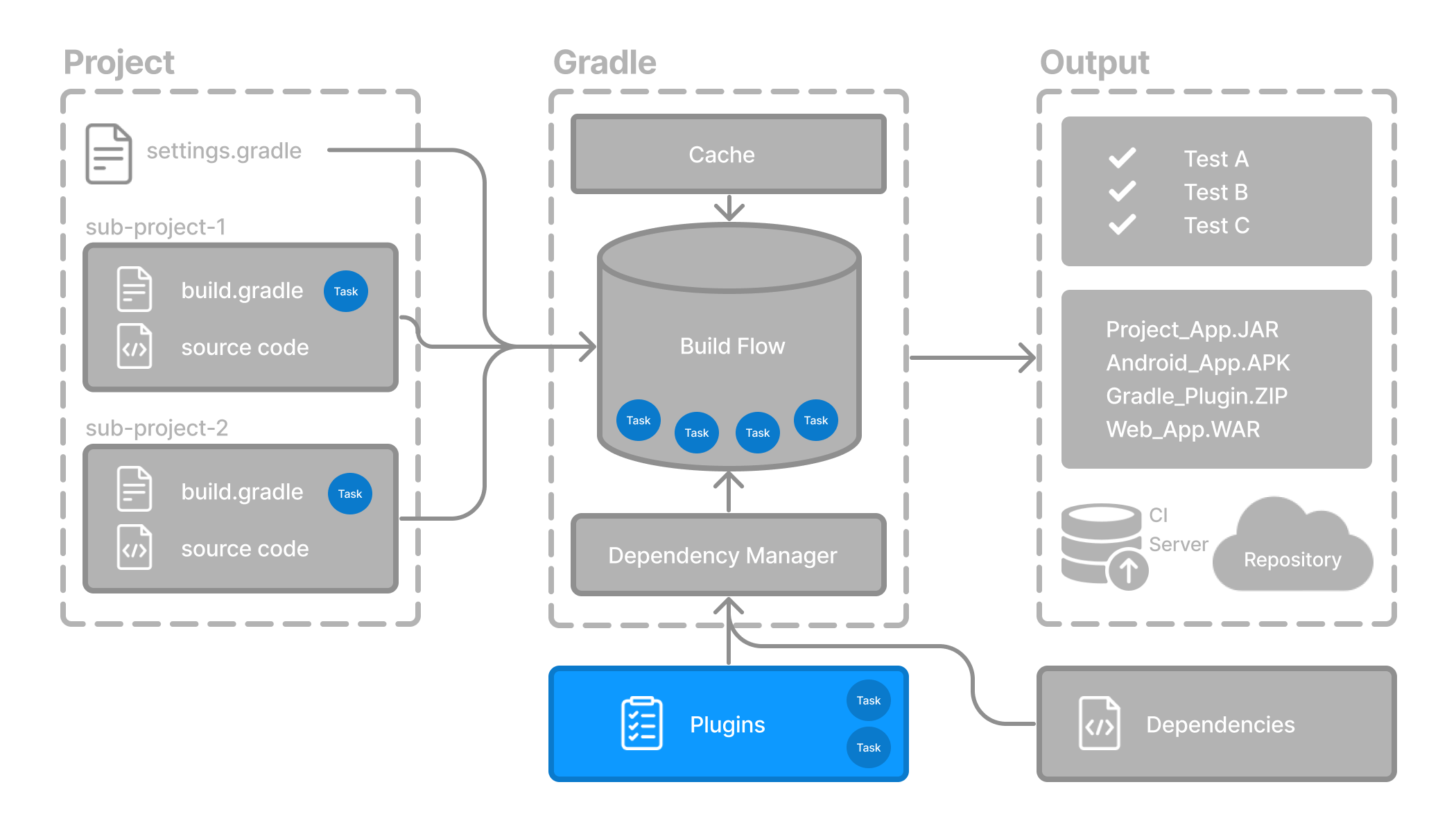

Plugins

Plugins are used to extend Gradle’s capability and optionally contribute tasks to a project.

Gradle project structure

Many developers will interact with Gradle for the first time through an existing project.

The presence of the gradlew and gradlew.bat files in the root directory of a project is a clear indicator that Gradle is used.

A Gradle project will look similar to the following:

    project
    ├── gradle                              // (1)
    │   ├── libs.versions.toml              // (2)
    │   └── wrapper
    │       ├── gradle-wrapper.jar
    │       └── gradle-wrapper.properties
    ├── gradlew                             // (3)
    ├── gradlew.bat                         // (3)
    ├── settings.gradle(.kts)               // (4)
    ├── subproject-a
    │   ├── build.gradle(.kts)              // (5)
    │   └── src                             // (6)
    └── subproject-b
        ├── build.gradle(.kts)              // (5)
        └── src                             // (6)

(1) Gradle directory to store wrapper files and more

(2) Gradle version catalog for dependency management

(3) Gradle wrapper scripts

(4) Gradle settings file to define a root project name and subprojects

(5) Gradle build scripts of the two subprojects, subproject-a and subproject-b

(6) Source code and/or additional files for the projects

Invoking Gradle

IDE

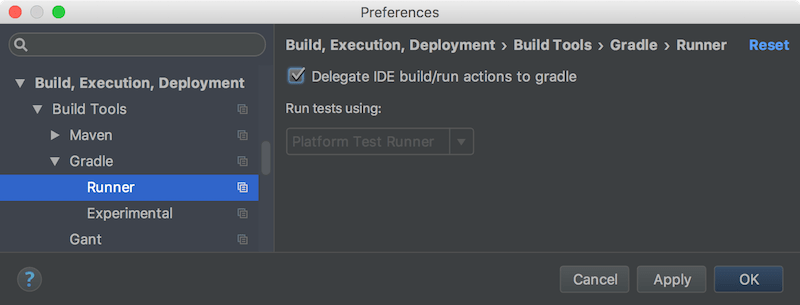

Gradle is built into many IDEs including Android Studio, IntelliJ IDEA, Visual Studio Code, Eclipse, and NetBeans.

Gradle can be automatically invoked when you build, clean, or run your app in the IDE.

It is recommended that you consult the manual for the IDE of your choice to learn more about how Gradle can be used and configured.

Command line

Gradle can be invoked in the command line once installed. For example:

    $ gradle build

Note: Most projects do not use the installed version of Gradle.

Gradle Wrapper

The Wrapper is a script that invokes a declared version of Gradle and is the recommended way to execute a Gradle build.

It is found in the project root directory as a gradlew or gradlew.bat file:

    $ ./gradlew build      // Linux or macOS
    $ gradlew.bat build    // Windows

Next Step: Learn about the Gradle Wrapper >>

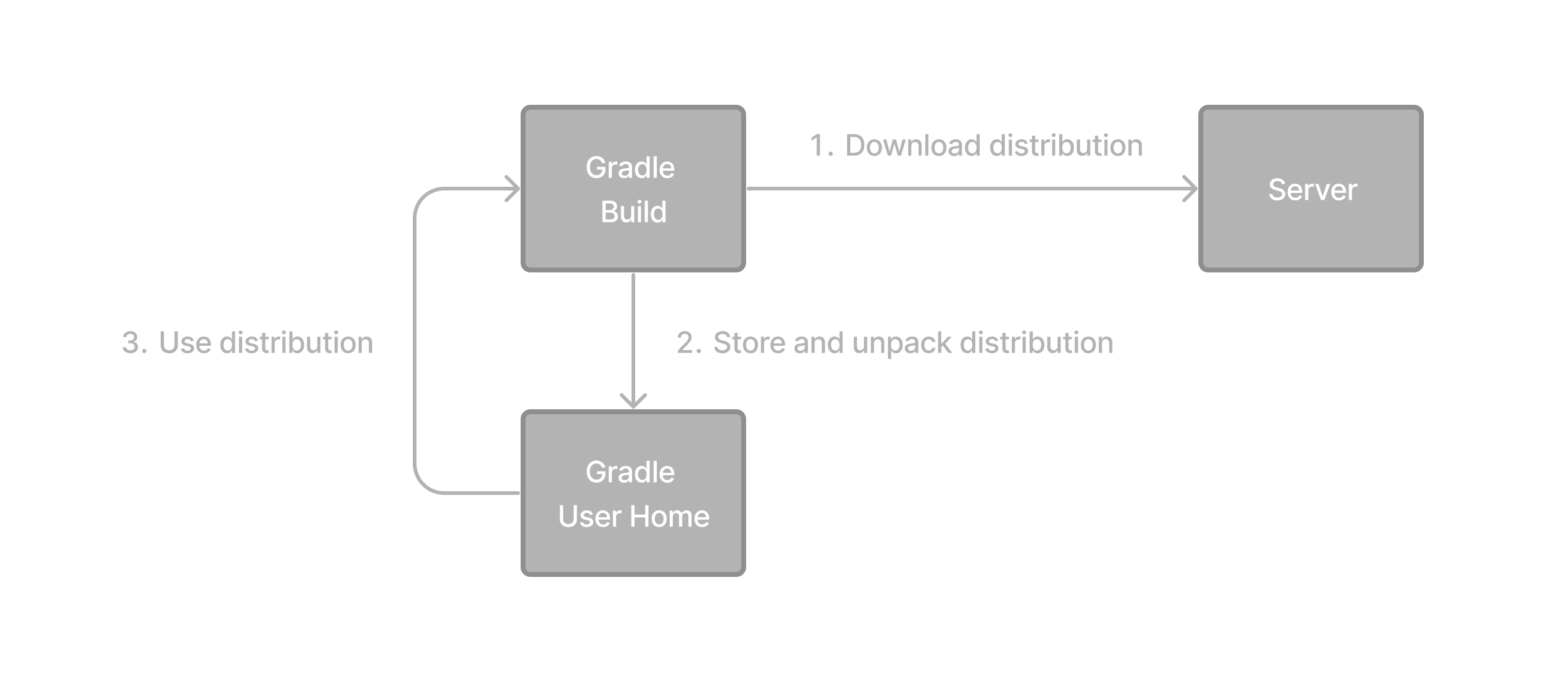

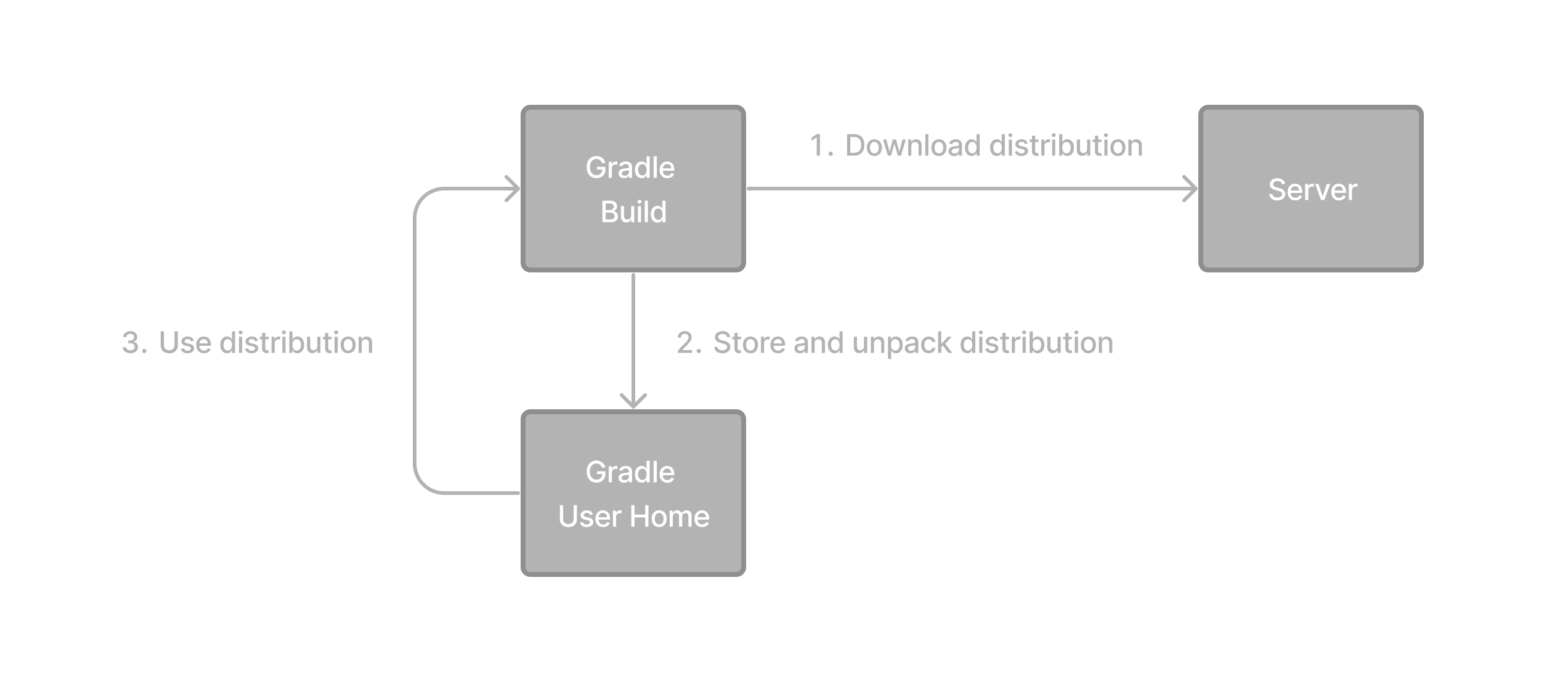

Gradle Wrapper Basics

The recommended way to execute any Gradle build is with the Gradle Wrapper.

The Wrapper script invokes a declared version of Gradle, downloading it beforehand if necessary.

The Wrapper is available as a gradlew or gradlew.bat file.

The Wrapper provides the following benefits:

- Standardizes a project on a given Gradle version.

- Provisions the same Gradle version for different users.

- Provisions the Gradle version for different execution environments (IDEs, CI servers…).

Using the Gradle Wrapper

It is always recommended to execute a build with the Wrapper to ensure a reliable, controlled, and standardized execution of the build.

Depending on the operating system, you run gradlew or gradlew.bat instead of the gradle command.

Typical Gradle invocation:

$ gradle build

To run the Wrapper on a Linux or macOS machine:

$ ./gradlew build

To run the Wrapper on Windows PowerShell:

$ .\gradlew.bat build

The command is run in the same directory that the Wrapper is located in. If you want to run the command in a different directory, you must provide the relative path to the Wrapper:

$ ../gradlew build
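When you work several directories below the project root, the relative path to the Wrapper changes with your location. The following sketch walks up the directory tree until it finds a gradlew script. The function name `find_gradlew` is hypothetical and not something Gradle provides.

```shell
# Hypothetical helper: print the path of the nearest gradlew script, searching
# the given directory (default: current directory) and then its parents.
find_gradlew() {
  search_dir=$(cd "${1:-.}" && pwd) || return 1
  while :; do
    if [ -f "$search_dir/gradlew" ]; then
      printf '%s\n' "$search_dir/gradlew"
      return 0
    fi
    # Stop at the filesystem root when nothing was found.
    [ "$search_dir" = "/" ] && return 1
    search_dir=$(dirname "$search_dir")
  done
}

# Usage sketch (runs the build only if a Wrapper was found):
#   wrapper=$(find_gradlew) && "$wrapper" build
```

The helper only locates the script. As with ../gradlew, Gradle still runs from your current directory.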

The following console output demonstrates the use of the Wrapper on a Windows machine, in the command prompt (cmd), for a Java-based project:

    $ gradlew.bat build
    Downloading https://services.gradle.org/distributions/gradle-5.0-all.zip
    .....................................................................................
    Unzipping C:\Documents and Settings\Claudia\.gradle\wrapper\dists\gradle-5.0-all\ac27o8rbd0ic8ih41or9l32mv\gradle-5.0-all.zip to C:\Documents and Settings\Claudia\.gradle\wrapper\dists\gradle-5.0-all\ac27o8rbd0ic8ih41or9l32mv
    Set executable permissions for: C:\Documents and Settings\Claudia\.gradle\wrapper\dists\gradle-5.0-all\ac27o8rbd0ic8ih41or9l32mv\gradle-5.0\bin\gradle

    BUILD SUCCESSFUL in 12s
    1 actionable task: 1 executed

Consult the Gradle Wrapper reference to learn more.

Next Step: Learn about the Gradle CLI >>

Command-Line Interface Basics

The command-line interface is the primary method of interacting with Gradle outside the IDE.

Use of the Gradle Wrapper is highly encouraged.

Substitute ./gradlew (in macOS / Linux) or gradlew.bat (in Windows) for gradle in the following examples.

Executing Gradle on the command line conforms to the following structure:

gradle [taskName...] [--option-name...]

Options are allowed before and after task names.

gradle [--option-name...] [taskName...]

If multiple tasks are specified, you should separate them with a space.

gradle [taskName1 taskName2...] [--option-name...]

Options that accept values can be specified with or without = between the option and argument. The use of = is recommended.

gradle [...] --console=plain

Options that enable behavior have long-form options with inverses specified with --no-. The following are opposites.

    gradle [...] --build-cache
    gradle [...] --no-build-cache

Many long-form options have short-option equivalents. The following are equivalent:

    gradle --help
    gradle -h

Command-line usage

The following sections describe the use of the Gradle command-line interface. Some plugins also add their own command line options.

Executing tasks

To execute a task called taskName on the root project, type:

$ gradle :taskName

This will run the single taskName and all of its dependencies.

Specify options for tasks

To pass an option to a task, prefix the option name with -- after the task name:

$ gradle taskName --exampleOption=exampleValue

Consult the Gradle Command Line Interface reference to learn more.

Next Step: Learn about the Settings file >>

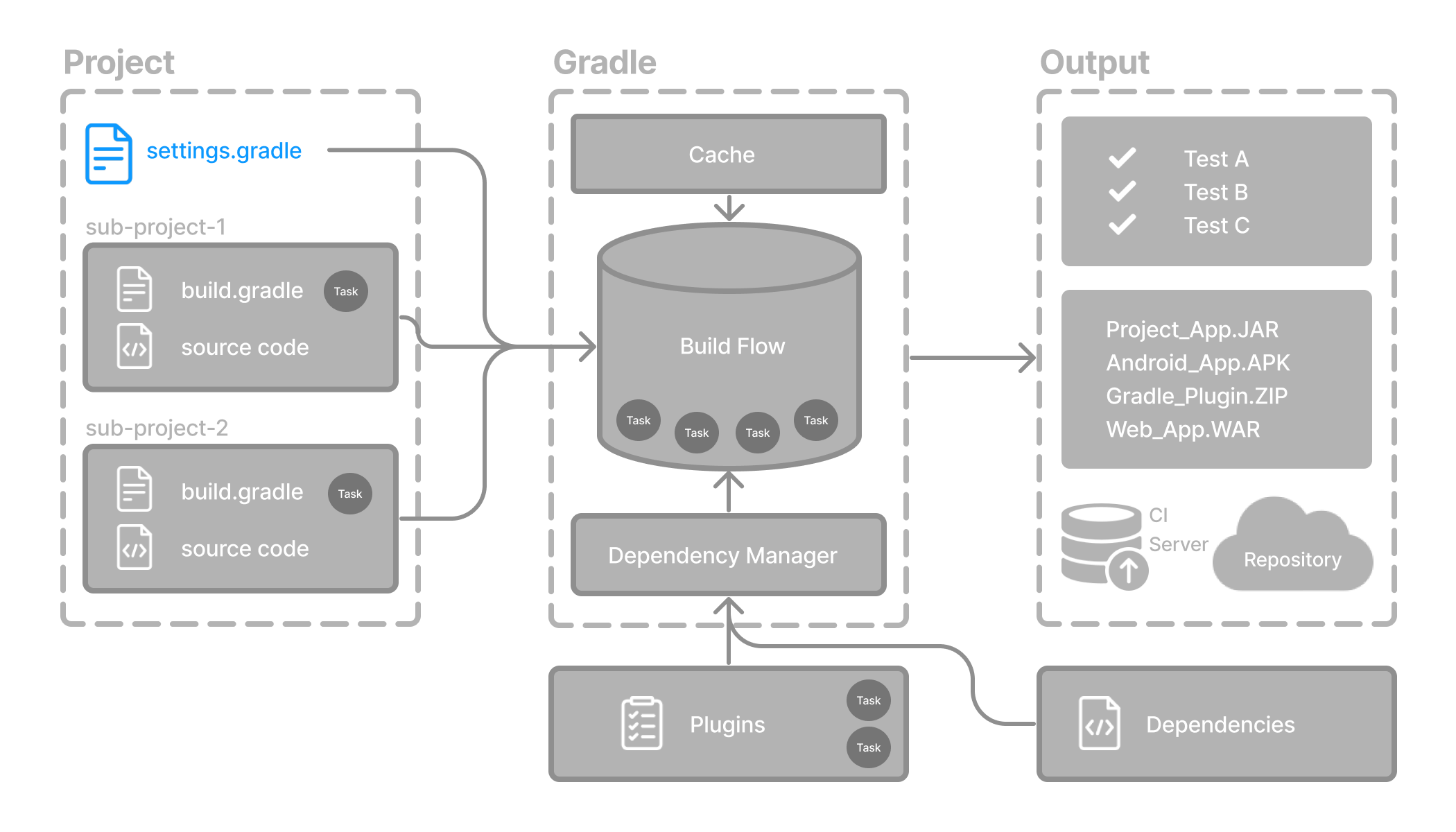

Settings File Basics

The settings file is the entry point of every Gradle project.

The primary purpose of the settings file is to add subprojects to your build.

Gradle supports single and multi-project builds.

- For single-project builds, the settings file is optional.

- For multi-project builds, the settings file is mandatory and declares all subprojects.

Settings script

The settings file is a script.

It is either a settings.gradle file written in Groovy or a settings.gradle.kts file in Kotlin.

The Groovy DSL and the Kotlin DSL are the only accepted languages for Gradle scripts.

The settings file is typically found in the root directory of the project.

Let’s take a look at an example and break it down:

Kotlin DSL (settings.gradle.kts):

    rootProject.name = "root-project"   // (1)

    include("sub-project-a")            // (2)
    include("sub-project-b")
    include("sub-project-c")

Groovy DSL (settings.gradle):

    rootProject.name = 'root-project'   // (1)

    include('sub-project-a')            // (2)
    include('sub-project-b')
    include('sub-project-c')

(1) Define the project name.

(2) Add subprojects.

1. Define the project name

The settings file defines your project name:

    rootProject.name = "root-project"

There is only one root project per build.

2. Add subprojects

The settings file defines the structure of the project by including subprojects, if there are any:

    include("app")
    include("business-logic")
    include("data-model")

Consult the Writing Settings File page to learn more.
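To make the shape of a settings file concrete, here is a rough sketch that prints a Kotlin DSL settings file from a root project name and a list of subprojects. The generator `print_settings_kts` is a hypothetical helper written for illustration. Gradle does not require or provide it, and real settings files are usually written by hand.

```shell
# Hypothetical generator: prints a Kotlin DSL settings file for a root
# project name ($1) followed by any number of subproject names.
print_settings_kts() {
  printf 'rootProject.name = "%s"\n' "$1"
  shift
  for sub in "$@"; do
    printf 'include("%s")\n' "$sub"
  done
}

# Usage sketch:
#   print_settings_kts root-project app business-logic data-model > settings.gradle.kts
```

The output mirrors the two responsibilities described above: one rootProject.name assignment, then one include(...) call per subproject.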

Next Step: Learn about the Build scripts >>

Build File Basics

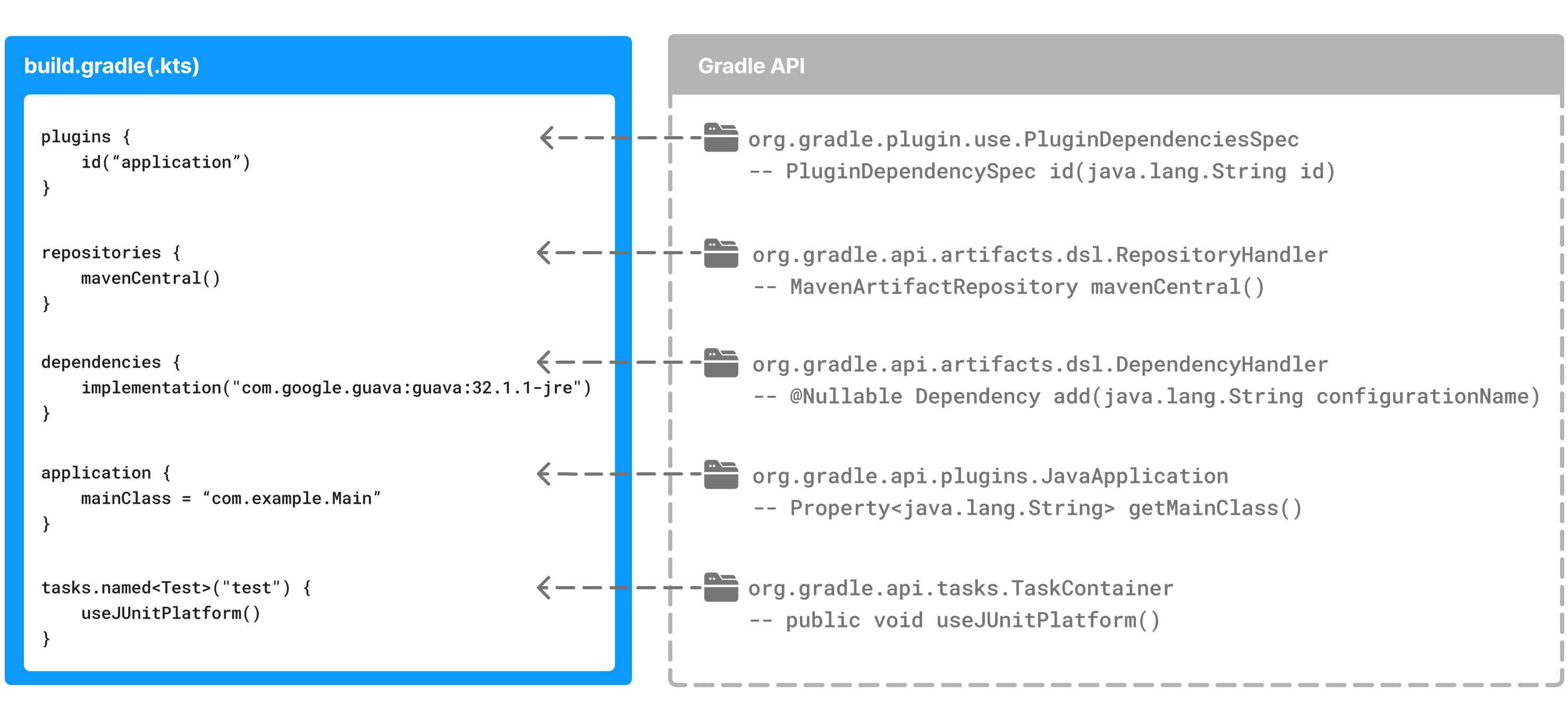

Generally, a build script details build configuration, tasks, and plugins.

Every Gradle build comprises at least one build script.

In the build file, two types of dependencies can be added:

- The libraries and/or plugins on which Gradle and the build script depend.

- The libraries on which the project sources (i.e., source code) depend.

Build scripts

The build script is either a build.gradle file written in Groovy or a build.gradle.kts file in Kotlin.

The Groovy DSL and the Kotlin DSL are the only accepted languages for Gradle scripts.

Let’s take a look at an example and break it down:

Kotlin DSL (build.gradle.kts):

    plugins {
        id("application")                   // (1)
    }

    application {
        mainClass = "com.example.Main"      // (2)
    }

Groovy DSL (build.gradle):

    plugins {
        id 'application'                    // (1)
    }

    application {
        mainClass = 'com.example.Main'      // (2)
    }

(1) Add plugins.

(2) Use convention properties.

1. Add plugins

Plugins extend Gradle’s functionality and can contribute tasks to a project.

Adding a plugin to a build is called applying a plugin and makes additional functionality available.

    plugins {
        id("application")
    }

The Application plugin facilitates creating an executable JVM application.

Applying the Application plugin also implicitly applies the Java plugin.

The Java plugin adds Java compilation along with testing and bundling capabilities to a project.

2. Use convention properties

A plugin adds tasks to a project. It also adds properties and methods to a project.

The application plugin defines tasks that package and distribute an application, such as the run task.

The Application plugin provides a way to declare the main class of a Java application, which is required to execute the code.

application {

mainClass = "com.example.Main"

}

In this example, the main class (i.e., the point where the program’s execution begins) is com.example.Main.

Consult the Writing Build Scripts page to learn more.

Next Step: Learn about Dependency Management >>

Dependency Management Basics

Gradle has built-in support for dependency management.

Dependency management is an automated technique for declaring and resolving external resources required by a project.

Gradle build scripts define the process to build projects that may require external dependencies. Dependencies refer to JARs, plugins, libraries, or source code that support building your project.

Version Catalog

Version catalogs provide a way to centralize your dependency declarations in a libs.versions.toml file.

The catalog makes sharing dependencies and version configurations between subprojects simple. It also allows teams to enforce versions of libraries and plugins in large projects.

The version catalog typically contains four sections:

- [versions] to declare the version numbers that plugins and libraries will reference.

- [libraries] to define the libraries used in the build files.

- [bundles] to define a set of dependencies.

- [plugins] to define plugins.

[versions]

androidGradlePlugin = "7.4.1"

mockito = "2.16.0"

[libraries]

googleMaterial = { group = "com.google.android.material", name = "material", version = "1.1.0-alpha05" }

mockitoCore = { module = "org.mockito:mockito-core", version.ref = "mockito" }

[plugins]

androidApplication = { id = "com.android.application", version.ref = "androidGradlePlugin" }

The file is located in the gradle directory so that it can be used by Gradle and IDEs automatically.

The version catalog should be checked into source control: gradle/libs.versions.toml.

Declaring Your Dependencies

To add a dependency to your project, specify a dependency in the dependencies block of your build.gradle(.kts) file.

The following build.gradle.kts file adds a plugin and two dependencies to the project using the version catalog above:

plugins {

alias(libs.plugins.androidApplication) // (1)

}

dependencies {

// Dependency on a remote binary to compile and run the code

implementation(libs.googleMaterial) // (2)

// Dependency on a remote binary to compile and run the test code

testImplementation(libs.mockitoCore) // (3)

}

(1) Applies the Android Gradle plugin to this project, which adds several features that are specific to building Android apps.

(2) Adds the Material dependency to the project. Material Design provides components for creating a user interface in an Android App. This library will be used to compile and run the Kotlin source code in this project.

(3) Adds the Mockito dependency to the project. Mockito is a mocking framework for testing Java code. This library will be used to compile and run the test source code in this project.

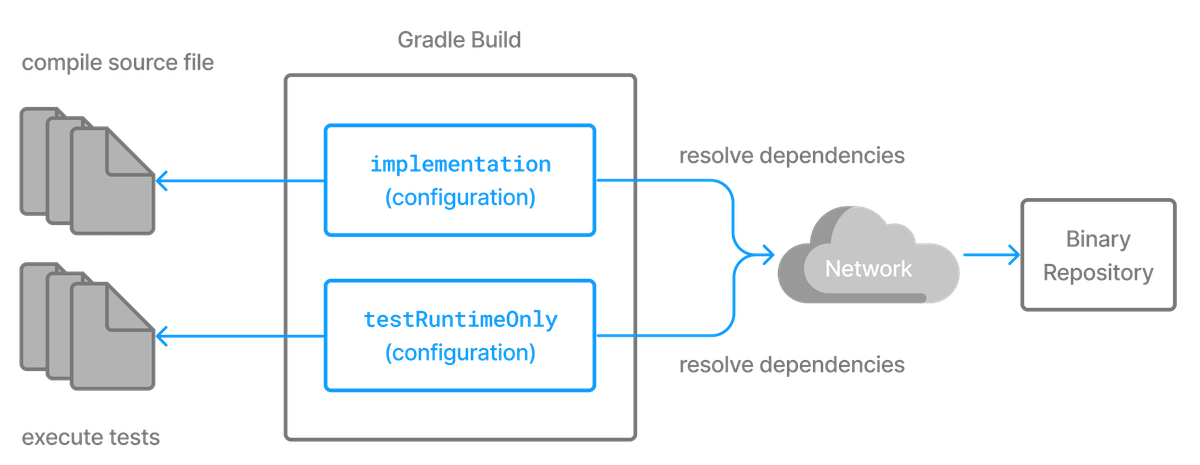

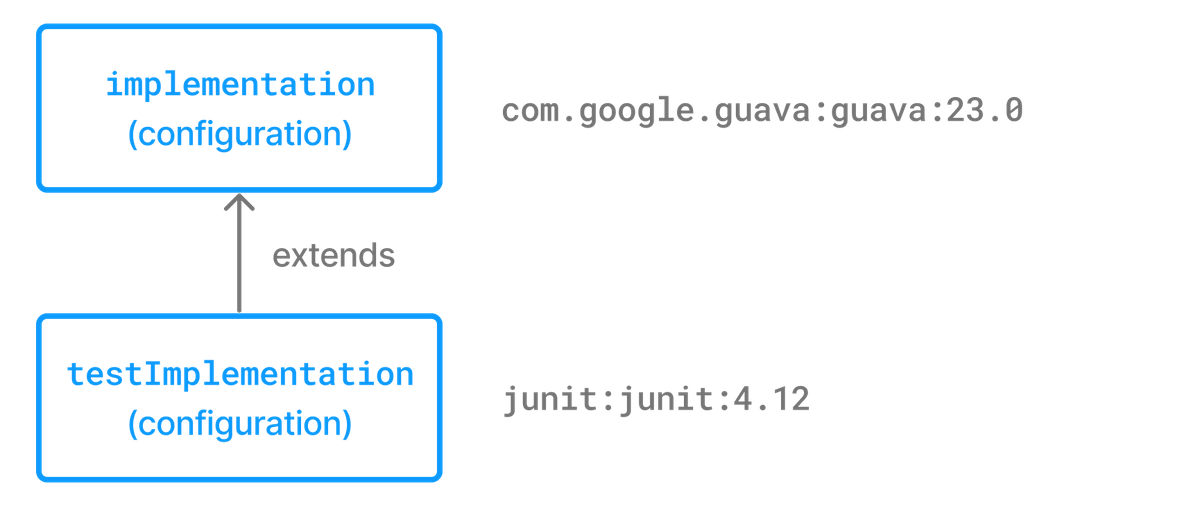

Dependencies in Gradle are grouped by configurations.

- The material library is added to the implementation configuration, which is used for compiling and running production code.

- The mockito-core library is added to the testImplementation configuration, which is used for compiling and running test code.

Note: There are many more configurations available.
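As a rough illustration of how configurations group dependencies, the same two libraries could also be declared by their coordinates instead of through the version catalog. This is only a sketch: it assumes the plugin from the example above is already applied and that repositories hosting both artifacts are declared elsewhere in the build.

```kotlin
// build.gradle.kts (sketch, not a complete build file)
// Assumption: a plugin that creates the 'implementation' and
// 'testImplementation' configurations is already applied.
dependencies {
    // Goes on the compile and runtime classpath of the production code
    implementation("com.google.android.material:material:1.1.0-alpha05")

    // Goes on the compile and runtime classpath of the test code only
    testImplementation("org.mockito:mockito-core:2.16.0")
}
```

The coordinates are the ones recorded in the catalog above. The catalog form keeps the versions in one place, which is why it is usually preferable in multi-project builds.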

Viewing Project Dependencies

You can view your dependency tree in the terminal using the ./gradlew :app:dependencies command:

$ ./gradlew :app:dependencies

> Task :app:dependencies

------------------------------------------------------------

Project ':app'

------------------------------------------------------------

implementation - Implementation only dependencies for source set 'main'. (n)

\--- com.google.android.material:material:1.1.0-alpha05 (n)

testImplementation - Implementation only dependencies for source set 'test'. (n)

\--- org.mockito:mockito-core:2.16.0 (n)

...

Consult the Dependency Management chapter to learn more.

Next Step: Learn about Tasks >>

Task Basics

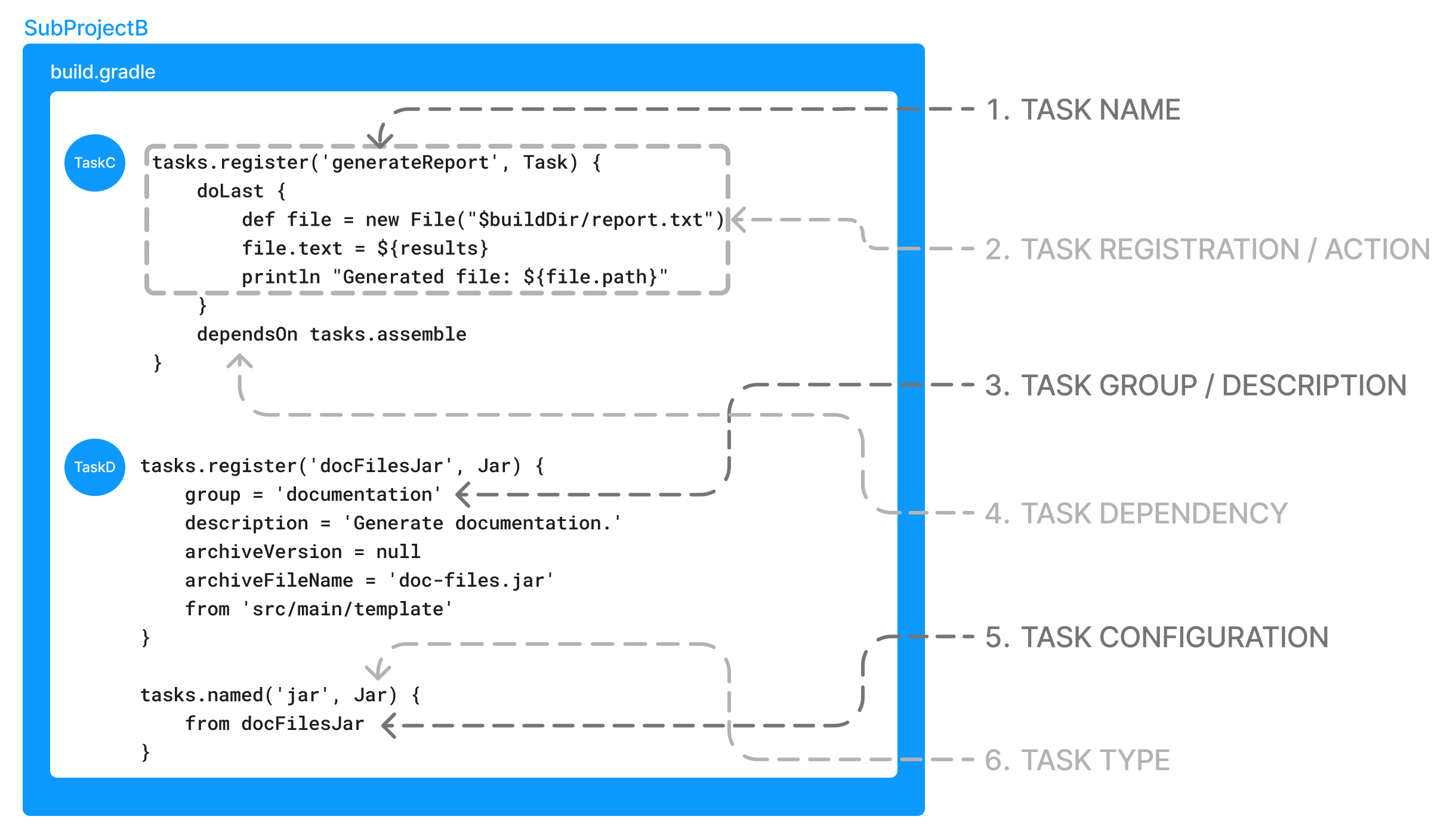

A task represents some independent unit of work that a build performs, such as compiling classes, creating a JAR, generating Javadoc, or publishing archives to a repository.

You run a Gradle build task using the gradle command or by invoking the Gradle Wrapper (./gradlew or gradlew.bat) in your project directory:

$ ./gradlew build

Available tasks

All available tasks in your project come from Gradle plugins and build scripts.

You can list all the available tasks in the project by running the following command in the terminal:

$ ./gradlew tasks

Application tasks

-----------------

run - Runs this project as a JVM application

Build tasks

-----------

assemble - Assembles the outputs of this project.

build - Assembles and tests this project.

...

Documentation tasks

-------------------

javadoc - Generates Javadoc API documentation for the main source code.

...

Other tasks

-----------

compileJava - Compiles main Java source.

...

Running tasks

The run task is executed with ./gradlew run:

$ ./gradlew run

> Task :app:compileJava

> Task :app:processResources NO-SOURCE

> Task :app:classes

> Task :app:run

Hello World!

BUILD SUCCESSFUL in 904ms

2 actionable tasks: 2 executed

In this example Java project, the output of the run task is a Hello World statement printed on the console.

Task dependency

Many times, a task requires another task to run first.

For example, for Gradle to execute the build task, the Java code must first be compiled.

Thus, the build task depends on the compileJava task.

This means that the compileJava task will run before the build task:

$ ./gradlew build

> Task :app:compileJava

> Task :app:processResources NO-SOURCE

> Task :app:classes

> Task :app:jar

> Task :app:startScripts

> Task :app:distTar

> Task :app:distZip

> Task :app:assemble

> Task :app:compileTestJava

> Task :app:processTestResources NO-SOURCE

> Task :app:testClasses

> Task :app:test

> Task :app:check

> Task :app:build

BUILD SUCCESSFUL in 764ms

7 actionable tasks: 7 executed

Build scripts can optionally define task dependencies. Gradle then automatically determines the task execution order.
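A minimal sketch of declaring a task dependency in a build script follows. The task names compileDocs and packageDocs are hypothetical and exist only for this illustration.

```kotlin
// build.gradle.kts (sketch with hypothetical task names)

// Register a task that does some preparatory work
val compileDocs = tasks.register("compileDocs") {
    doLast {
        println("Compiling docs")
    }
}

// Register a second task and declare that it depends on the first
tasks.register("packageDocs") {
    dependsOn(compileDocs)
    doLast {
        println("Packaging docs")
    }
}
```

With this in place, running ./gradlew packageDocs should cause Gradle to execute compileDocs first, because the dependency is part of the task graph rather than the order in which the tasks were written.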

Consult the Task development chapter to learn more.

Next Step: Learn about Plugins >>

Plugin Basics

Gradle is built on a plugin system. Gradle itself is primarily composed of infrastructure, such as a sophisticated dependency resolution engine. The rest of its functionality comes from plugins.

A plugin is a piece of software that provides additional functionality to the Gradle build system.

Plugins can be applied to a Gradle build script to add new tasks, configurations, or other build-related capabilities:

- The Java Library Plugin - java-library - Used to define and build Java libraries. It compiles Java source code with the compileJava task, generates Javadoc with the javadoc task, and packages the compiled classes into a JAR file with the jar task.

- The Google Services Gradle Plugin - com.google.gms:google-services - Enables Google APIs and Firebase services in your Android application with a configuration block called googleServices{} and a task called generateReleaseAssets.

- The Gradle Bintray Plugin - com.jfrog.bintray - Allows you to publish artifacts to Bintray by configuring the plugin using the bintray{} block.

Plugin distribution

Plugins are distributed in three ways:

-

Core plugins - Gradle develops and maintains a set of Core Plugins.

-

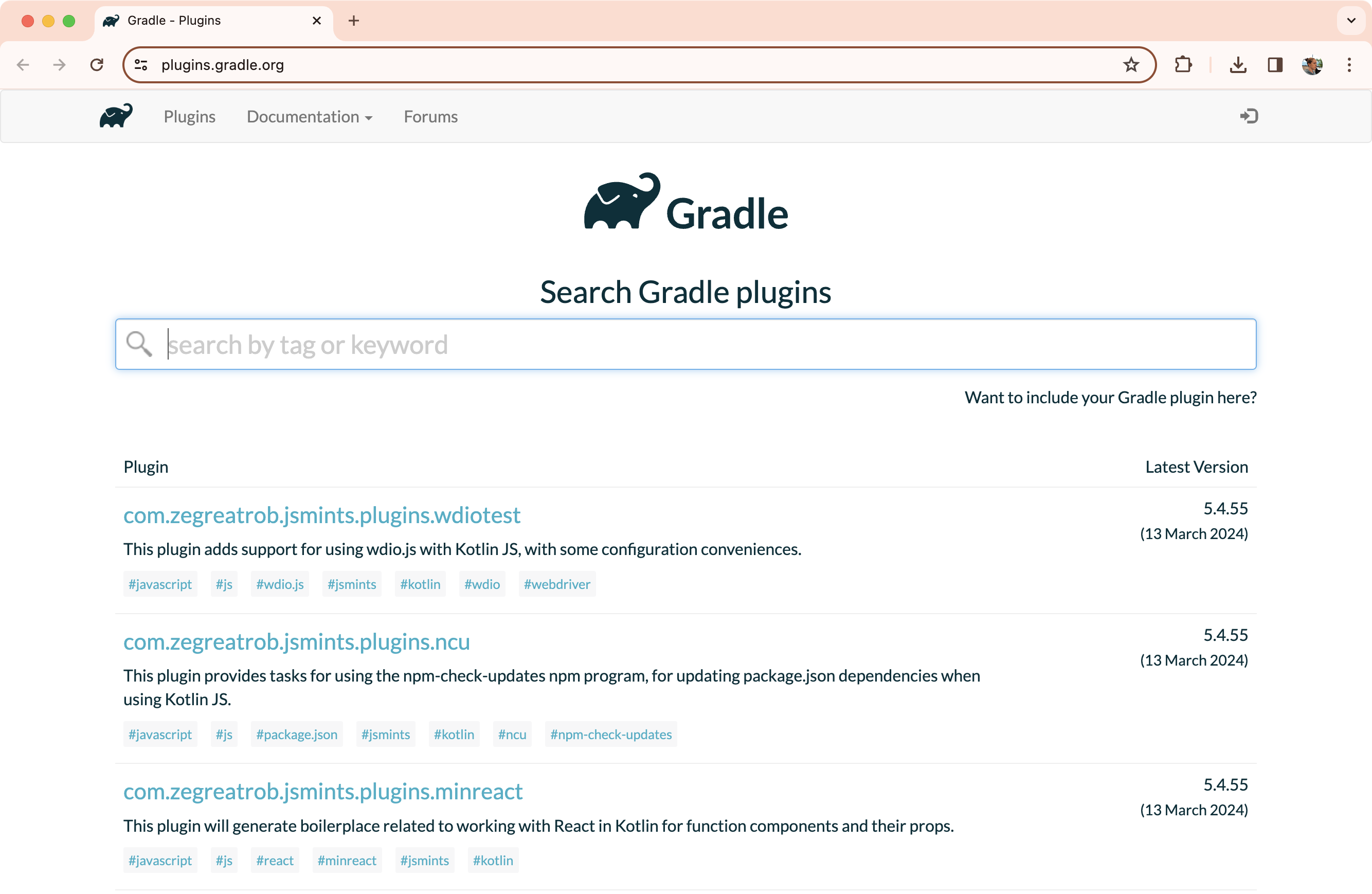

Community plugins - Gradle’s community shares plugins via the Gradle Plugin Portal.

-

Local plugins - Gradle enables users to create custom plugins using APIs.

Applying plugins

Applying a plugin to a project allows the plugin to extend the project’s capabilities.

You apply plugins in the build script using a plugin id (a globally unique identifier / name) and a version:

plugins {

id «plugin id» version «plugin version»

}

1. Core plugins

Gradle Core plugins are a set of plugins that are included in the Gradle distribution itself. These plugins provide essential functionality for building and managing projects.

Some examples of core plugins include:

- java: Provides support for building Java projects.

- groovy: Adds support for compiling and testing Groovy source files.

- ear: Adds support for building EAR files for enterprise applications.

Core plugins are unique in that they provide short names, such as java for the core JavaPlugin, when applied in build scripts.

They also do not require versions.

To apply the java plugin to a project:

plugins {

id("java")

}

There are many Gradle Core Plugins users can take advantage of.

2. Community plugins

Community plugins are plugins developed by the Gradle community, rather than being part of the core Gradle distribution. These plugins provide additional functionality that may be specific to certain use cases or technologies.

The Spring Boot Gradle plugin packages executable JAR or WAR archives, and runs Spring Boot Java applications.

To apply the org.springframework.boot plugin to a project:

plugins {

id("org.springframework.boot") version "3.1.5"

}

Community plugins can be published at the Gradle Plugin Portal, where other Gradle users can easily discover and use them.

3. Local plugins

Custom or local plugins are developed and used within a specific project or organization. These plugins are not shared publicly and are tailored to the specific needs of the project or organization.

Local plugins can encapsulate common build logic, provide integrations with internal systems or tools, or abstract complex functionality into reusable components.

Gradle provides users with the ability to develop custom plugins using APIs. To create your own plugin, you’ll typically follow these steps:

- Define the plugin class: create a new class that implements the Plugin<Project> interface.

// Define a 'HelloPlugin' plugin

class HelloPlugin : Plugin<Project> {

    override fun apply(project: Project) {

        // Define the 'hello' task

        val helloTask = project.tasks.register("hello") {

            doLast {

                println("Hello, Gradle!")

            }

        }

    }

}

- Build and optionally publish your plugin: generate a JAR file containing your plugin code and optionally publish this JAR to a repository (local or remote) to be used in other projects.

// Publish the plugin

plugins {

    `maven-publish`

}

publishing {

    publications {

        create<MavenPublication>("mavenJava") {

            from(components["java"])

        }

    }

    repositories {

        mavenLocal()

    }

}

- Apply your plugin: when you want to use the plugin, include the plugin ID and version in the plugins{} block of the build file.

// Apply the plugin

plugins {

    id("com.example.hello") version "1.0"

}

Consult the Plugin development chapter to learn more.

Next Step: Learn about Incremental Builds and Build Caching >>

Gradle Incremental Builds and Build Caching

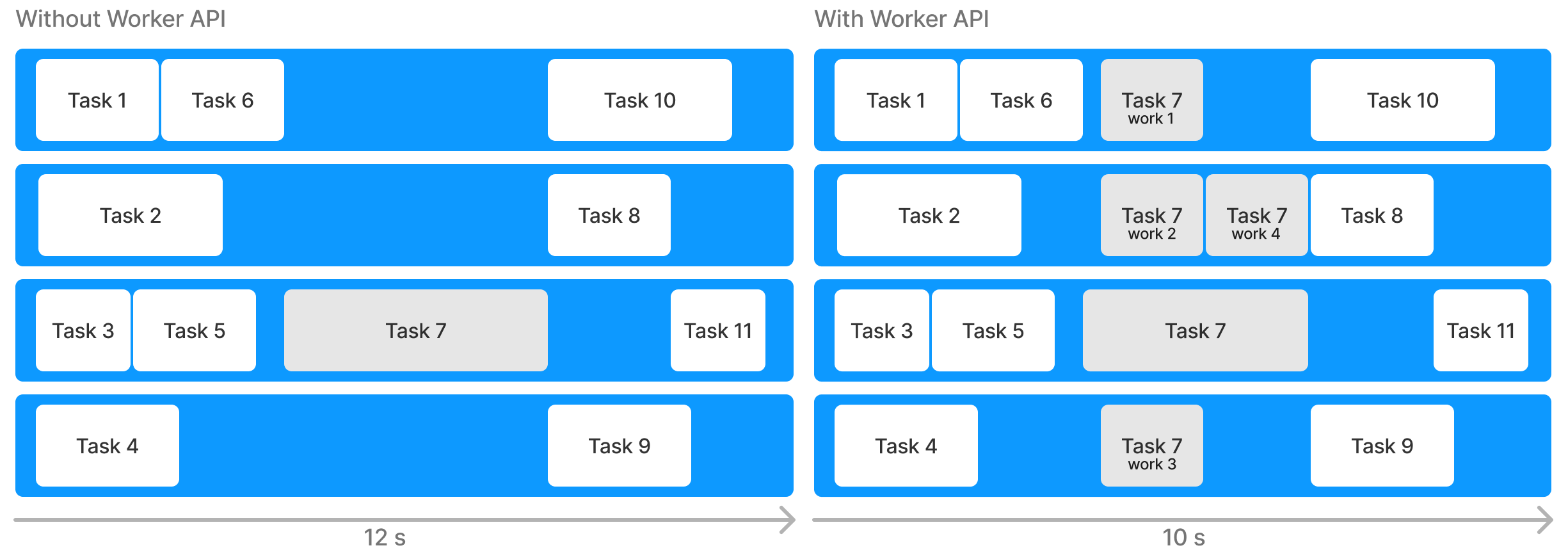

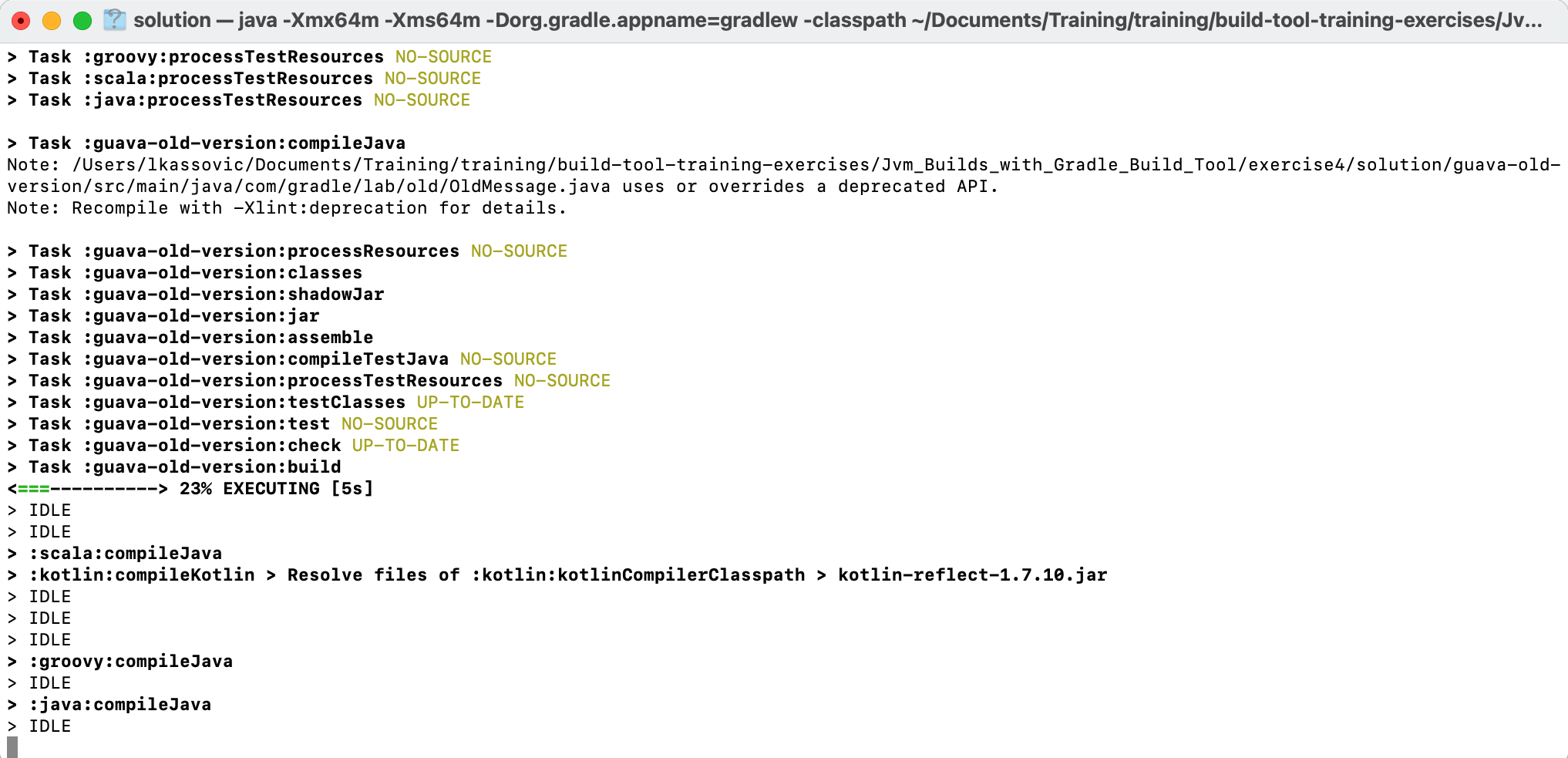

Gradle uses two main features to reduce build time: incremental builds and build caching.

Incremental builds

An incremental build is a build that avoids running tasks whose inputs have not changed since the previous build. Re-executing such tasks is unnecessary if they would only re-produce the same output.

For incremental builds to work, tasks must define their inputs and outputs. Gradle will determine whether the inputs or outputs have changed at build time. If they have changed, Gradle will execute the task. Otherwise, it will skip execution.
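To make the idea of declared inputs and outputs concrete, here is a small sketch of a custom task. The task type CountLines, the task name countLines, and both file paths are hypothetical and chosen only for illustration.

```kotlin
// build.gradle.kts (sketch with a hypothetical task and hypothetical file names)
// The types used here come from Gradle's default script imports.

abstract class CountLines : DefaultTask() {

    // Declared input: Gradle fingerprints this file between builds
    @get:InputFile
    abstract val source: RegularFileProperty

    // Declared output: Gradle tracks this file for up-to-date checks
    @get:OutputFile
    abstract val report: RegularFileProperty

    @TaskAction
    fun count() {
        // Read the input file and write its line count to the output file
        val lines = source.get().asFile.readLines().size
        report.get().asFile.writeText("$lines\n")
    }
}

tasks.register<CountLines>("countLines") {
    source.set(layout.projectDirectory.file("notes.txt"))
    report.set(layout.buildDirectory.file("reports/line-count.txt"))
}
```

Assuming notes.txt exists, the first ./gradlew countLines run executes the task. A second run with no change to notes.txt or the report should be skipped as UP-TO-DATE, and editing notes.txt should cause the task to execute again.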

Incremental builds are always enabled, and the best way to see them in action is to turn on verbose mode. With verbose mode, each task state is labeled during a build:

$ ./gradlew compileJava --console=verbose

> Task :buildSrc:generateExternalPluginSpecBuilders UP-TO-DATE

> Task :buildSrc:extractPrecompiledScriptPluginPlugins UP-TO-DATE

> Task :buildSrc:compilePluginsBlocks UP-TO-DATE

> Task :buildSrc:generatePrecompiledScriptPluginAccessors UP-TO-DATE

> Task :buildSrc:generateScriptPluginAdapters UP-TO-DATE

> Task :buildSrc:compileKotlin UP-TO-DATE

> Task :buildSrc:compileJava NO-SOURCE

> Task :buildSrc:compileGroovy NO-SOURCE

> Task :buildSrc:pluginDescriptors UP-TO-DATE

> Task :buildSrc:processResources UP-TO-DATE

> Task :buildSrc:classes UP-TO-DATE

> Task :buildSrc:jar UP-TO-DATE

> Task :list:compileJava UP-TO-DATE

> Task :utilities:compileJava UP-TO-DATE

> Task :app:compileJava UP-TO-DATE

BUILD SUCCESSFUL in 374ms

12 actionable tasks: 12 up-to-date

When you run a task that has been previously executed and hasn’t changed, then UP-TO-DATE is printed next to the task.

Tip: To permanently enable verbose mode, add org.gradle.console=verbose to your gradle.properties file.

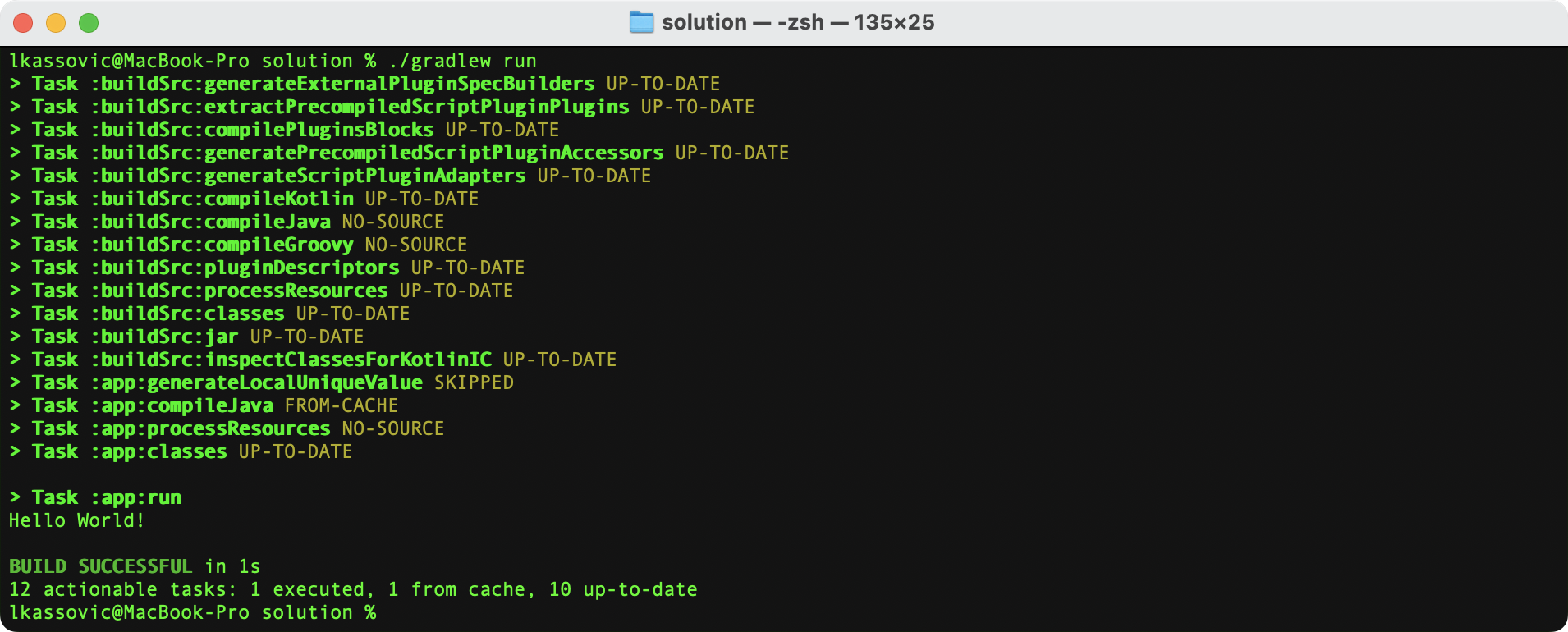

Build caching

Incremental Builds are a great optimization that helps avoid work already done. If a developer continuously changes a single file, there is likely no need to rebuild all the other files in the project.

However, what happens when the same developer switches to a new branch created last week? The files are rebuilt, even though the developer is building something that has been built before.

This is where a build cache is helpful.

The build cache stores previous build results and restores them when needed. It prevents the redundant work and cost of executing time-consuming and expensive processes.

When the build cache has been used to repopulate the local directory, the tasks are marked as FROM-CACHE:

$ ./gradlew compileJava --build-cache

> Task :buildSrc:generateExternalPluginSpecBuilders UP-TO-DATE

> Task :buildSrc:extractPrecompiledScriptPluginPlugins UP-TO-DATE

> Task :buildSrc:compilePluginsBlocks UP-TO-DATE

> Task :buildSrc:generatePrecompiledScriptPluginAccessors UP-TO-DATE

> Task :buildSrc:generateScriptPluginAdapters UP-TO-DATE

> Task :buildSrc:compileKotlin UP-TO-DATE

> Task :buildSrc:compileJava NO-SOURCE

> Task :buildSrc:compileGroovy NO-SOURCE

> Task :buildSrc:pluginDescriptors UP-TO-DATE

> Task :buildSrc:processResources UP-TO-DATE

> Task :buildSrc:classes UP-TO-DATE

> Task :buildSrc:jar UP-TO-DATE

> Task :list:compileJava FROM-CACHE

> Task :utilities:compileJava FROM-CACHE

> Task :app:compileJava FROM-CACHE

BUILD SUCCESSFUL in 364ms

12 actionable tasks: 3 from cache, 9 up-to-date

Once the local directory has been repopulated, the next execution will mark tasks as UP-TO-DATE and not FROM-CACHE.

The build cache allows you to share and reuse unchanged build and test outputs across teams. This speeds up local and CI builds since cycles are not wasted re-building binaries unaffected by new code changes.
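As a hedged sketch, the local build cache can be pointed at a specific directory from the settings file. The directory name build-cache is an arbitrary choice for this example, and the block only configures where entries are stored. Caching itself still has to be enabled, for example with the --build-cache option shown above.

```kotlin
// settings.gradle.kts (sketch; "build-cache" is an arbitrary example directory)
buildCache {
    local {
        // Store cache entries inside the project root instead of the default location
        directory = File(rootDir, "build-cache")
    }
}
```

Keeping the cache in a known directory can make it easier to inspect or clear during experiments. For everyday use, the default location in the Gradle User Home is usually sufficient.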

Consult the Build cache chapter to learn more.

Next Step: Learn about Build Scans >>

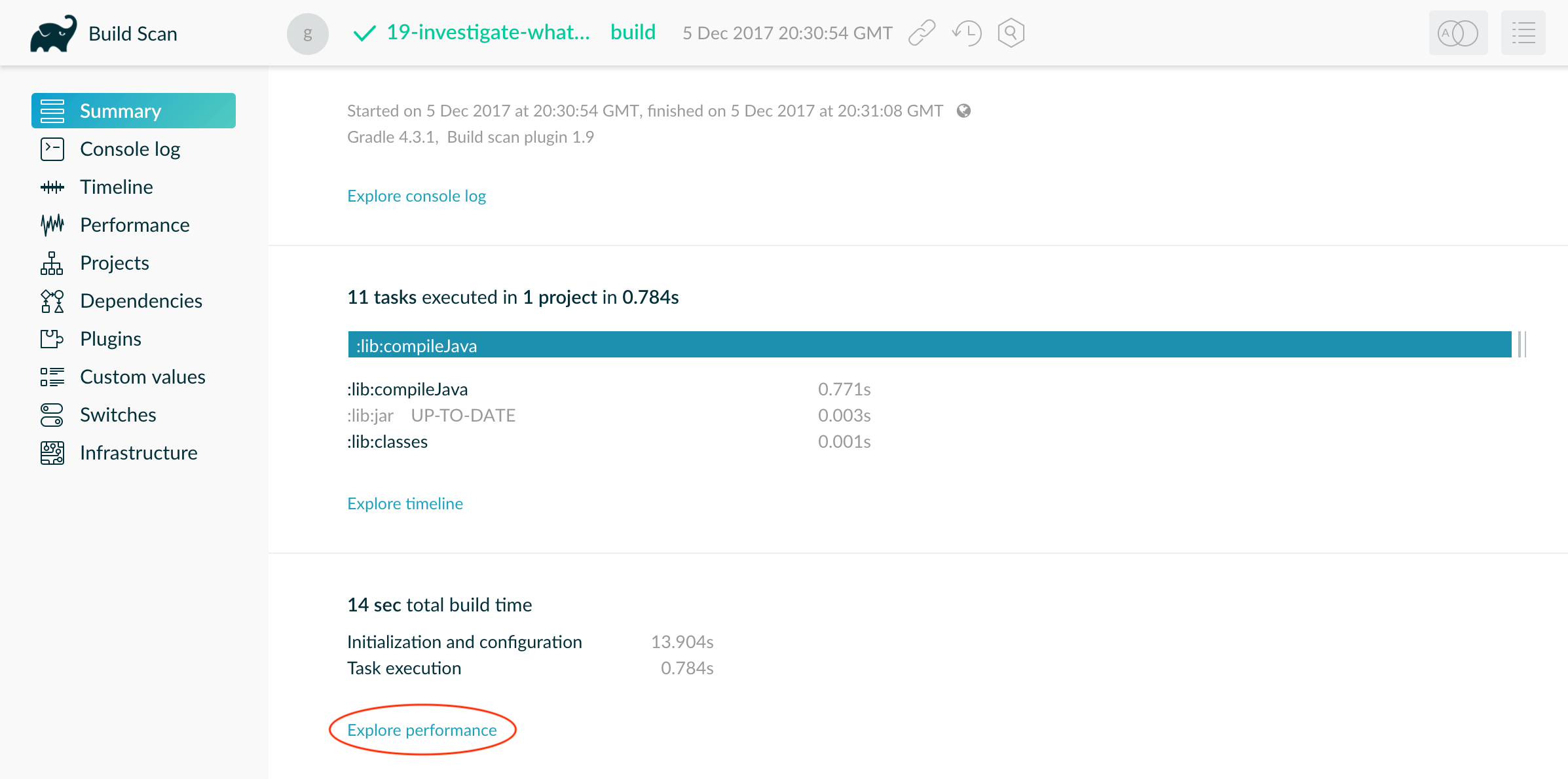

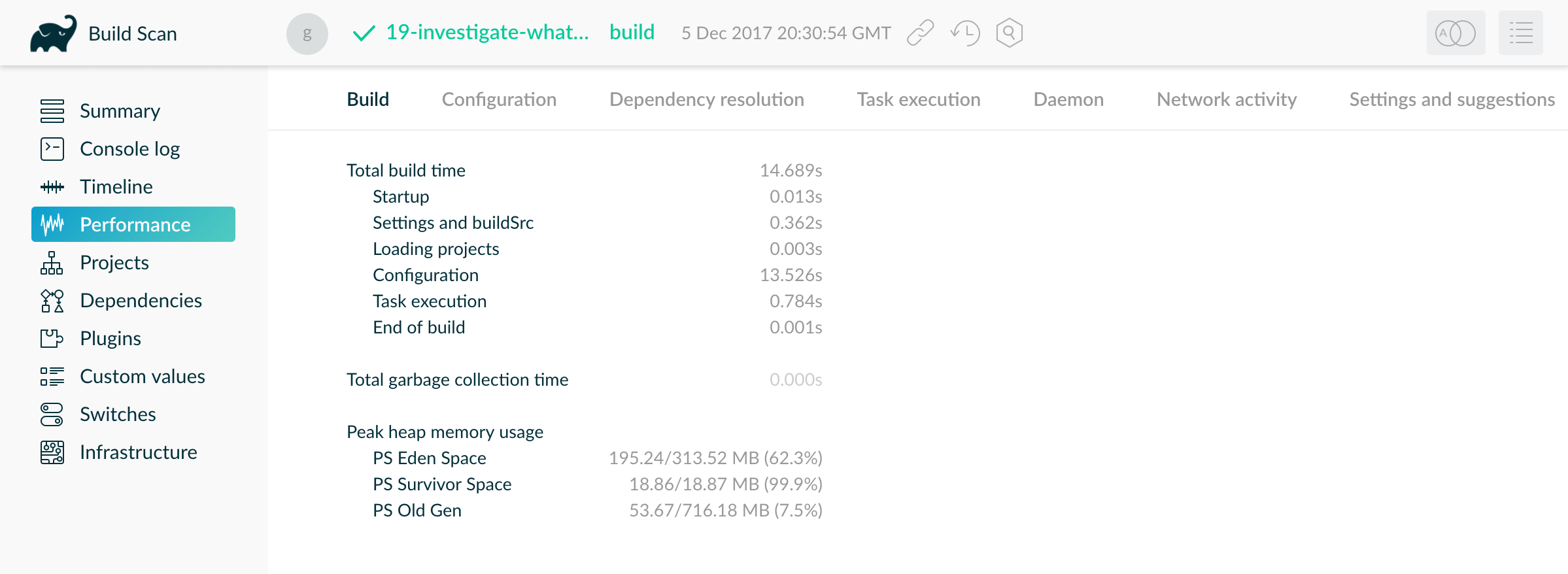

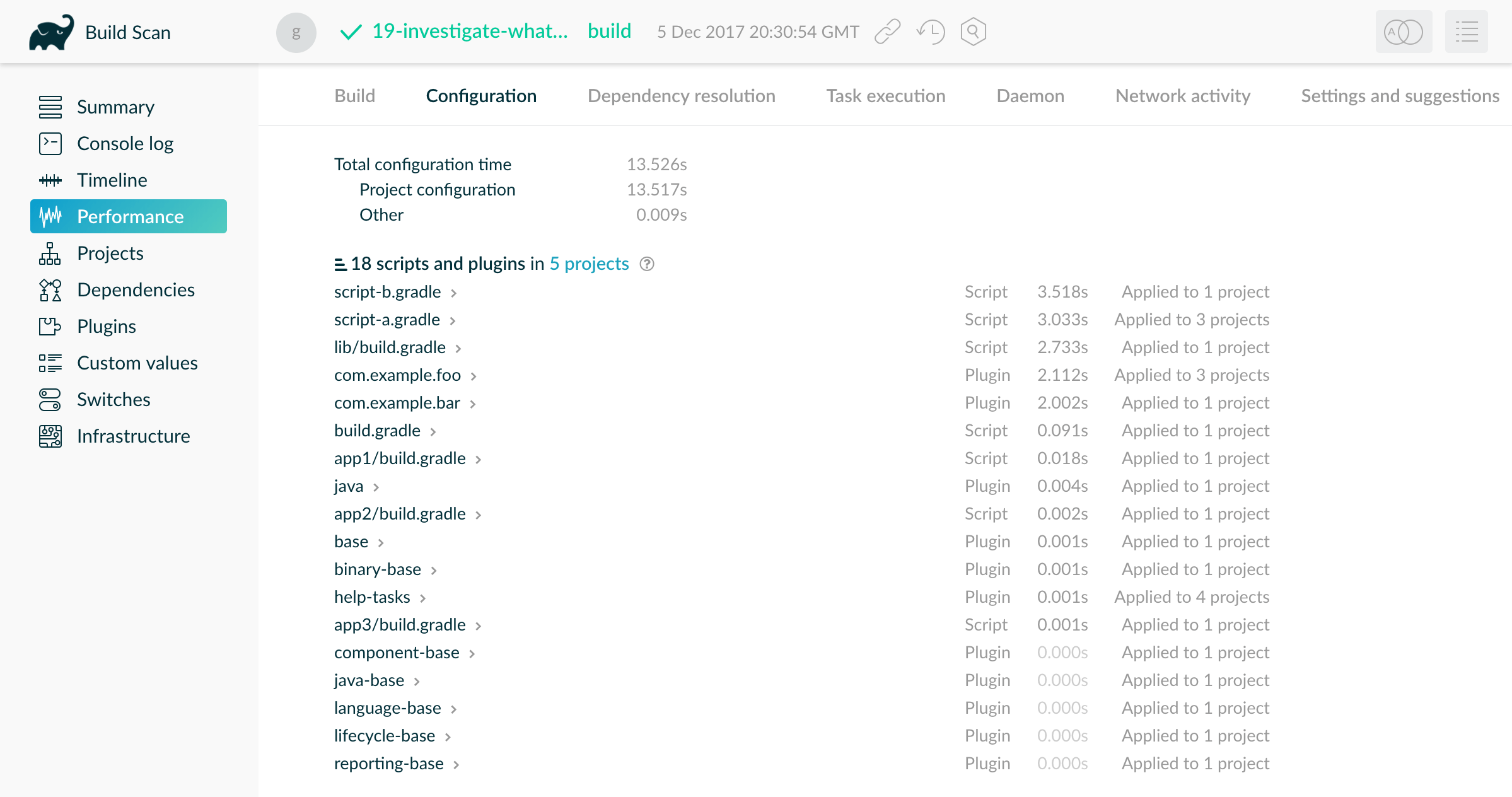

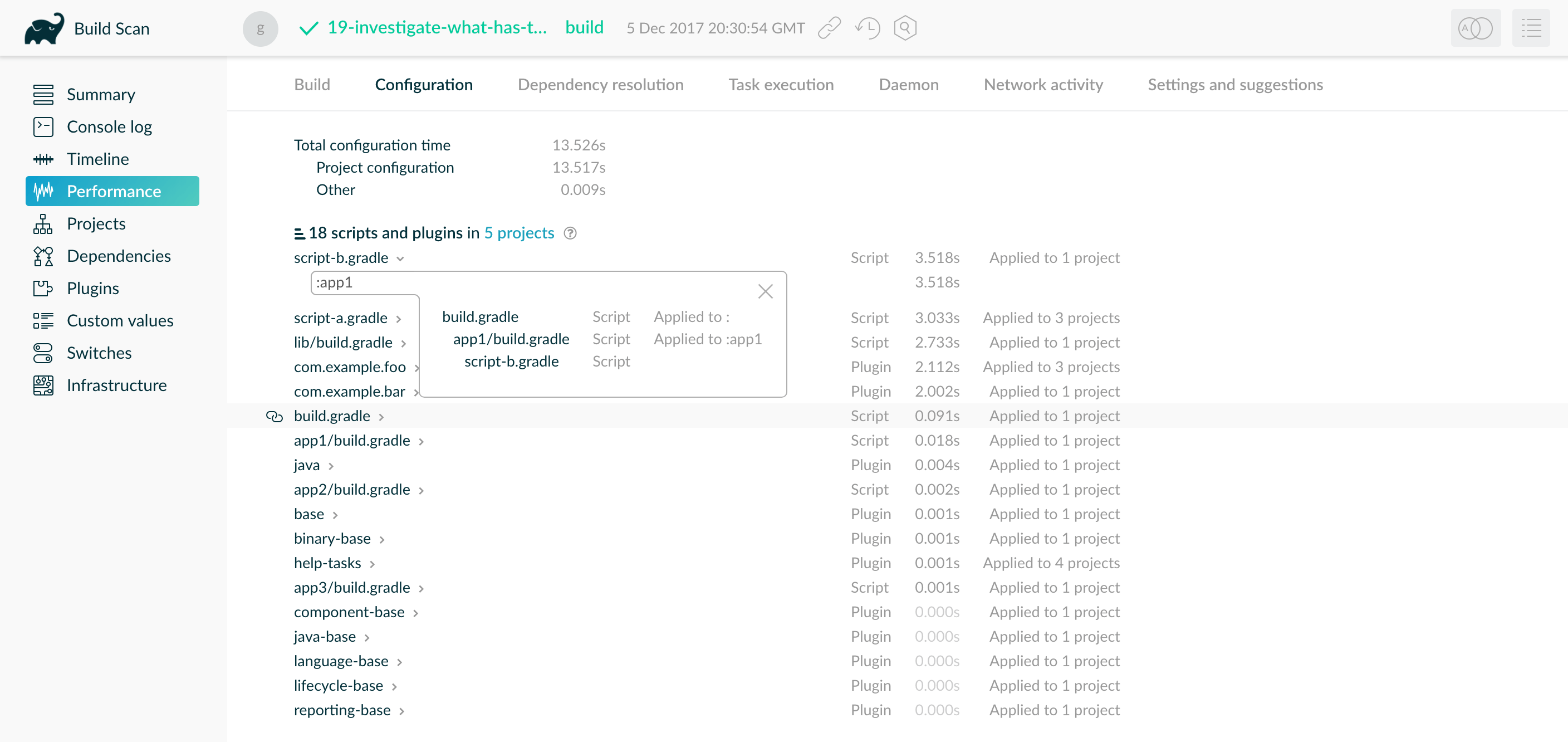

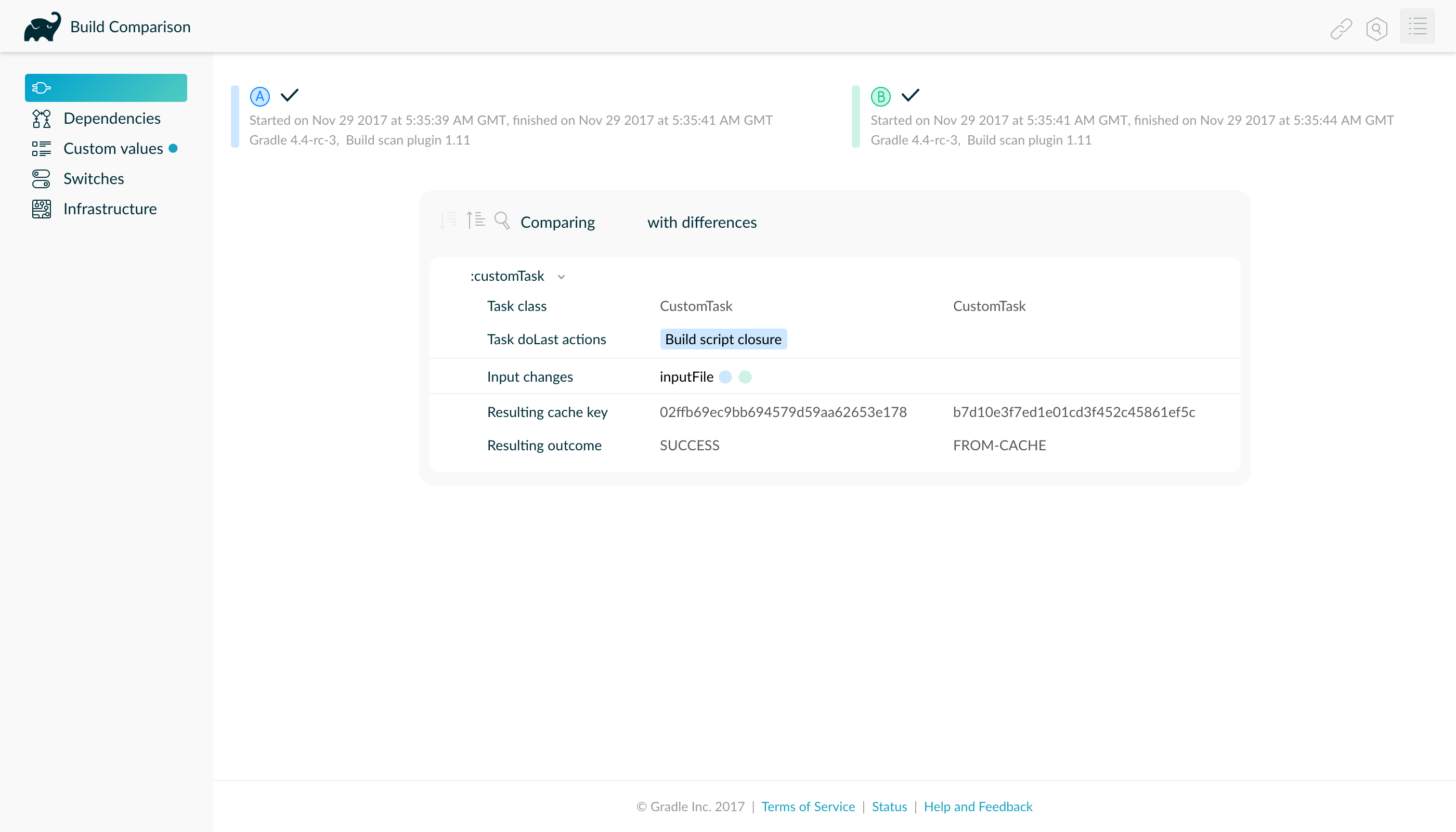

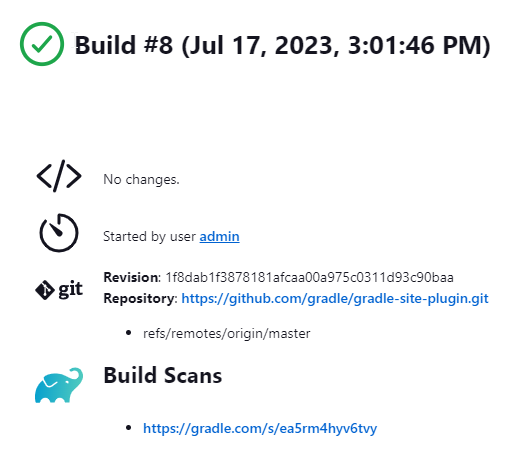

Build Scans

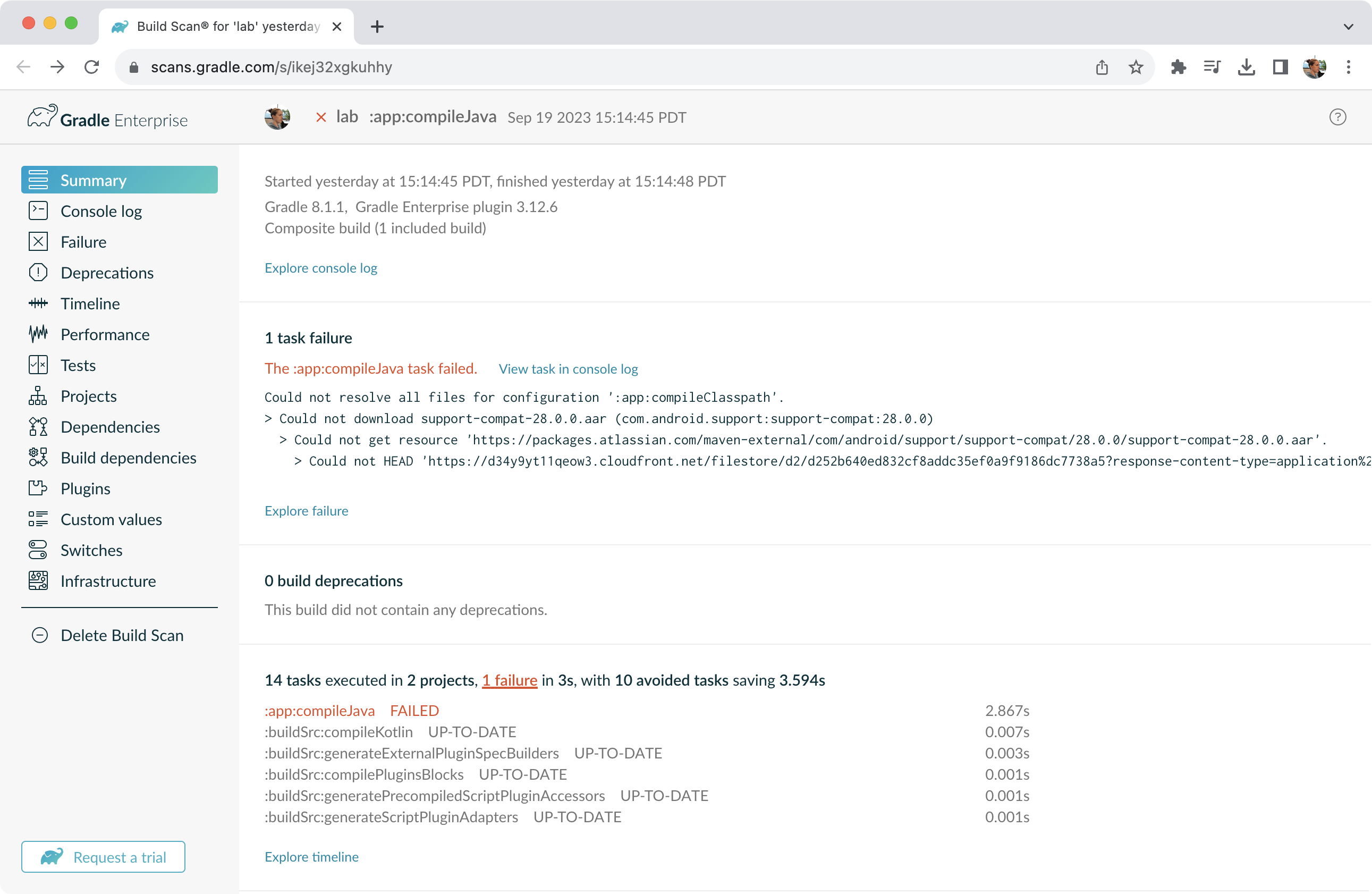

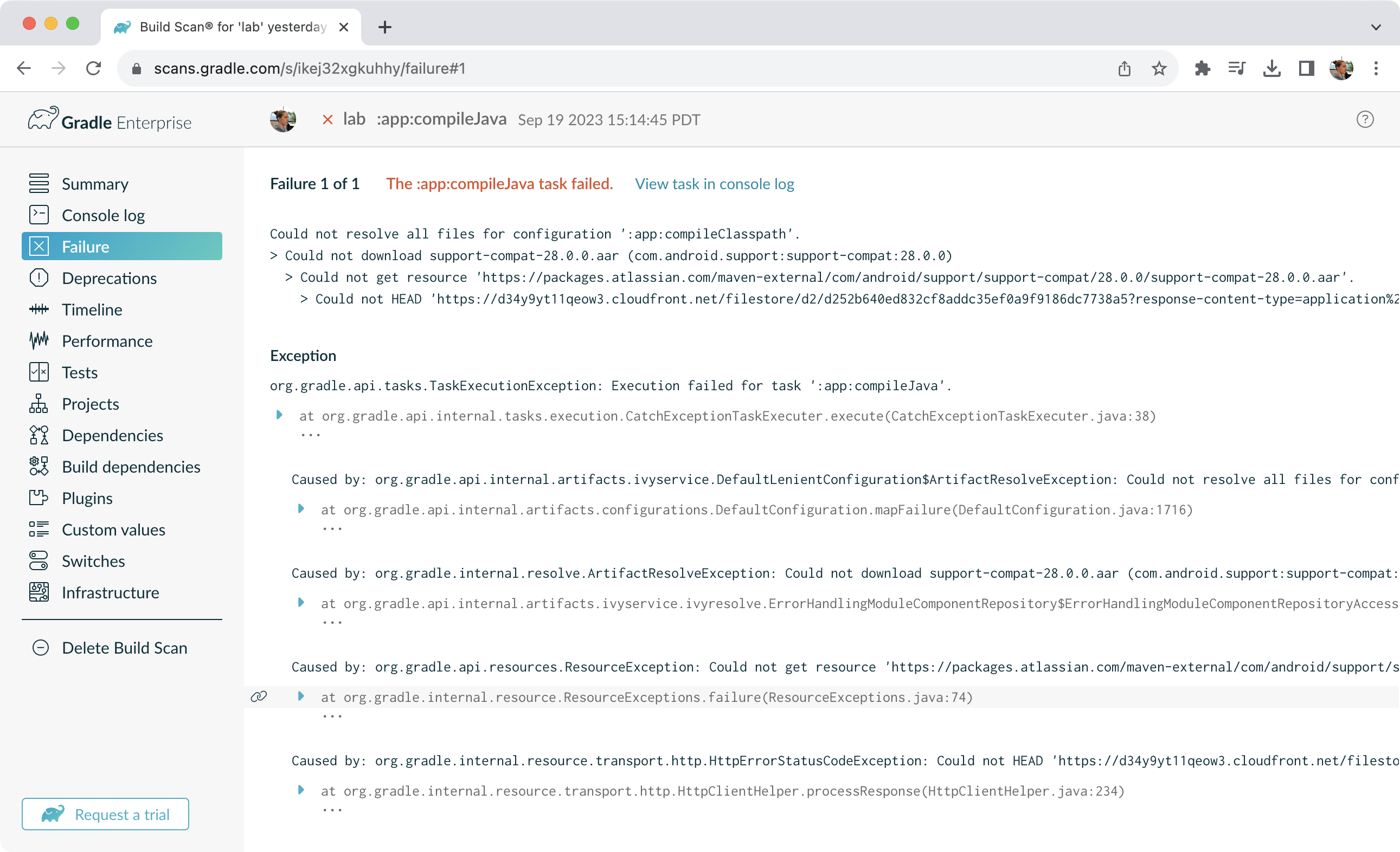

A build scan is a representation of metadata captured as you run your build.


Gradle captures your build metadata and sends it to the Build Scan Service. The service then transforms the metadata into information you can analyze and share with others.

The information that scans collect can be an invaluable resource when troubleshooting, collaborating on, or optimizing the performance of your builds.

For example, with a build scan, it’s no longer necessary to copy and paste error messages or include all the details about your environment each time you want to ask a question on Stack Overflow, Slack, or the Gradle Forum. Instead, copy the link to your latest build scan.

Enable Build Scans

To enable a build scan for a Gradle command, add the --scan option to the command line:

./gradlew build --scan

You may be prompted to agree to the terms to use Build Scans.

Visit the Build Scans page to learn more.

Next Step: Start the Tutorial >>

OTHER TOPICS

Continuous Builds

Continuous Build allows you to automatically re-execute the requested tasks when file inputs change.

You can execute the build in this mode using the -t or --continuous command-line option.

For example, you can continuously run the test task and all dependent tasks by running:

$ gradle test --continuous

Gradle will behave as if you ran gradle test after a change to sources or tests that contribute to the requested tasks.

This means unrelated changes (such as changes to build scripts) will not trigger a rebuild.

To incorporate build logic changes, the continuous build must be restarted manually.

Continuous build uses file system watching to detect changes to the inputs.

If file system watching does not work on your system, then continuous build won’t work either.

In particular, continuous build does not work when using --no-daemon.

When Gradle detects a change to the inputs, it will not trigger the build immediately.

Instead, it will wait until no additional changes are detected for a certain period of time - the quiet period.

You can configure the quiet period, in milliseconds, with the Gradle property org.gradle.continuous.quietperiod.

Terminating Continuous Build

If Gradle is attached to an interactive input source, such as a terminal, the continuous build can be exited by pressing CTRL-D (on Microsoft Windows, you must also press ENTER or RETURN after CTRL-D).

If Gradle is not attached to an interactive input source (e.g. is running as part of a script), the build process must be terminated (e.g. using the kill command or similar).

If the build is being executed via the Tooling API, the build can be cancelled using the Tooling API’s cancellation mechanism.

Limitations

Under some circumstances, continuous build may not detect changes to inputs.

Creating input directories

Sometimes, creating an input directory that was previously missing does not trigger a build, due to the way file system watching works.

For example, creating the src/main/java directory may not trigger a build.

Similarly, if the input is a filtered file tree and no files are matching the filter, the creation of matching files may not trigger a build.

Inputs of untracked tasks

Changes to the inputs of untracked tasks or tasks that have no outputs may not trigger a build.

Changes to files outside of project directories

Gradle only watches for changes to files inside the project directory. Changes to files outside the project directory will go undetected and not trigger a build.

Build cycles

Gradle starts watching for changes just before a task executes. If a task modifies its own inputs while executing, Gradle will detect the change and trigger a new build. If every time the task executes, the inputs are modified again, the build will be triggered again. This isn’t unique to continuous build. A task that modifies its own inputs will never be considered up-to-date when run "normally" without continuous build.

If your build enters a build cycle like this, you can track down the task by looking at the list of files reported changed by Gradle.

After identifying the file(s) that are changed during each build, you should look for a task that has that file as an input.

In some cases, it may be obvious (e.g., a Java file is compiled with compileJava).

In other cases, you can use --info logging to find the task that is out-of-date due to the identified files.

THE BASICS

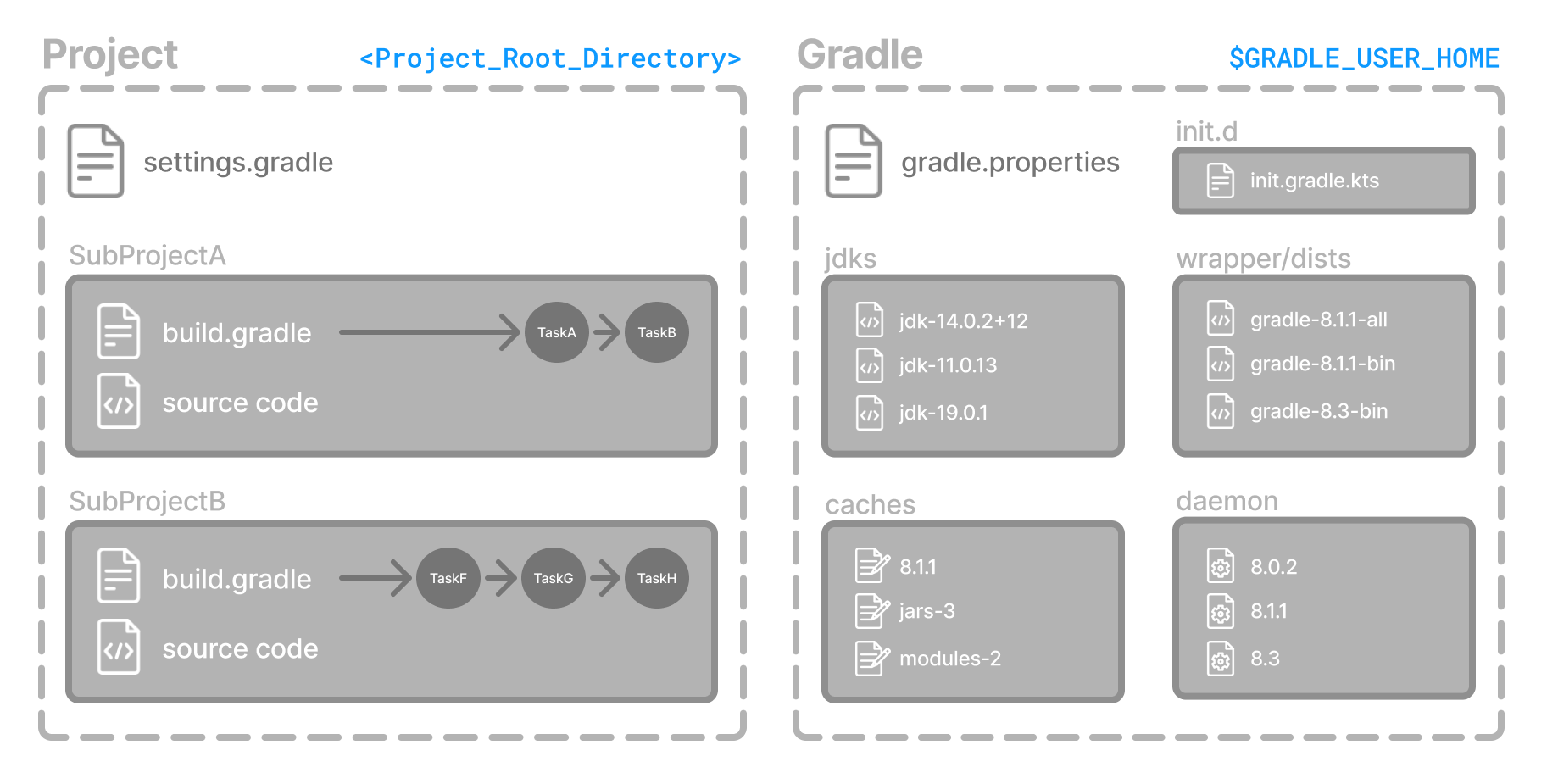

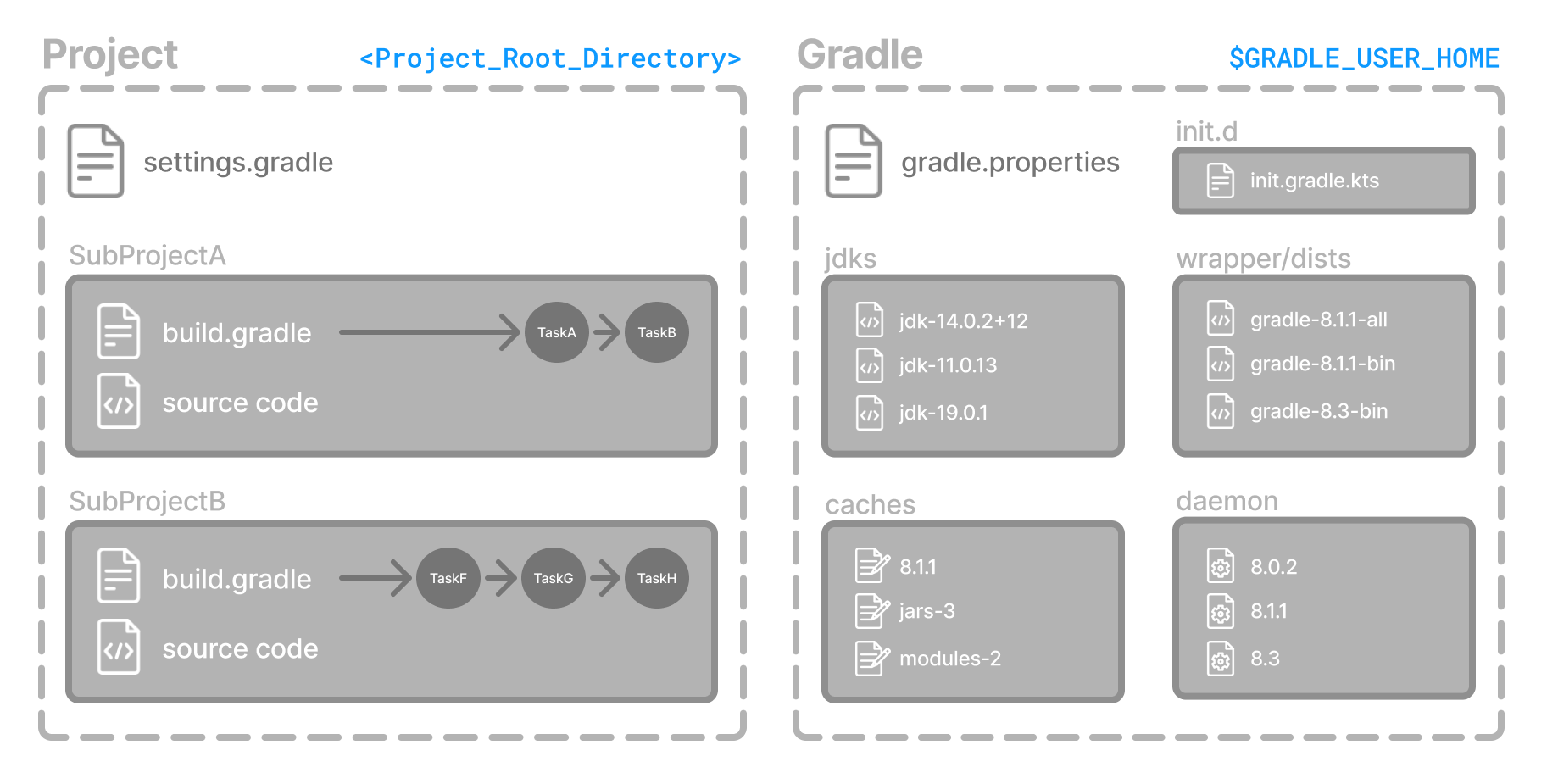

Gradle Directories

Gradle uses two main directories to perform and manage its work: the Gradle User Home directory and the Project Root directory.

Gradle User Home directory

By default, the Gradle User Home (~/.gradle or C:\Users\<USERNAME>\.gradle) stores global configuration properties, initialization scripts, caches, and log files.

It can be set with the environment variable GRADLE_USER_HOME.

Tip: Not to be confused with GRADLE_HOME, the optional installation directory for Gradle.

It is roughly structured as follows:

├── caches // (1)

│   ├── 4.8 // (2)

│   ├── 4.9 // (2)

│   ├── ⋮

│   ├── jars-3 // (3)

│   └── modules-2 // (3)

├── daemon // (4)

│   ├── ⋮

│   ├── 4.8

│   └── 4.9

├── init.d // (5)

│   └── my-setup.gradle

├── jdks // (6)

│   ├── ⋮

│   └── jdk-14.0.2+12

├── wrapper

│   └── dists // (7)

│       ├── ⋮

│       ├── gradle-4.8-bin

│       ├── gradle-4.9-all

│       └── gradle-4.9-bin

└── gradle.properties // (8)

(1) Global cache directory (for everything that is not project-specific).

(2) Version-specific caches (e.g., to support incremental builds).

(3) Shared caches (e.g., for artifacts of dependencies).

(4) Registry and logs of the Gradle Daemon.

(5) Global initialization scripts.

(6) JDKs downloaded by the toolchain support.

(7) Distributions downloaded by the Gradle Wrapper.

(8) Global Gradle configuration properties.

Consult the Gradle Directories reference to learn more.

Project Root directory

The project root directory contains all source files from your project.

It also contains files and directories Gradle generates, such as .gradle and build.

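The gradle directory, which holds the Wrapper files, is usually checked into source control. The .gradle and build directories, by contrast, hold caches, the output of your builds, and other transient files Gradle uses to support features like incremental builds, so they are usually excluded from source control.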

The anatomy of a typical project root directory looks as follows:

├── .gradle // (1)

│   ├── 4.8 // (2)

│   ├── 4.9 // (2)

│   └── ⋮

├── build // (3)

├── gradle

│   └── wrapper // (4)

├── gradle.properties // (5)

├── gradlew // (6)

├── gradlew.bat // (6)

├── settings.gradle.kts // (7)

├── subproject-one // (8)

│   └── build.gradle.kts // (9)

├── subproject-two // (8)

│   └── build.gradle.kts // (9)

└── ⋮

(1) Project-specific cache directory generated by Gradle.

(2) Version-specific caches (e.g., to support incremental builds).

(3) The build directory of this project into which Gradle generates all build artifacts.

(4) Contains the JAR file and configuration of the Gradle Wrapper.

(5) Project-specific Gradle configuration properties.

(6) Scripts for executing builds using the Gradle Wrapper.

(7) The project’s settings file where the list of subprojects is defined.

(8) Usually, a project is organized into one or multiple subprojects.

(9) Each subproject has its own Gradle build script.

Consult the Gradle Directories reference to learn more.

Next Step: Learn how to structure Multi-Project Builds >>

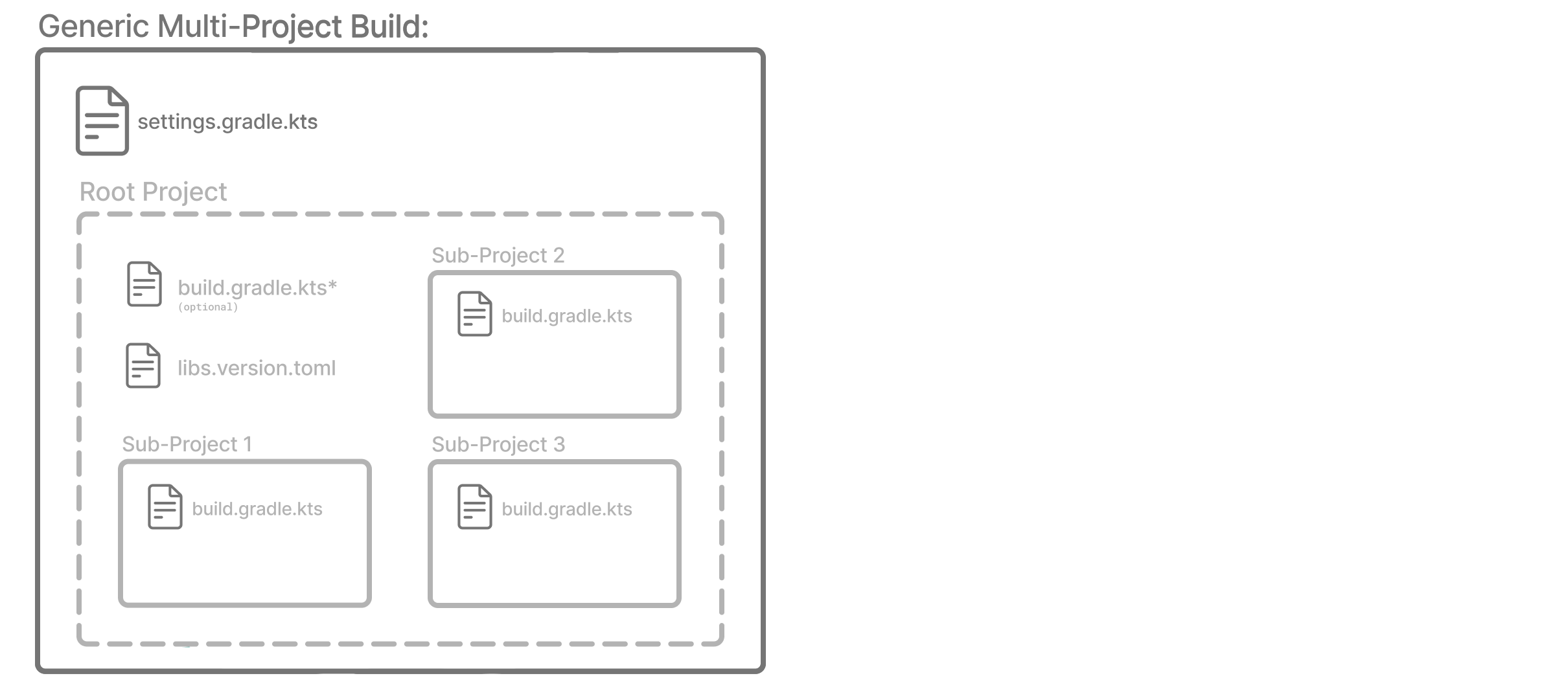

Multi-Project Build Basics

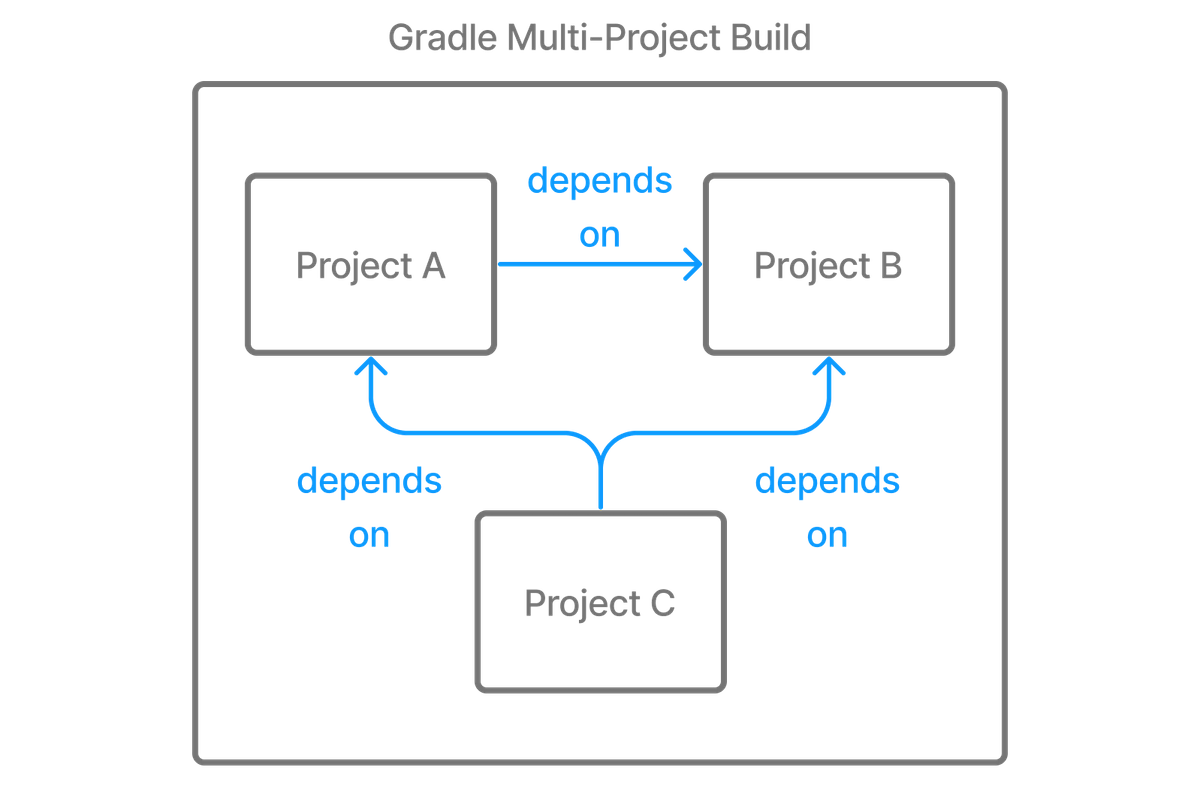

Gradle supports multi-project builds.

While some small projects and monolithic applications may contain a single build file and source tree, it is often more common for a project to have been split into smaller, interdependent modules. The word "interdependent" is vital, as you typically want to link the many modules together through a single build.

Gradle supports this scenario through multi-project builds. This is sometimes referred to as a multi-module project. Gradle refers to modules as subprojects.

A multi-project build consists of one root project and one or more subprojects.

Multi-Project structure

The following represents the structure of a multi-project build that contains three subprojects:

The directory structure should look as follows:

├── .gradle

│ └── ⋮

├── gradle

│ ├── libs.versions.toml

│ └── wrapper

├── gradlew

├── gradlew.bat

├── settings.gradle.kts // (1)

├── sub-project-1

│ └── build.gradle.kts // (2)

├── sub-project-2

│ └── build.gradle.kts // (2)

└── sub-project-3

└── build.gradle.kts // (2)

(1) The settings.gradle.kts file should include all subprojects.

(2) Each subproject should have its own build.gradle.kts file.

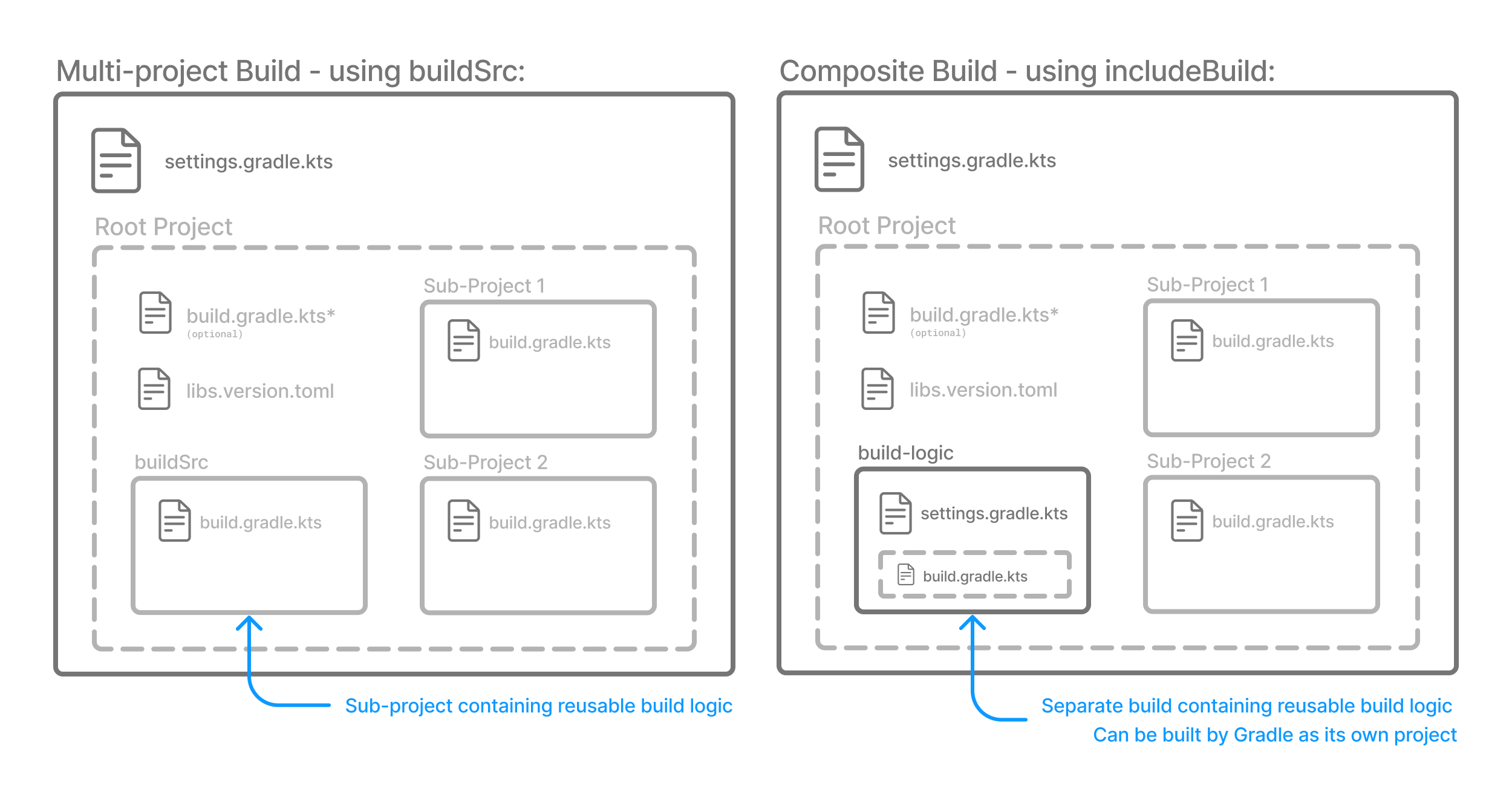

Multi-Project standards

The Gradle community has two standards for multi-project build structures:

- Multi-Project Builds using buildSrc - where buildSrc is a subproject-like directory at the Gradle project root containing all the build logic.

- Composite Builds - a build that includes other builds where build-logic is a build directory at the Gradle project root containing reusable build logic.

1. Multi-Project Builds using buildSrc

Multi-project builds allow you to organize projects with many modules, wire dependencies between those modules, and easily share common build logic amongst them.

For example, a build that has many modules called mobile-app, web-app, api, lib, and documentation could be structured as follows:

.

├── gradle

├── gradlew

├── settings.gradle.kts

├── buildSrc

│ ├── build.gradle.kts

│ └── src/main/kotlin/shared-build-conventions.gradle.kts

├── mobile-app

│ └── build.gradle.kts

├── web-app

│ └── build.gradle.kts

├── api

│ └── build.gradle.kts

├── lib

│ └── build.gradle.kts

└── documentation

└── build.gradle.kts

The modules will have dependencies between them such as web-app and mobile-app depending on lib.

This means that in order for Gradle to build web-app or mobile-app, it must build lib first.
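A dependency like this is typically expressed as a project dependency in the consuming module's build script. The sketch below assumes that web-app is a JVM module using the java-library plugin. The document does not say which plugins these modules apply, so treat the plugin choice as an assumption.

```kotlin
// web-app/build.gradle.kts (sketch; the plugin choice is an assumption)
plugins {
    id("java-library")
}

dependencies {
    // Depend on the sibling subproject by its project path
    implementation(project(":lib"))
}
```

Because the dependency refers to the project path :lib, Gradle can work out that lib has to be built before web-app without any explicit task ordering.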

In this example, the root settings file will look as follows:

include("mobile-app", "web-app", "api", "lib", "documentation")

Note: The order in which the subprojects (modules) are included does not matter.

The buildSrc directory is automatically recognized by Gradle.

It is a good place to define and maintain shared configuration or imperative build logic, such as custom tasks or plugins.

buildSrc is automatically included in your build as a special subproject if a build.gradle(.kts) file is found under buildSrc.

If the java plugin is applied to the buildSrc project, the compiled code from buildSrc/src/main/java is put in the classpath of the root build script, making it available to any subproject (web-app, mobile-app, lib, etc…) in the build.
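The layout above stores shared logic in buildSrc/src/main/kotlin/shared-build-conventions.gradle.kts. One plausible way to wire this up is sketched below as three separate files shown in one listing. The contents of the convention script (applying java-library and setting the compiler encoding) are illustrative assumptions, not something the example project prescribes.

```kotlin
// --- buildSrc/build.gradle.kts ---
// The kotlin-dsl plugin lets *.gradle.kts files under src/main/kotlin act as plugins
plugins {
    `kotlin-dsl`
}

repositories {
    gradlePluginPortal()
}

// --- buildSrc/src/main/kotlin/shared-build-conventions.gradle.kts ---
// Hypothetical shared convention: every module that applies it becomes a
// Java library and compiles its sources as UTF-8
plugins {
    id("java-library")
}

tasks.withType<JavaCompile>().configureEach {
    options.encoding = "UTF-8"
}

// --- lib/build.gradle.kts ---
// The plugin ID is derived from the script's file name
plugins {
    id("shared-build-conventions")
}
```

Each subproject that applies shared-build-conventions picks up the same configuration, so a change to the convention script takes effect across all of those modules.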

Consult how to declare dependencies between subprojects to learn more.

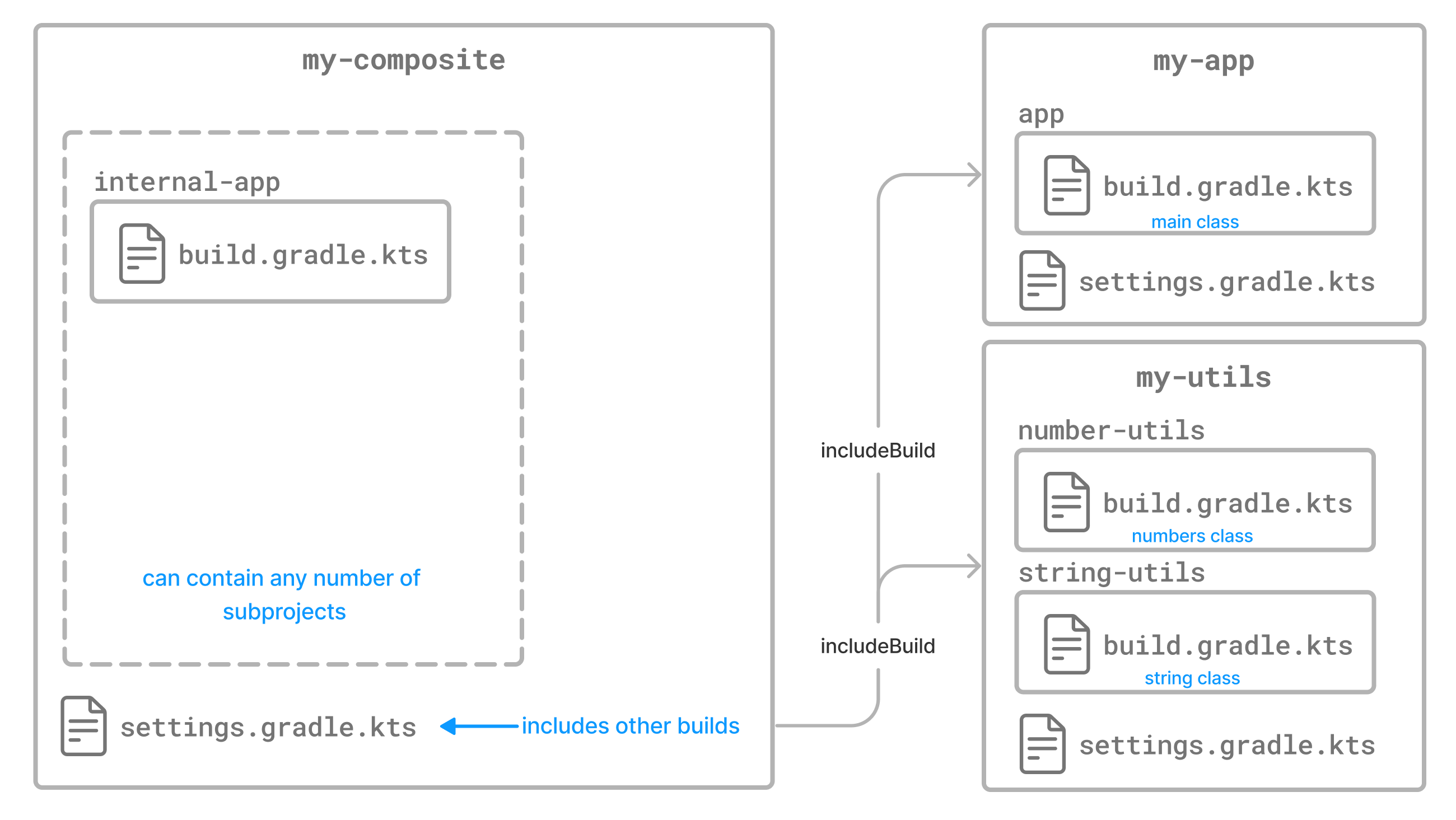

2. Composite Builds

Composite Builds, also referred to as included builds, are best for sharing logic between builds (not subprojects) or isolating access to shared build logic (i.e., convention plugins).

Let’s take the previous example.

The logic in buildSrc has been turned into a project that contains plugins and can be published and worked on independently of the root project build.

The plugin is moved to its own build called build-logic with a build script and settings file:

.

├── gradle

├── gradlew

├── settings.gradle.kts

├── build-logic

│ ├── settings.gradle.kts

│ └── conventions

│ ├── build.gradle.kts

│ └── src/main/kotlin/shared-build-conventions.gradle.kts

├── mobile-app

│ └── build.gradle.kts

├── web-app

│ └── build.gradle.kts

├── api

│ └── build.gradle.kts

├── lib

│ └── build.gradle.kts

└── documentation

└── build.gradle.kts

Note: The fact that build-logic is located in a subdirectory of the root project is irrelevant. The folder could be located outside the root project if desired.

The root settings file includes the entire build-logic build:

pluginManagement {

includeBuild("build-logic")

}

include("mobile-app", "web-app", "api", "lib", "documentation")

Consult how to create composite builds with includeBuild to learn more.
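For completeness, here is a sketch of what the two files inside build-logic might contain. The root project name and the use of the kotlin-dsl plugin are assumptions made for illustration. The original layout only shows that these files exist.

```kotlin
// --- build-logic/settings.gradle.kts ---
// An included build is a full build of its own, so it has its own settings file
rootProject.name = "build-logic" // assumed name
include("conventions")

// --- build-logic/conventions/build.gradle.kts ---
// Compiles shared-build-conventions.gradle.kts into a plugin that the
// main build can apply by ID
plugins {
    `kotlin-dsl`
}

repositories {
    gradlePluginPortal()
}
```

Since the root settings file includes build-logic inside pluginManagement, plugins produced by the conventions project should be resolvable from the plugins block of any subproject, such as id("shared-build-conventions").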

Multi-Project path

A project path has the following pattern: it starts with an optional colon, which denotes the root project.

The root project, :, is the only project in a path not specified by its name.

The rest of a project path is a colon-separated sequence of project names, where the next project is a subproject of the previous project:

:sub-project-1

You can see the project paths when running gradle projects:

------------------------------------------------------------

Root project 'project'

------------------------------------------------------------

Root project 'project'

+--- Project ':sub-project-1'

\--- Project ':sub-project-2'

Project paths usually reflect the filesystem layout, but there are exceptions, most notably for composite builds.

Identifying project structure

You can use the gradle projects command to identify the project structure.

As an example, let’s use a multi-project build with the following structure:

> gradle -q projects

------------------------------------------------------------

Root project 'multiproject'

------------------------------------------------------------

Root project 'multiproject'

+--- Project ':api'

+--- Project ':services'

|    +--- Project ':services:shared'

|    \--- Project ':services:webservice'

\--- Project ':shared'

To see a list of the tasks of a project, run gradle <project-path>:tasks

For example, try running gradle :api:tasks
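A settings file along the following lines would produce a hierarchy like the one reported above. This is a reconstruction from the printed project paths, not the actual settings file of that build.

```kotlin
// settings.gradle.kts (sketch reconstructed from the project paths above)
rootProject.name = "multiproject"

// Top-level subprojects
include("api", "shared")

// Nested subprojects: including these paths also creates the
// intermediate ':services' project
include("services:shared", "services:webservice")
```

Each colon-separated segment in an include path corresponds to one level of the project hierarchy, which by default maps to one directory level on disk.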

Like single-project builds, multi-project builds are collections of tasks you can run. The difference is that you may want to control which project’s tasks get executed.

The following sections will cover your two options for executing tasks in a multi-project build.

Executing tasks by name

The command gradle test will execute the test task in every subproject, relative to the current working directory, that has that task.

If you run the command from the root project directory, you will run test in api, shared, services:shared and services:webservice.

If you run the command from the services project directory, you will only execute the task in services:shared and services:webservice.

The basic rule behind Gradle’s behavior is to execute all tasks down the hierarchy with this name, and to complain if no such task is found in any of the subprojects traversed.

Note: Some task selectors, like help or dependencies, will only run the task on the project they are invoked on and not on all the subprojects to reduce the amount of information printed on the screen.

Executing tasks by fully qualified name

You can use a task’s fully qualified name to execute a specific task in a particular subproject.

For example: gradle :services:webservice:build will run the build task of the webservice subproject.

The fully qualified name of a task is its project path plus the task name.

This approach works for any task, so if you want to know what tasks are in a particular subproject, use the tasks task, e.g. gradle :services:webservice:tasks.

Multi-Project building and testing

The build task is typically used to compile, test, and check a single project.

In multi-project builds, you may often want to do all of these tasks across various projects.

The buildNeeded and buildDependents tasks can help with this.

In this example, the :services:person-service project depends on both the :api and :shared projects.

The :api project also depends on the :shared project.

Suppose you are working on a single project, the :api project. You have been making changes but have not built the entire project since performing a clean.

You want to build any necessary supporting JARs but only perform code quality and unit tests on the parts of the project you have changed.

The build task does this:

$ gradle :api:build

> Task :shared:compileJava

> Task :shared:processResources

> Task :shared:classes

> Task :shared:jar

> Task :api:compileJava

> Task :api:processResources

> Task :api:classes

> Task :api:jar

> Task :api:assemble

> Task :api:compileTestJava

> Task :api:processTestResources

> Task :api:testClasses

> Task :api:test

> Task :api:check

> Task :api:build

BUILD SUCCESSFUL in 0s

If you have just gotten the latest version of the source from your version control system, which included changes in other projects that :api depends on, you might want to build all the projects you depend on AND test them too.

The buildNeeded task builds AND tests all the projects from the project dependencies of the testRuntimeClasspath configuration:

$ gradle :api:buildNeeded

> Task :shared:compileJava

> Task :shared:processResources

> Task :shared:classes

> Task :shared:jar

> Task :api:compileJava

> Task :api:processResources

> Task :api:classes

> Task :api:jar

> Task :api:assemble

> Task :api:compileTestJava

> Task :api:processTestResources

> Task :api:testClasses

> Task :api:test

> Task :api:check

> Task :api:build

> Task :shared:assemble

> Task :shared:compileTestJava

> Task :shared:processTestResources

> Task :shared:testClasses

> Task :shared:test

> Task :shared:check

> Task :shared:build

> Task :shared:buildNeeded

> Task :api:buildNeeded

BUILD SUCCESSFUL in 0s

You may want to refactor some part of the :api project used in other projects.

If you make these changes, testing only the :api project is insufficient.

You must test all projects that depend on the :api project.

The buildDependents task builds and tests ALL the projects that have a project dependency (in their testRuntimeClasspath configuration) on the specified project:

$ gradle :api:buildDependents
> Task :shared:compileJava
> Task :shared:processResources
> Task :shared:classes
> Task :shared:jar
> Task :api:compileJava
> Task :api:processResources
> Task :api:classes
> Task :api:jar
> Task :api:assemble
> Task :api:compileTestJava
> Task :api:processTestResources
> Task :api:testClasses
> Task :api:test
> Task :api:check
> Task :api:build
> Task :services:person-service:compileJava
> Task :services:person-service:processResources
> Task :services:person-service:classes
> Task :services:person-service:jar
> Task :services:person-service:assemble
> Task :services:person-service:compileTestJava
> Task :services:person-service:processTestResources
> Task :services:person-service:testClasses
> Task :services:person-service:test
> Task :services:person-service:check
> Task :services:person-service:build
> Task :services:person-service:buildDependents
> Task :api:buildDependents

BUILD SUCCESSFUL in 0s

Finally, you can build and test everything in all projects. Any task you run in the root project folder will cause that same-named task to be run on all the children.

You can run gradle build to build and test ALL projects.

Consult the Structuring Builds chapter to learn more.

Build Lifecycle

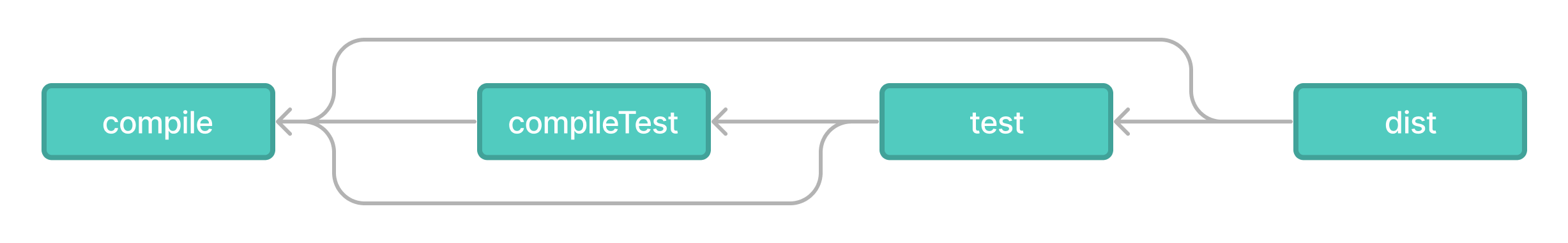

As a build author, you define tasks and dependencies between tasks. Gradle guarantees that these tasks will execute in order of their dependencies.

Your build scripts and plugins configure this dependency graph.

For example, if your project tasks include build, assemble, and createDocs, your build script(s) can ensure that they are executed in the order build → assemble → createDocs.
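As a rough illustration of that ordering, the sketch below wires the three task names together with `dependsOn`. It assumes a `build.gradle.kts` with no plugins applied, so the names `build` and `assemble` are free to register; the printed messages are placeholders. Note that this mirrors the order given in the text, not the order used by the standard lifecycle tasks of the base plugin.

```kotlin
// build.gradle.kts (assumption: no plugins applied, so these names are unused)
tasks.register("build") {
    doLast { println("build") }
}

tasks.register("assemble") {
    dependsOn("build")        // assemble can only run after build
    doLast { println("assemble") }
}

tasks.register("createDocs") {
    dependsOn("assemble")     // createDocs can only run after assemble
    doLast { println("createDocs") }
}
```

Running `gradle createDocs` with this script asks Gradle for one task, and the dependency declarations pull in the other two ahead of it.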

Task Graphs

Gradle builds the task graph before executing any task.

Across all projects in the build, tasks form a Directed Acyclic Graph (DAG).

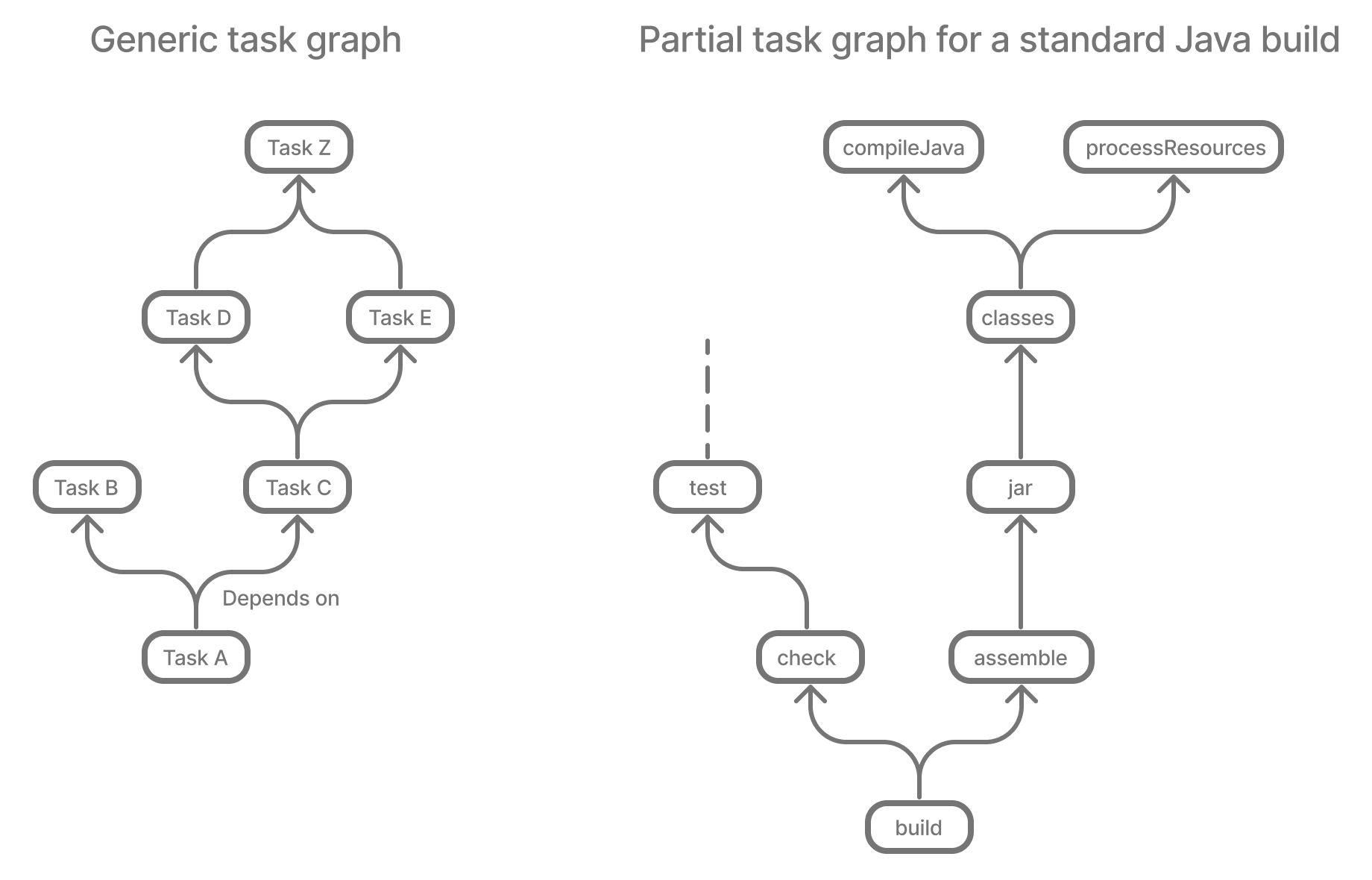

The diagram above shows two example task graphs, one abstract and the other concrete, with dependencies between tasks represented as arrows.

Both plugins and build scripts contribute to the task graph via the task dependency mechanism and annotated inputs/outputs.
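The second mechanism, annotated inputs and outputs, can be sketched as follows. The task types `Producer` and `Consumer` and the file name `message.txt` are hypothetical. Because the consumer's input is wired to the producer's output property, Gradle can infer the task dependency without an explicit `dependsOn`.

```kotlin
// build.gradle.kts
abstract class Producer : DefaultTask() {
    @get:OutputFile
    abstract val outputFile: RegularFileProperty

    @TaskAction
    fun produce() {
        outputFile.get().asFile.writeText("Hello from producer")
    }
}

abstract class Consumer : DefaultTask() {
    @get:InputFile
    abstract val inputFile: RegularFileProperty

    @TaskAction
    fun consume() {
        println(inputFile.get().asFile.readText())
    }
}

val producer = tasks.register<Producer>("producer") {
    outputFile.set(layout.buildDirectory.file("message.txt"))
}

tasks.register<Consumer>("consumer") {
    // Wiring the provider carries the dependency on the producer task.
    inputFile.set(producer.flatMap { it.outputFile })
}
```

With this wiring, requesting `consumer` should also schedule `producer` first.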

Build Phases

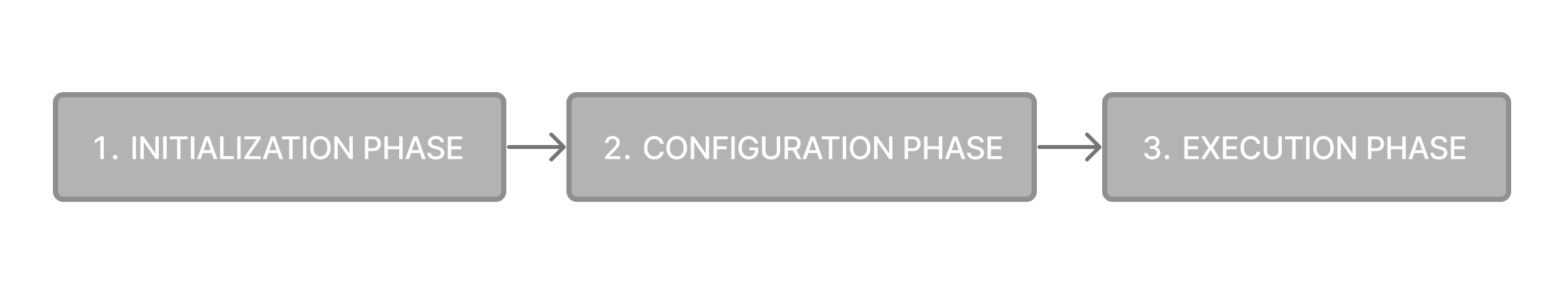

A Gradle build has three distinct phases.

Gradle runs these phases in order:

- Phase 1. Initialization

- Phase 2. Configuration

  - Evaluates the build scripts, build.gradle(.kts), of every project participating in the build.

  - Creates a task graph for requested tasks.

- Phase 3. Execution

  - Schedules and executes the selected tasks.

  - Dependencies between tasks determine execution order.

  - Execution of tasks can occur in parallel.

Example

The following example shows which parts of settings and build files correspond to various build phases:

// settings.gradle.kts
rootProject.name = "basic"
println("This is executed during the initialization phase.")

// build.gradle.kts
println("This is executed during the configuration phase.")

tasks.register("configured") {
    println("This is also executed during the configuration phase, because :configured is used in the build.")
}

tasks.register("test") {
    doLast {
        println("This is executed during the execution phase.")
    }
}

tasks.register("testBoth") {
    doFirst {
        println("This is executed first during the execution phase.")
    }
    doLast {
        println("This is executed last during the execution phase.")
    }
    println("This is executed during the configuration phase as well, because :testBoth is used in the build.")
}

// settings.gradle
rootProject.name = 'basic'
println 'This is executed during the initialization phase.'

// build.gradle
println 'This is executed during the configuration phase.'

tasks.register('configured') {
    println 'This is also executed during the configuration phase, because :configured is used in the build.'
}

tasks.register('test') {
    doLast {
        println 'This is executed during the execution phase.'
    }
}

tasks.register('testBoth') {
    doFirst {
        println 'This is executed first during the execution phase.'
    }
    doLast {
        println 'This is executed last during the execution phase.'
    }
    println 'This is executed during the configuration phase as well, because :testBoth is used in the build.'
}

The following command executes the test and testBoth tasks specified above.

Because Gradle only configures requested tasks and their dependencies, the configured task is never configured:

> gradle test testBoth
This is executed during the initialization phase.

> Configure project :
This is executed during the configuration phase.
This is executed during the configuration phase as well, because :testBoth is used in the build.

> Task :test
This is executed during the execution phase.

> Task :testBoth
This is executed first during the execution phase.
This is executed last during the execution phase.

BUILD SUCCESSFUL in 0s
2 actionable tasks: 2 executed

Phase 1. Initialization

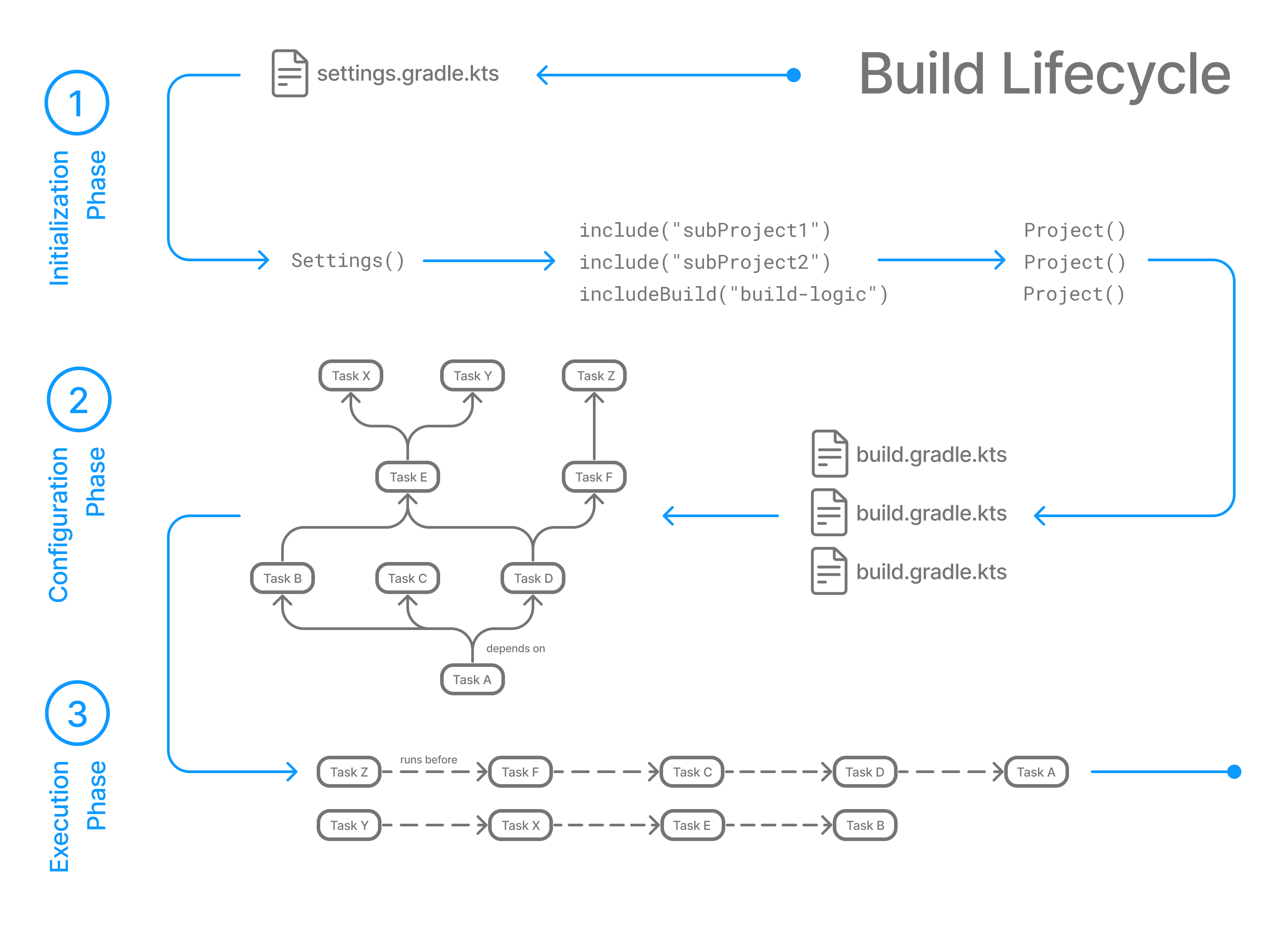

In the initialization phase, Gradle detects the set of projects (root and subprojects) and included builds participating in the build.

Gradle first evaluates the settings file, settings.gradle(.kts), and instantiates a Settings object.

Then, Gradle instantiates Project instances for each project.
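To see what the initialization phase has discovered, a settings script can inspect the project descriptors it has declared. The sketch below assumes two hypothetical subprojects, `app` and `lib`. At this point only lightweight `ProjectDescriptor` objects exist, since the `Project` instances are created afterwards.

```kotlin
// settings.gradle.kts
rootProject.name = "basic"
include("app", "lib")   // hypothetical subprojects

// Runs during initialization, while the settings script is evaluated.
println("Root project: ${rootProject.name}")
rootProject.children.forEach { child ->
    println("Declared project ${child.path} in ${child.projectDir}")
}
```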

Phase 2. Configuration

In the configuration phase, Gradle adds tasks and other properties to the projects found by the initialization phase.

Phase 3. Execution

In the execution phase, Gradle runs tasks.

Gradle uses the task execution graphs generated by the configuration phase to determine which tasks to execute.
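One way to observe the graph that the execution phase will use is the `whenReady` callback on `gradle.taskGraph`. This is a small sketch for exploration rather than a recommended production pattern, and the printed label is arbitrary.

```kotlin
// build.gradle.kts
gradle.taskGraph.whenReady {
    // Here `this` is the TaskExecutionGraph, populated once configuration is done.
    println("Tasks selected for execution:")
    allTasks.forEach { task ->
        println("  ${task.path}")
    }
}
```

The callback fires after the graph has been calculated and before any task runs, so the listing reflects the requested tasks plus their dependencies.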

Writing Settings Files

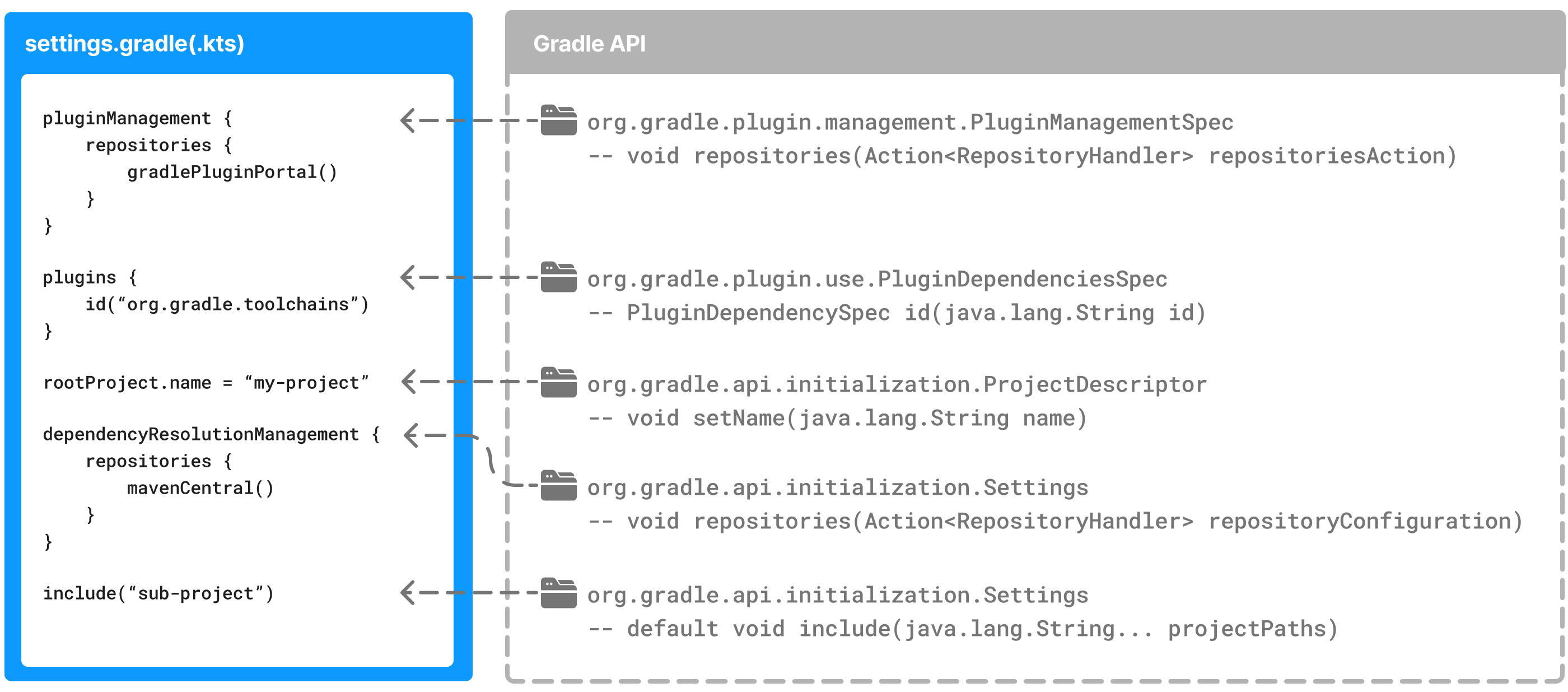

The settings file is the entry point of every Gradle build.

Early in the Gradle Build lifecycle, the initialization phase finds the settings file in your project root directory.

When the settings file settings.gradle(.kts) is found, Gradle instantiates a Settings object.

One of the purposes of the Settings object is to allow you to declare all the projects to be included in the build.

Settings Scripts

The settings script is either a settings.gradle file in Groovy or a settings.gradle.kts file in Kotlin.

Before Gradle assembles the projects for a build, it creates a Settings instance and executes the settings file against it.

As the settings script executes, it configures this Settings.

Therefore, the settings file defines the Settings object.

Important: There is a one-to-one correspondence between a Settings instance and a settings.gradle(.kts) file.

The Settings Object

The Settings object is part of the Gradle API.

Many top-level properties and blocks in a settings script are part of the Settings API.

For example, we can set the root project name in the settings script using the Settings.rootProject property:

settings.rootProject.name = "root"

Which is usually shortened to:

rootProject.name = "root"

Standard Settings properties

The Settings object exposes a standard set of properties in your settings script.

The following table lists a few commonly used properties:

| Name | Description |
|---|---|
| buildCache | The build cache configuration. |
| plugins | The container of plugins that have been applied to the settings. |
| rootDir | The root directory of the build. The root directory is the project directory of the root project. |
| rootProject | The root project of the build. |
| settings | Returns this settings object. |

The following table lists a few commonly used methods:

| Name | Description |
|---|---|
| include() | Adds the given projects to the build. |
| includeBuild() | Includes a build at the specified path to the composite build. |
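A settings script might combine several of these members as in the sketch below. The subproject name and the included build path are hypothetical, and the included build directory must exist for the script to evaluate.

```kotlin
// settings.gradle.kts
rootProject.name = "root"

// rootDir is a java.io.File pointing at the root project directory.
println("Build root directory: $rootDir")

// Configure the local build cache through the Settings build cache configuration.
buildCache {
    local {
        isEnabled = true
    }
}

include("sub-project-a")          // hypothetical subproject
includeBuild("../build-logic")    // hypothetical path to another Gradle build
```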

Settings Script structure

A Settings script is a series of method calls to the Gradle API that often use { … }, a special shortcut in both the Groovy and Kotlin languages.

A { } block is called a lambda in Kotlin or a closure in Groovy.

Simply put, the plugins{ } block is a method invocation in which a Kotlin lambda object or Groovy closure object is passed as the argument.

It is the short form for:

plugins(function() {
    id("plugin")
})

Blocks are mapped to Gradle API methods.

The code inside the function is executed against a this object, called a receiver in a Kotlin lambda and a delegate in a Groovy closure.

Gradle determines the correct this object and invokes the correct corresponding method.

For the method invocation id("plugin"), the this object is of type PluginDependenciesSpec.

The settings file is composed of Gradle API calls built on top of the DSLs. Gradle executes the script line by line, top to bottom.
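The receiver mechanism can be illustrated in plain Kotlin, outside Gradle. In this sketch `PluginSpec` is a hypothetical stand-in for PluginDependenciesSpec, and `plugins` is a toy function rather than Gradle's implementation. It only shows how a block passed to a method can call `id(...)` on an implicit `this`.

```kotlin
// Hypothetical stand-in for Gradle's PluginDependenciesSpec; not the real class.
class PluginSpec {
    val ids = mutableListOf<String>()

    fun id(name: String) {
        ids += name
    }
}

// Takes a lambda with receiver: inside `block`, `this` is a PluginSpec.
fun plugins(block: PluginSpec.() -> Unit): PluginSpec {
    val spec = PluginSpec()
    spec.block()
    return spec
}

fun main() {
    // The braces are just a lambda argument; id(...) resolves against the receiver.
    val spec = plugins {
        id("plugin")
    }
    println(spec.ids) // prints [plugin]
}
```

A Groovy closure achieves the same effect by setting the closure's delegate to the target object before calling it.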

Let’s take a look at an example and break it down:

// settings.gradle.kts
pluginManagement { // (1)
    repositories {
        gradlePluginPortal()
        google()
    }
}

plugins { // (2)
    id("org.gradle.toolchains.fake") version "0.6.0"
}

rootProject.name = "root-project" // (3)

dependencyResolutionManagement { // (4)
    repositories {
        mavenCentral()
    }
}

include("sub-project-a") // (5)
include("sub-project-b")
include("sub-project-c")

// settings.gradle
pluginManagement { // (1)
    repositories {
        gradlePluginPortal()
        google()
    }
}

plugins { // (2)
    id 'org.gradle.toolchains.fake' version '0.6.0'
}

rootProject.name = 'root-project' // (3)

dependencyResolutionManagement { // (4)
    repositories {
        mavenCentral()
    }
}

include('sub-project-a') // (5)
include('sub-project-b')
include('sub-project-c')

- (1) Define the location of plugins.

- (2) Apply plugins.

- (3) Define the root project name.

- (4) Define build-wide repositories.

- (5) Add subprojects to the build.

1. Define the location of plugins

The settings file can optionally define the plugins your project uses with pluginManagement, including binary repositories such as the Gradle Plugin Portal or other Gradle builds using includeBuild:

pluginManagement {

repositories {

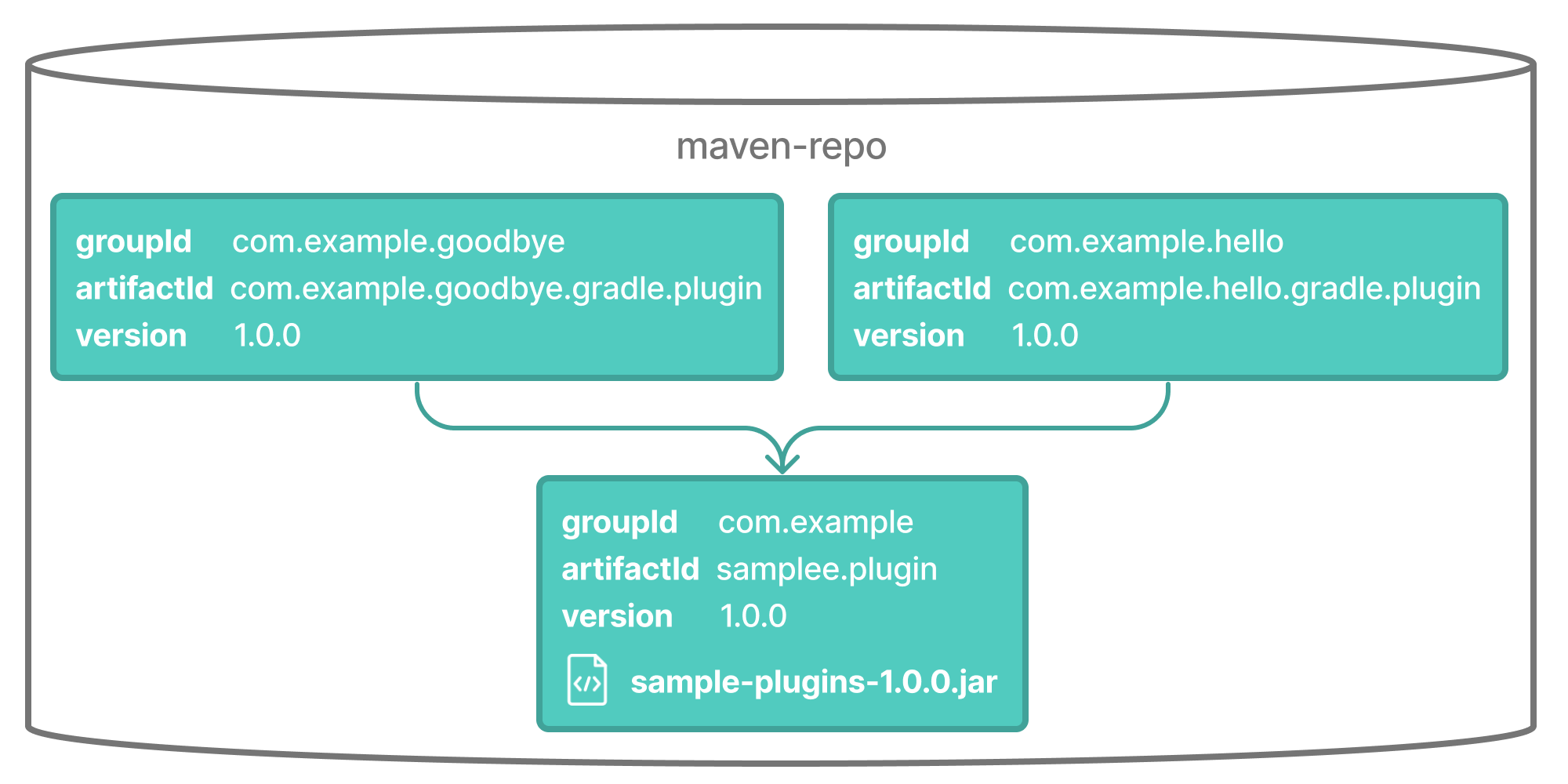

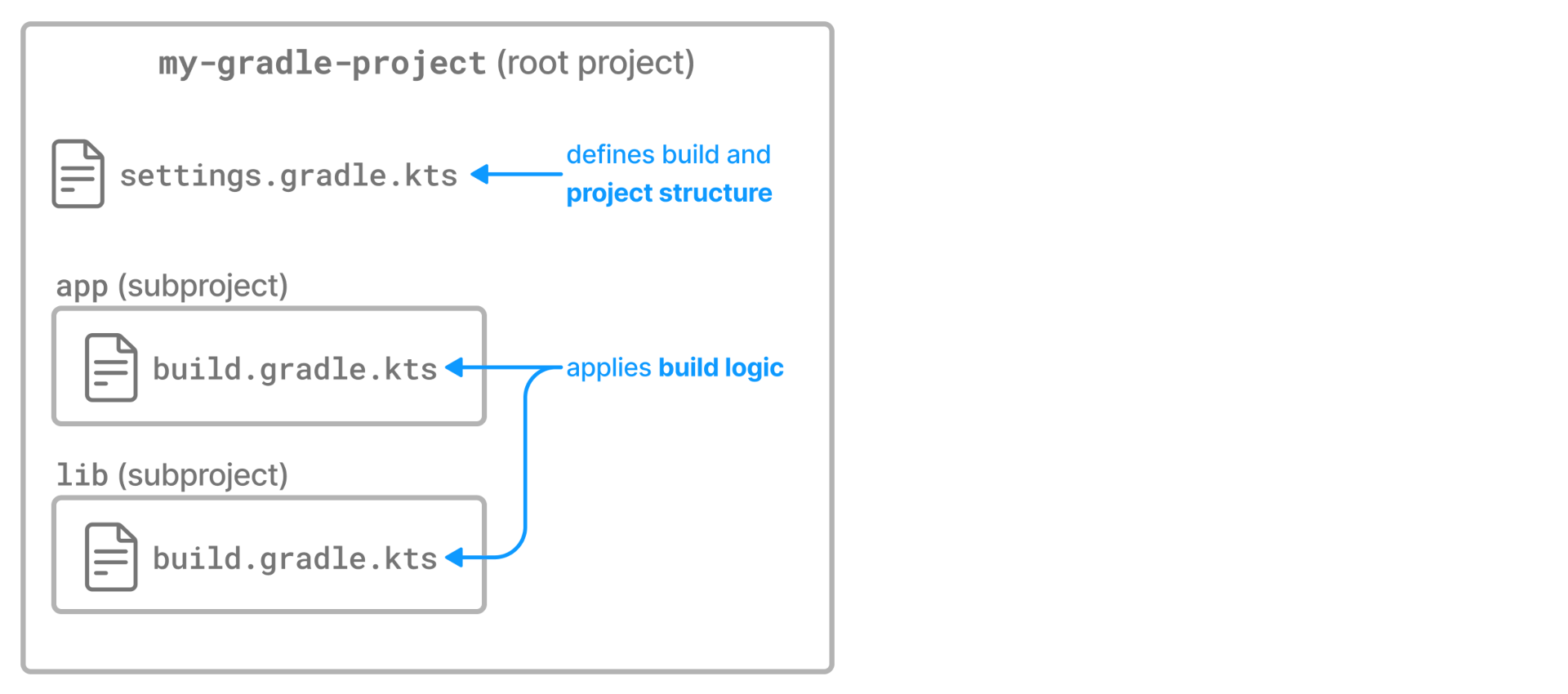

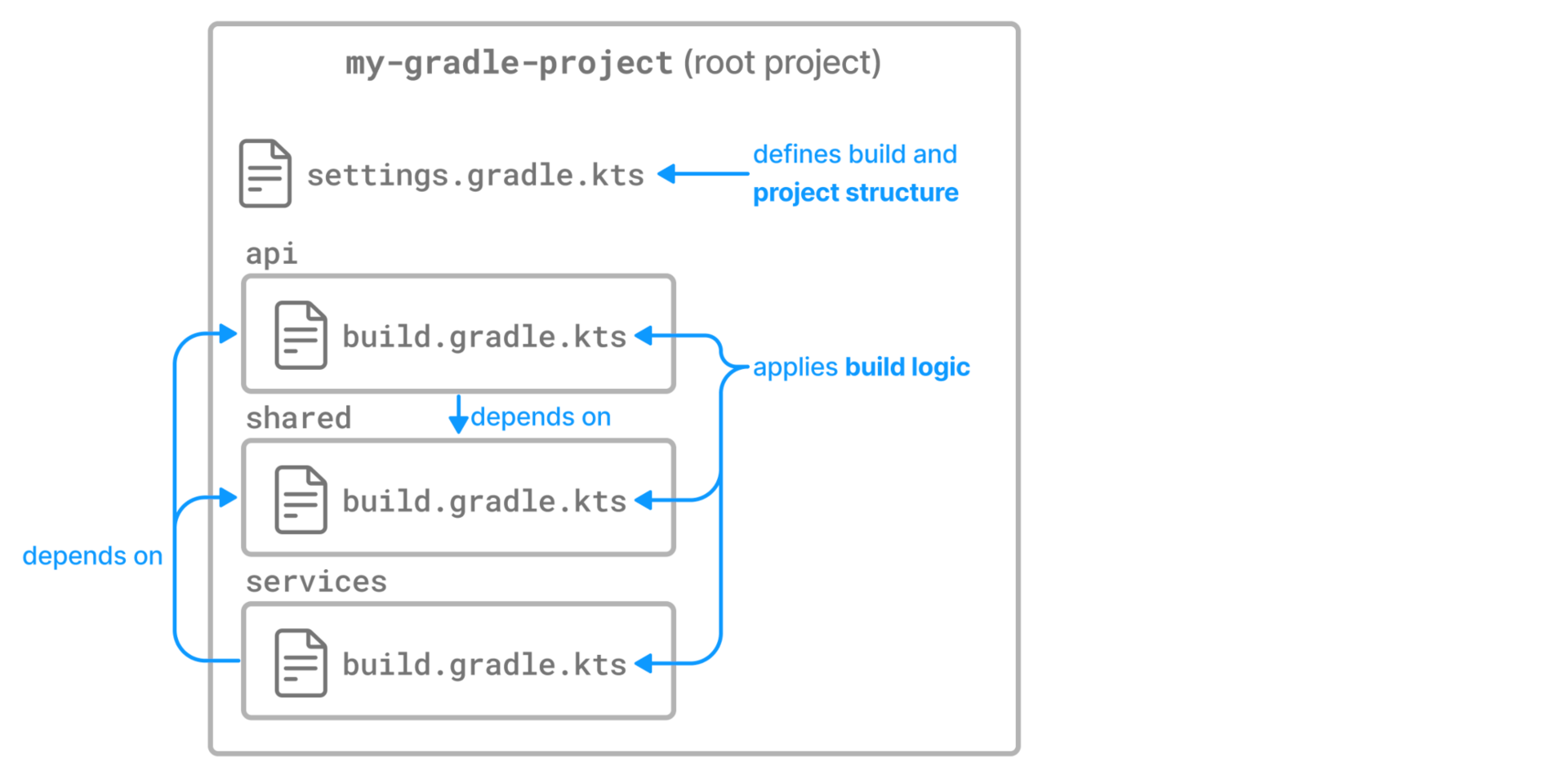

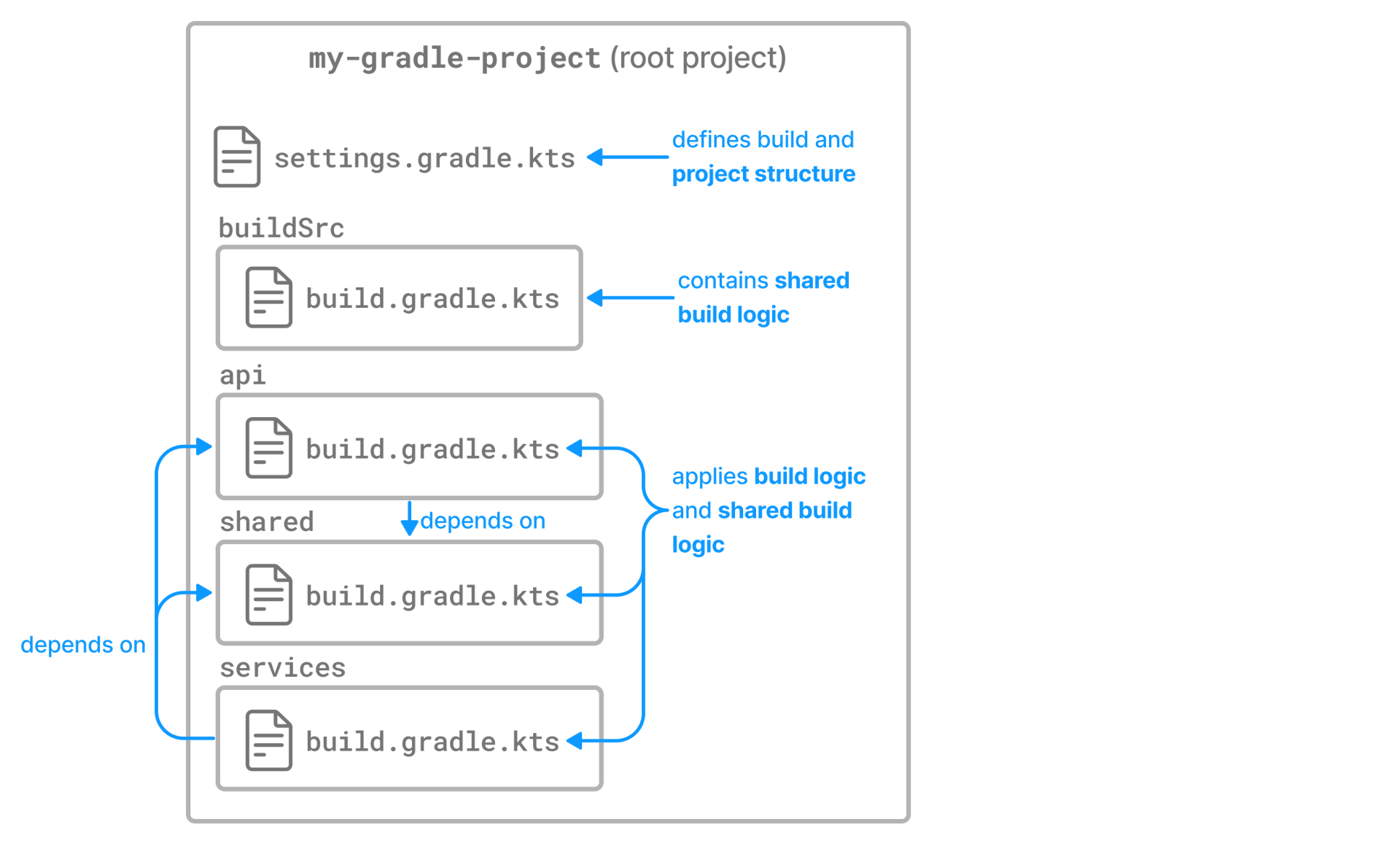

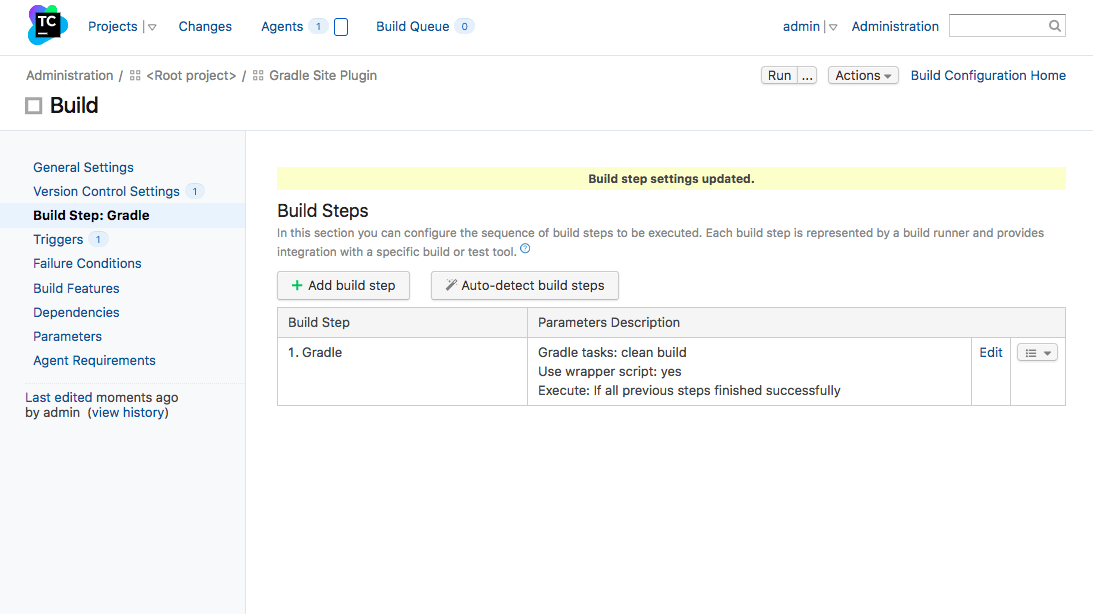

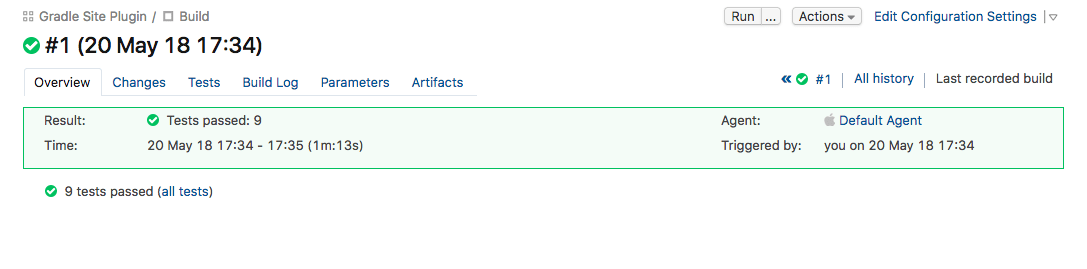

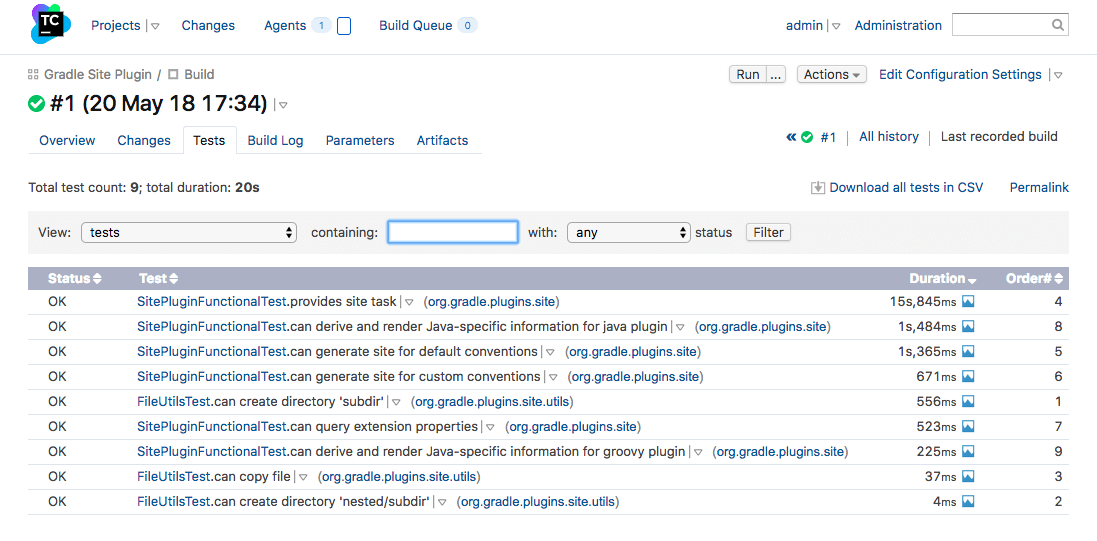

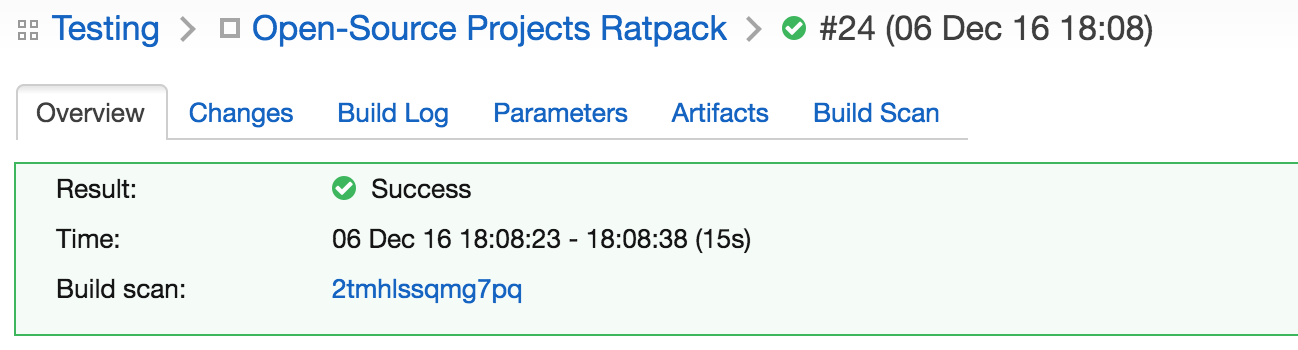

gradlePluginPortal()